TPAC Update on Accessibility in Digital Publishing

Today we invite guest blogger Gerardo Capiel, VP of Engineering at Benetech, who joined the Consortium to participate in the Digital Publishing Interest Group (DPUB IG). This is cross-posted on the Digital Publishing Blog.

In October of this year, Benetech joined the World Wide Web Consortium to more deeply participate in the evolution of standards that enable educational content to be born accessible. Our first opportunity for deep engagement came earlier this month with the W3C Technical Plenary and Advisory Committee (TPAC) meeting in Shenzhen, China. During the weeklong TPAC meeting, many of the W3C Working Groups that develop W3C Recommendations met to advance their projects and provide an opportunity for others to observe and inform. TPAC was also a great opportunity for face-to-face collaboration with others in the field.

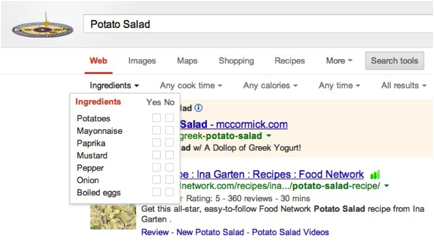

One of the people I had an opportunity to collaborate with in person was Charles McCathie Nevile, aka Chaals, who has long been involved in W3C activities and accessibility. Chaals works for Yandex, one of the mainstream search engines that leverage Schema.org. Schema.org is a collaboration between Bing, Google, Yahoo and Yandex to create and support a standard set of schemas for structured data markup on web pages. These standardized schemas enable webmasters to improve the discoverability of their content. You can experience the benefits of this standardized markup by searching Google for ‘Potato Salad’ and clicking ‘Search Tools’, which lets you filter search results by the properties defined at http://schema.org/Recipe, such as ingredients, cook time or caloric content.

Google search filtered on Schema.org/Recipe properties, such as ingredients.

For the past year, thanks to funding from the Gates Foundation, Benetech has led a working group to propose a set of Schema.org accessibility properties that can be used with existing schemas to enable the discovery of accessible educational resources. The need for a standard set of properties surfaced during our participation as a launch partner for the Learning Registry in 2011. While the Learning Registry can leverage Schema.org properties defined by the Learning Resources Metadata Initiative (LRMI), such as educational alignment, there was no standard set of properties that would enable an educator to find closed-captioned videos for hearing impaired students or algebra textbooks that used MathML – an accessible format for mathematical expressions. Schema.org was the ideal place to define these properties, because they would benefit not only tools such as the Learning Registry, but also broader Open Web Platform technologies and mainstream search engines, such as Google and Yandex.
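To make the idea concrete, here is a minimal sketch of what such metadata could look like for a captioned video, expressed as JSON-LD built from a Python dictionary. The property names `accessibilityFeature` and `accessibilityHazard` and their values come from the Schema.org accessibility vocabulary; the video title and URL are invented for illustration, and the choice of JSON-LD rather than microdata is an assumption of this example.

```python
import json

# Hypothetical catalogue entry: the name and URL are made up for this sketch.
video = {
    "@context": "http://schema.org",
    "@type": "VideoObject",
    "name": "Introduction to Quadratic Equations",
    "url": "http://example.org/videos/quadratics",
    # Accessibility properties that a search engine could index and filter on.
    "accessibilityFeature": ["captions", "transcript"],
    "accessibilityHazard": ["noFlashingHazard"],
}


def to_json_ld(resource: dict) -> str:
    """Serialize a resource description as a JSON-LD string for embedding in a page."""
    return json.dumps(resource, indent=2, sort_keys=True)


json_ld = to_json_ld(video)
```

The resulting string could be placed in a `script` element of type `application/ld+json` on the page that hosts the video.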

During TPAC, Chaals, Markus Gylling (CTO of IDPF and co-chair of the W3C Digital Publishing Interest Group) and I were able to resolve the remaining concerns that Schema.org representatives had with the proposed properties. The following week, Dan Brickley, the editor of Schema.org, publicly announced that Schema.org would be adopting the accessibility properties proposed by the Accessibility Metadata project and IMS Global Access for All.

Soon after Dan’s announcement, the IDPF updated their EPUB 3 Accessibility Guidelines to recommend to the publishing industry the use of those properties with digital textbooks and ebooks. These Guidelines have received broad support from the Association of American Publishers (AAP) and the National Federation of the Blind (NFB). I hope the Guidelines will be instrumental to the ‘Technology, Education and Accessibility in College and Higher Education Act’ (TEACH), newly introduced by U.S. Congressman Tom Petri.

At TPAC I also participated in three W3C group face-to-face meetings. The first was with the Digital Publishing Interest Group (DPUB IG), of which Benetech is a member, along with Adobe, Google, Hachette Livre, IBM, Pearson and many others. The mission of the group is to provide a forum where experts in the digital publishing ecosystem can hold technical discussions and gather use cases and requirements, in order to align the existing formats and technologies needed for digital publishing with those used by the Open Web Platform. The goal is to ensure that the requirements of digital publishing can be answered, when in scope, by the Recommendations published by W3C.

Per the charter of the group, Suzanne Taylor from Pearson and I put together a set of use cases related to accessibility for Digital Publishing. I had the opportunity to discuss the use cases related to image and diagram accessibility with the SVG Working Group, which is preparing the SVG 2.0 specification for final call at the end of this year. Scalable Vector Graphics (SVG) is a format that the AAP EPUB 3 Implementation Project has deemed the third most important feature of EPUB 3 for publishers to adopt. SVG currently contains a number of mechanisms that enhance the accessibility of digital publications, particularly those in the STEM field. As a result, SVG is an excellent standard to build upon to further address the needs of students with disabilities.

The first use case I discussed was SVG as a fallback and bridging solution for the accessibility of mathematical expressions. Currently, MathML is recommended as the format that publishers should use for accessibility. However, MathML adoption among traditional reading systems has been abysmal and there is no sign that it will soon improve. Google’s Chrome browser recently dropped support for MathML, and MathPlayer, a popular Microsoft Internet Explorer (IE) plug-in used by students with disabilities, is no longer supported in IE 11. Furthermore, MathML does not work with many mainstream reading systems, such as the Kindle, and even when there is visual rendering support, there is little to no accessibility support. As a result, many publishers, such as O’Reilly and Inkling, have resorted to converting mathematical expressions from MathML to SVG or PNG graphics, which either results in a loss of the information needed by blind or vision impaired (BVI) students or increases the cost to publishers of complying with accessibility requirements.

My proposal to the SVG Working Group was for the SVG 2.0 specification to support embedding MathML within SVG along with granular verbal descriptions of the expression as a lowest common denominator for assistive technology. This approach would broaden compatibility and not take away information that could be leveraged by future assistive technologies. I also recommended that SVG express explicit support for the recently drafted ARIA 1.1 describedAt property that enables MathML and corresponding verbal descriptions to also be referenced by an external URI (URL). This URL would provide access to the source MathML and alternative formats, such as Nemeth Braille, and would enable educators and disability services professionals to correct and improve MathML markup, which may render correctly visually, but poorly aurally.

The SVG group was very open and responsive to my proposal, and Richard Schwerdtfeger from IBM took the first action by adding support for aria-describedat to the SVG 2.0 specification draft. With these standards in place, our plans to take to market a prototyped tool for publishers and other content creators to convert MathML to described SVG become even more compelling.

Next, I discussed with the SVG Working Group the research and development that Benetech had undertaken to use Open Web Platform technologies to implement the sonification features of MathTrax. MathTrax is a graphing tool that lets blind and low vision middle and high school students access visual math data and graph or experiment with equations and datasets.

Doug Schepers, a staff member of the W3C, demonstrated a project we had collaborated on to sonify graphs of mathematical expressions, such as a parabola, using the Web Audio API and the nascent Web Speech API supported in Safari 6.1 and the upcoming Chrome 33. We discussed that, in order to generalize this approach to work with SVG graphs generated by other tools, such as D3.js, we needed standard semantics to identify which SVG elements represented the data and the x and y axes. Richard Schwerdtfeger suggested that new ARIA roles be enumerated for this purpose. I look forward to moving this forward with Rich.
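As a rough illustration of the core idea behind sonifying a graph, the sketch below maps the y values of a parabola onto audio frequencies, so that higher points on the curve sound higher in pitch. This is not the algorithm used by MathTrax or by our demo; the linear mapping, the 220 Hz to 880 Hz range and the function names are all assumptions made for the example. In a browser, frequencies like these could be fed to a Web Audio oscillator.

```python
def parabola(x: float) -> float:
    """The expression being graphed: y = x squared."""
    return x * x


def sonify(ys, low_hz=220.0, high_hz=880.0):
    """Map each y value linearly onto a frequency between low_hz and high_hz."""
    y_min, y_max = min(ys), max(ys)
    span = y_max - y_min
    if span == 0:
        # A flat line has no variation, so play the middle of the range.
        mid = (low_hz + high_hz) / 2
        return [mid for _ in ys]
    return [low_hz + (y - y_min) / span * (high_hz - low_hz) for y in ys]


# Sample the curve from x = -2.0 to x = 2.0 in steps of 0.5.
xs = [x / 2 for x in range(-4, 5)]
ys = [parabola(x) for x in xs]
freqs = sonify(ys)
```

Played left to right, the tones fall toward the vertex of the parabola and rise again on the other side.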

One of the advantages of the SVG format for accessible images and graphs is that textual descriptions of the whole image or individual elements can be included within SVG. This is superior to the use of the alt attribute with HTML image elements, because descriptions can be lengthier than those typically used with the alt attribute and they are portable with the image content. Unfortunately, SVG descriptions are limited to text and can’t use rich HTML markup to incorporate tables, lists or links. I recommend reading some of the recommendations by the DIAGRAM Center on the use of structured elements for image descriptions, particularly in the STEM field.
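Here is a small sketch of how descriptions travel with an SVG image, using the standard SVG `title` and `desc` elements on both the whole graphic and one of its shapes. It is built with Python's standard `xml.etree.ElementTree` module; the `describe` helper, the path data and the wording of the descriptions are invented for this example.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"
# Serialize SVG elements without a namespace prefix.
ET.register_namespace("", SVG_NS)


def describe(element, title, desc):
    """Attach <title> and <desc> children to an SVG element (hypothetical helper)."""
    t = ET.SubElement(element, f"{{{SVG_NS}}}title")
    t.text = title
    d = ET.SubElement(element, f"{{{SVG_NS}}}desc")
    d.text = desc
    return element


# Describe the image as a whole.
svg = ET.Element(f"{{{SVG_NS}}}svg", {"viewBox": "0 0 100 100"})
describe(svg, "Graph of y = x squared",
         "A parabola opening upward with its lowest point in the middle.")

# Describe one individual element inside the image.
curve = ET.SubElement(svg, f"{{{SVG_NS}}}path",
                      {"d": "M 10 10 Q 50 90 90 10", "fill": "none", "stroke": "black"})
describe(curve, "Curve", "The curve falls from the left, bottoms out, then rises to the right.")

markup = ET.tostring(svg, encoding="unicode")
```

Because the descriptions are children of the elements they describe, they stay with the graphic wherever the file is reused, but they remain plain text.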

I was in luck with this use case as the SVG Working Group was already considering supporting HTML within SVG 2.0. My use case was further confirmation that SVG could benefit from this support and an action was created specific to this use case.

Because we need a way to deal with back titles that don’t leverage these standards for rich image descriptions or have insufficient descriptions, I discussed the need for mechanisms to support the crowdsourcing or post-production addition of image descriptions to titles that have already been published. As I discussed above, ARIA 1.1’s describedAt property is one enabling standard that tools, such as DIAGRAM Center’s Poet, can leverage. Markus Gylling and I also believe that the emergent Open Annotations specification provides another approach. The good news is that Doug Schepers from the SVG Working Group believes this will be possible, based on his talks with the developers at Hypothes.is, who are building one of the first tools to leverage the Open Annotations specification.

Besides aural and textual modalities, the content of SVG graphs can also be conveyed via tactile modalities. Educators of blind and visually impaired students have commonly used tactile graphics to make graphical content accessible. SVG is the recommended digital format for creating tactile graphics that can be printed with specialized printers called embossers. These specialized printers are expensive, so the DIAGRAM Center and others have been funding and conducting research on the use of 3D printers for making 2D SVG graphics accessible. Given MakerBot’s recently announced mission to put a 3D printer in every classroom, this is very exciting.

Haptics are another promising technology for making SVG graphics accessible via a tactile modality. The DIAGRAM Center recently partnered with ETS to research tablet-based haptic display of graphical information and explore the inclusion of this technology in EPUB 3 textbooks.

Ideally, the same SVG graphic could be printed with ink, an embosser or a 3D printer. Based on discussions with the SVG Working Group, we determined that a solution could be to leverage CSS media queries, which today are used to format web pages for print. An action item was taken for Doug Schepers and Tab Atkins, who also sits on the CSS Working Group, to work on tactile, 3D and haptic media queries for SVG.

Finally, I presented to the SVG Working Group the need for SVG images to be easily reusable within a document and across documents without the need for the HTML image element, which limits many of the advanced capabilities of SVG. The SVG Working Group recommended that iframes be used to inline the same SVG graphic across multiple locations in a document. This seems like a reasonable approach and I will be further discussing it with our DIAGRAM Center partners, the DPUB IG and developers of assistive technologies.

I was quite pleased with how open and curious the SVG Working Group was to issues around accessibility. This was exactly the type of collaboration that the Digital Publishing Interest Group was meant to create.

My last day at TPAC was spent with the W3C Independent User Interface (Indie UI) Working Group. Their mission is to facilitate interaction in Web applications that is independent of input method, and hence accessible to people with disabilities. For example, Web application authors who currently wish to intercept a user’s intent to ‘zoom in’ on a map view need to ‘listen’ for a wide range of events that vary by operating system or device, such as CTRL-Plus, Command-Plus, pinch/zoom touch gestures, etc. Assistive technologies further expand and complicate the possible interactions that web developers must account for.
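The sketch below illustrates the general idea of input independence: many device-specific inputs are translated into one abstract intent, and the application only handles the intent. It does not reproduce the Indie UI draft; the input labels, the intent names and the `MapView` class are all hypothetical and chosen only for this example.

```python
from typing import Optional

# Hypothetical table translating device-specific inputs into abstract intents.
RAW_TO_INTENT = {
    ("keyboard", "Ctrl+Plus"): "zoom-in",
    ("keyboard", "Cmd+Plus"): "zoom-in",
    ("touch", "pinch-out"): "zoom-in",
    ("keyboard", "Ctrl+Minus"): "zoom-out",
    ("keyboard", "Cmd+Minus"): "zoom-out",
    ("touch", "pinch-in"): "zoom-out",
}


def to_intent(source: str, raw_input: str) -> Optional[str]:
    """Return the abstract intent for a raw input, or None if it is not recognized."""
    return RAW_TO_INTENT.get((source, raw_input))


class MapView:
    """A toy map that reacts to intents only, never to raw device events."""

    def __init__(self) -> None:
        self.zoom = 1

    def handle(self, intent: Optional[str]) -> None:
        if intent == "zoom-in":
            self.zoom += 1
        elif intent == "zoom-out":
            # Never zoom out past the initial level.
            self.zoom = max(1, self.zoom - 1)
        # Unrecognized or missing intents are ignored.
```

With this separation, supporting a new input method such as an assistive technology means adding entries to the translation layer rather than changing every application.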

Ideally, content and applications automatically adapt to the user’s preferences. To make this possible, the Indie UI team is also drafting a user context specification. The Indie UI team was very interested in learning about our work with Schema.org to specify accessibility properties. One use case enabled by leveraging both of these specifications is applications that automatically narrow search results for users based on their preferences. For example, if the user context indicated that the user had a preference for videos with captions, then a search query could automatically narrow results to videos with captions.
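A minimal sketch of that use case follows, assuming search results that carry the Schema.org `accessibilityFeature` property and a user context reduced to a list of required features. The `requiredFeatures` key and the sample results are invented for illustration; the user context draft defines its own vocabulary, which this example does not attempt to reproduce.

```python
# Hypothetical search results annotated with Schema.org accessibility metadata.
RESULTS = [
    {"name": "Photosynthesis lecture", "accessibilityFeature": ["captions"]},
    {"name": "Cell division animation", "accessibilityFeature": []},
    {"name": "Algebra I textbook", "accessibilityFeature": ["MathML", "alternativeText"]},
]

# Hypothetical user context: this user prefers content with captions.
USER_CONTEXT = {"requiredFeatures": ["captions"]}


def narrow(results, user_context):
    """Keep only the results that offer every feature the user requires."""
    required = set(user_context.get("requiredFeatures", []))
    return [
        r for r in results
        if required <= set(r.get("accessibilityFeature", []))
    ]


matches = narrow(RESULTS, USER_CONTEXT)
```

A user with no stated preferences gets the full result list back, so the filter only ever narrows results in response to an explicit preference.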

I’m very excited to see all these standards coming together to enable a born accessible future and to let us reap its benefits. I’d like to thank the W3C for putting on a great event, which crystallized for me the importance of the W3C’s mission.
