Multimodal Interaction Working Group Charter

The mission of the Multimodal Interaction Working Group, part of the Multimodal Interaction Activity, is to develop open standards that enable the following vision:

- Extending the Web to allow multiple modes of interaction:

- GUI, Speech, Vision, Pen, Gestures, Haptic interfaces, ...

- Anyone, Anywhere, Any device, Any time

- Accessible through the user's preferred modes of interaction with services that adapt to the device, user and environmental conditions

| End date | 31 March 2014 |
|---|---|
| Confidentiality | Proceedings are Member-only, but the group sends regular summaries of ongoing work to the public mailing list. |
| Initial Chairs | Deborah Dahl |
| Initial Team Contacts (FTE %: 20) | Kazuyuki Ashimura |
| Usual Meeting Schedule | Teleconferences: weekly (1 main group call and 3 task force calls). Face-to-face: as required, up to three per year. |

Scope

Background

The primary goal of this group is to develop W3C Recommendations that enable multimodal interaction with various devices including desktop PCs, mobile phones and less traditional platforms such as cars and intelligent home environments including digital TVs/connected TVs. The standards should be scalable to enable richer capabilities for subsequent generations of multimodal devices.

Users will be able to provide input via speech, handwriting, motion or keystrokes, with output presented via displays, pre-recorded and synthetic speech, audio, and tactile mechanisms such as mobile phone vibrators and Braille strips. Application developers will be able to provide an effective user interface for whichever modes the user selects. To encourage rapid adoption, the same content can be designed for use on both old and new devices.

The multimodal access available to a user depends on the device used. For example, users of multimodal devices that include not only keypads but also a touch panel, microphone and motion sensor can use all the possible modalities, while users of devices with restricted capabilities may prefer simpler and lighter modalities such as keypads and voice. Extensibility to new modes of interaction is important, especially in the context of the current proliferation of types of sensor input, for example, in biometric identification and medical applications.

Target Audience

The target audience of the Multimodal Interaction Working Group is vendors and service providers of multimodal applications, and should include a range of organizations in different industry sectors, such as:

- Mobile and hand-held devices

- As a result of increasingly capable networks, devices, and speech recognition technology, the number of multimodal applications, especially mobile applications, is growing rapidly. Multimodal Voice Search in particular is a relatively new and compelling use case, and has been implemented in applications by a number of companies, including Google, Microsoft, Yahoo, Vlingo, SpeechCycle, Novauris, AT&T, Openstream, Vocalia, Metaphor Solutions and Sound Hound. Speech offers a welcome means to interact with smaller devices, allowing one-handed and hands-free operation. Users benefit from being able to choose which modalities they find convenient in any situation. The Working Group should be of interest to companies that develop smart phones and personal digital assistants, or that are interested in providing tools and technology to support the delivery of multimodal services to such devices.

Please note that a related effort has recently been initiated in the W3C by the HTML Speech Incubator Group (HTML Speech XG). The focus of the XG is developing proposals for accessing speech recognition and speech synthesis from HTML5 browsers, and Voice Search and Speech Command Interfaces are possible use cases for these technologies in the browser. However, the XG does not attempt to address modalities other than speech, such as handwriting, emotion, or the wide variety of present and future input modalities. Similarly, it doesn't attempt to address non-browser contexts. In contrast, the Multimodal Architecture provides a generic framework for modality integration and control. Speech in the browser can be seen as a special case of the kind of modality integration covered by the MMI Architecture. The Multimodal Interaction Working Group has been collaboratively working with the XG, and will continue to liaise with them on topics of common interest. For example, the XG has adopted EMMA as a speech recognition result format.

- Home appliances, e.g., TV, and home networks

- Multimodal interfaces are expected to add value to remote control of home entertainment systems, as well as finding a role for other systems around the home. Companies involved in developing embedded systems and consumer electronics should be interested in W3C's work on multimodal interaction.

- Enterprise office applications and devices

- Multimodal interaction has benefits for desktops, wall-mounted interactive displays, multi-function copiers and other office equipment, offering a richer user experience and the chance to add modalities like speech and pens to existing modalities like keyboards and mice. W3C's standardization work in this area should be of interest to companies developing client software and application authoring technologies, and who wish to ensure that the resulting standards live up to their needs.

- Intelligent IT ready cars

- With the emergence of dashboard integrated high resolution color displays for navigation, communication and entertainment services, W3C's work on open standards for multimodal interaction should be of interest to companies working on developing the next generation of in-car systems.

- Medical applications

- Mobile healthcare professionals and practitioners of telemedicine will

benefit from multimodal standards for interactions with remote patients

as well as for collaboration with distant colleagues.

Work to do

- Multimodal Architecture and Interfaces (MMI Architecture)

- Bring the MMI Architecture to Recommendation: finalize generic architecture and define profiles for using the MMI Architecture with specific use cases

To assist with realizing this goal, the Multimodal Interaction Working Group is tasked with providing a loosely coupled architecture for multimodal user interfaces, which allows for co-resident and distributed implementations, and focuses on the role of markup and scripting, and the use of well defined interfaces between its constituents. The framework is motivated by several basic design goals including (1) Encapsulation, (2) Distribution, (3) Extensibility, (4) Recursiveness and (5) Modularity.

Where practical, this should leverage existing W3C work. See the list of dependencies for information on related groups.

The Working Group may expand the Multimodal Architecture and Interfaces document and include exploration of some language definition which would make it easier to adapt existing I/O devices and software, e.g., an MRCP-enabled ASR engine, to interact with the framework of the life cycle events defined in the architecture.

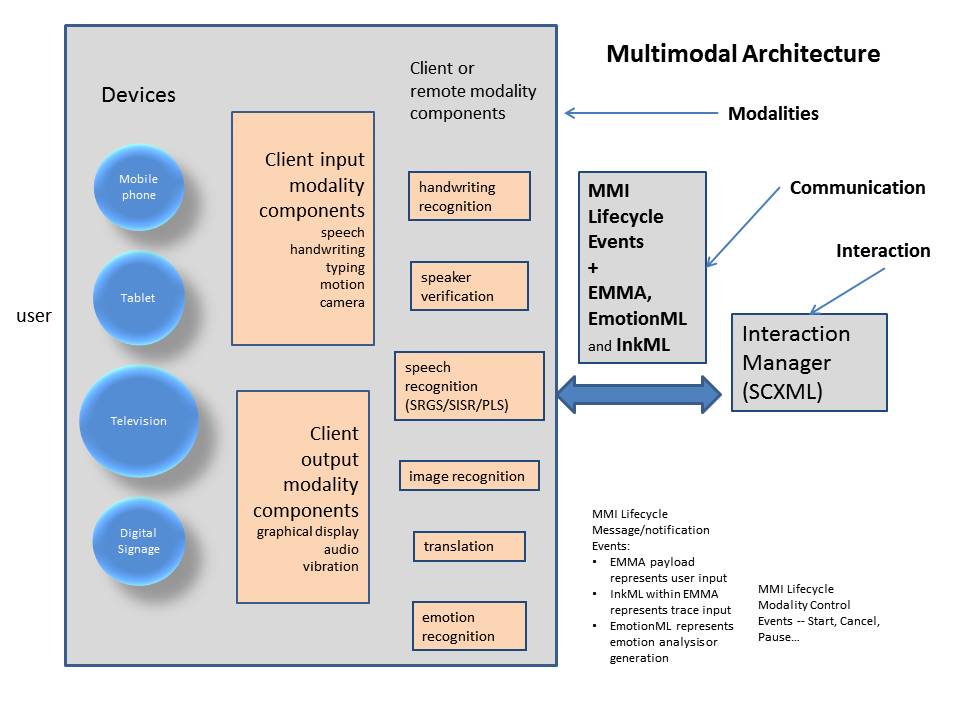

- As the diagram shows, the Multimodal Architecture includes Modality Components, which process specific modalities such as speech or handwriting, an Interaction Manager, which coordinates processing among the Modality Components, and the Life Cycle events, which support communication between the Interaction Manager and the Modality Components. Modality-specific processing takes place on one or more devices, or remotely. A Life Cycle Event can carry control information or user input. When a Life Cycle Event carries user input as a payload, it uses EMMA, a generic, modality-independent format for representing user input. Within EMMA, the user input can be represented, for example, as EmotionML, InkML, or the semantically interpreted results of speech recognition, depending on the input modality. The Multimodal Architecture also makes use of related standards, as appropriate, such as SRGS, SISR, PLS, and SCXML, developed by the W3C Voice Browser Working Group.
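The division of roles described above can be illustrated with a minimal Python sketch. It is not taken from the MMI Architecture specification: the class names, the field names and the choice of a `StartRequest` answered by a `StartResponse` and a later `DoneNotification` are assumptions made for illustration, and the payload string stands in for a real EMMA document.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class LifeCycleEvent:
    """One message exchanged between the Interaction Manager and a Modality Component."""
    name: str                   # event type, e.g. "StartRequest" (illustrative)
    source: str                 # identifier of the sender
    target: str                 # identifier of the receiver
    context: str                # identifies the interaction the event belongs to
    data: Optional[str] = None  # optional payload, e.g. EMMA carrying user input


class ModalityComponent:
    """Toy component that returns a canned user input when it is started."""

    def __init__(self, name: str, canned_input: str) -> None:
        self.name = name
        self.canned_input = canned_input

    def handle(self, event: LifeCycleEvent) -> List[LifeCycleEvent]:
        if event.name != "StartRequest":
            return []  # this toy component ignores every other event
        # Control information: acknowledge the request.
        ack = LifeCycleEvent("StartResponse", self.name, event.source, event.context)
        # User input: report completion with the recognized input as payload.
        done = LifeCycleEvent("DoneNotification", self.name, event.source,
                              event.context, data=self.canned_input)
        return [ack, done]


class InteractionManager:
    """Coordinates components; components never address each other directly."""

    def __init__(self, name: str = "im") -> None:
        self.name = name
        self.components: Dict[str, ModalityComponent] = {}
        self.log: List[LifeCycleEvent] = []

    def add(self, component: ModalityComponent) -> None:
        self.components[component.name] = component

    def start(self, target: str, context: str) -> List[LifeCycleEvent]:
        request = LifeCycleEvent("StartRequest", self.name, target, context)
        replies = self.components[target].handle(request)
        self.log.append(request)
        self.log.extend(replies)
        return replies


im = InteractionManager()
im.add(ModalityComponent("speech", "placeholder for an EMMA document"))
replies = im.start("speech", context="ctx-1")
print([event.name for event in replies])  # ['StartResponse', 'DoneNotification']
```

Because every exchange passes through the Interaction Manager as an event, the same sketch would apply whether a component runs in the same process or on a remote device; only the transport behind `handle` would change.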

- Modality Components Registration & Discovery

The Working Group will investigate and recommend how the Modality Components in the MMI Architecture, which are responsible for controlling the input and output modalities on various devices, can register their existence and capabilities with the Interaction Manager. Examples of registration information include capabilities such as supported languages, ink capture and playback, media capture and playback (audio, video, images, sensor data), speech recognition, text to speech, SVG, geolocation and emotion.

It should be noted that a single Modality Component in the MMI Architecture can potentially encompass multiple physical devices, and more than one Modality Component could be included in a single device. Given that distinction, the focus of this group is to investigate and document the process for discovery of Modality Components and their capabilities, rather than the discovery of devices and services, which may have been addressed by other working groups, e.g., the Device APIs Working Group. The Multimodal Interaction Working Group itself will not define any JavaScript APIs for device discovery or registration.

The group will produce a document that defines in detail the format and process for registration of Modality Components, as well as the process by which the Interaction Manager discovers the existence and current capabilities of Modality Components. This document is expected to become a Recommendation Track document.
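Since the registration format is still to be defined, the following Python sketch only illustrates the idea of registration and discovery described here. Every name in it (`Registration`, `Registry`, `discover`, the capability strings and component ids) is hypothetical. The registry is keyed by Modality Component rather than by device, reflecting the distinction drawn above.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional


@dataclass(frozen=True)
class Registration:
    """What a Modality Component announces about itself (hypothetical format)."""
    component_id: str
    capabilities: FrozenSet[str]
    languages: FrozenSet[str] = frozenset()


class Registry:
    """Registration store as it might be kept by an Interaction Manager."""

    def __init__(self) -> None:
        self._entries: Dict[str, Registration] = {}

    def register(self, registration: Registration) -> None:
        # Registering again under the same id replaces the earlier entry,
        # so a component can update its current capabilities.
        self._entries[registration.component_id] = registration

    def unregister(self, component_id: str) -> None:
        self._entries.pop(component_id, None)

    def discover(self, capability: str, language: Optional[str] = None) -> List[str]:
        """Return ids of components offering `capability`, optionally for one language."""
        matches = []
        for registration in self._entries.values():
            if capability not in registration.capabilities:
                continue
            if language is not None and language not in registration.languages:
                continue
            matches.append(registration.component_id)
        return sorted(matches)


registry = Registry()
registry.register(Registration("tv", frozenset({"media playback"})))
registry.register(Registration(
    "phone-speech",
    frozenset({"speech recognition", "text to speech"}),
    frozenset({"en", "ja"}),
))
print(registry.discover("speech recognition", language="ja"))  # ['phone-speech']
```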

- Device handling capability

A clear and concrete description of how to handle device interaction within the context of the MMI Architecture is expected. For example, device vendors need to know how to integrate the MMI Architecture with existing device handling standards like DLNA and Echonet. The group will therefore consider publishing guidelines for integrating the MMI Architecture with existing device handling standards. Depending on the expertise of WG participants, the group may prepare a Working Group Note on how to integrate existing device handling standards, e.g., DLNA, with the MMI Architecture. The results could be included in the EMMA 2.0 specification. It is also possible that a specific language for a CE device handling Modality Component, e.g., Device Handling Markup Language (DHML), will be defined.

Please note that the Device APIs Working Group works to standardize client-side APIs that enable the development of Web Applications on browsers and Web Widgets. However, the scope of the Multimodal Interaction Working Group covers not only handling device capability within a single client device but also interaction among multiple independent devices acting as Modality Components. The Multimodal Interaction Working Group will liaise with groups that work on device capability, including the Device APIs Working Group, to discuss what kind of features should be considered, not only from the MMI viewpoint but also from the other groups' viewpoints.

- Extensible Multi-Modal Annotations (EMMA)

EMMA is a data exchange format for the interface between different levels of input processing and interaction management in multimodal and voice-enabled systems. It provides the means for input processing components, such as speech recognizers, to annotate application-specific data with information such as confidence scores, time stamps, and input mode classification (e.g. key strokes, touch, speech, or pen). EMMA also provides mechanisms for representing alternative recognition hypotheses, including lattices, as well as groups and sequences of inputs. EMMA is a target data format for the semantic interpretation specification developed in the W3C Voice Browser Activity, which defines augmentations to speech grammars that allow extraction of application-specific data as a result of speech recognition. EMMA supersedes earlier work on the natural language semantics markup language (NLSML) in the Voice Browser Activity.
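As a rough illustration of these annotations, the Python sketch below parses a small EMMA 1.0 document holding two alternative recognition hypotheses and picks the one with the highest confidence. The sample is written in the style of the examples in the EMMA 1.0 Recommendation; the application elements `origin` and `destination` and the helper `best_interpretation` are assumptions made for this example.

```python
import xml.etree.ElementTree as ET

EMMA_NS = "http://www.w3.org/2003/04/emma"

# Two competing hypotheses for one spoken input, wrapped in emma:one-of.
SAMPLE = """
<emma:emma version="1.0" xmlns:emma="http://www.w3.org/2003/04/emma">
  <emma:one-of id="r1" emma:medium="acoustic" emma:mode="voice">
    <emma:interpretation id="int1" emma:confidence="0.75"
                         emma:tokens="flights from boston to denver">
      <origin>Boston</origin>
      <destination>Denver</destination>
    </emma:interpretation>
    <emma:interpretation id="int2" emma:confidence="0.68"
                         emma:tokens="flights from austin to denver">
      <origin>Austin</origin>
      <destination>Denver</destination>
    </emma:interpretation>
  </emma:one-of>
</emma:emma>
""".strip()


def best_interpretation(emma_xml: str) -> dict:
    """Return the interpretation with the highest emma:confidence value."""
    root = ET.fromstring(emma_xml)
    candidates = root.findall(f".//{{{EMMA_NS}}}interpretation")
    if not candidates:
        raise ValueError("no emma:interpretation element found")

    def confidence(element: ET.Element) -> float:
        # Treat a missing annotation as zero confidence (an assumption).
        return float(element.get(f"{{{EMMA_NS}}}confidence", "0"))

    best = max(candidates, key=confidence)
    return {
        "id": best.get("id"),
        "confidence": confidence(best),
        "tokens": best.get(f"{{{EMMA_NS}}}tokens"),
        # Application-specific data is whatever the interpretation contains.
        "data": {child.tag: child.text for child in best},
    }


result = best_interpretation(SAMPLE)
print(result["id"], result["data"])  # int1 {'origin': 'Boston', 'destination': 'Denver'}
```

The annotations live in the EMMA namespace while the application data stays in the application's own vocabulary, which is what lets one consumer handle results from speech, ink or other recognizers uniformly.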

EMMA Ver. 1.0 became a W3C Recommendation in February 2009 and has been implemented by more than 10 different companies and institutions. In the period defined by this charter, the group will actively maintain the existing EMMA 1.0 specification and address any issues that arise with it. The group will publish a new EMMA 1.1 version of the specification which incorporates new features that address issues brought up through EMMA implementations:

- Support for a broader range of semantic representation formats

- Revisions to the grammar mechanism to enable inline specification of grammars and specification of both active and matching grammars

- Clarification of medium and mode annotations and extensions to align EMMA with EmotionML

The group will publish a new requirements document for more extensive features that have been requested such as:

- Representation of multimodal outputs for information presentation and embodied conversational agents

- Extended support for multimodal corpora annotation and logging

- Biometric processing

The group may publish an updated 2.0 version of the EMMA specification with the above capabilities.

- EmotionML

Bring Emotion Markup Language to Recommendation.

EmotionML provides representations of emotions for technological applications in three different areas: (1) manual annotation of data; (2) automatic recognition of emotion-related states from user behavior; and (3) generation of emotion-related system behavior.
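A small Python sketch may help make the annotation use case concrete. It serializes one emotion annotation and reads it back. The namespace URI, the `category-set` attribute, the `confidence` attribute and the "big6" vocabulary URI follow our reading of the EmotionML drafts and should be treated as assumptions to verify against the specification; the `annotate` helper is hypothetical.

```python
import xml.etree.ElementTree as ET

# Assumed values; check them against the EmotionML specification before use.
EMOTIONML_NS = "http://www.w3.org/2009/10/emotionml"
BIG6 = "http://www.w3.org/TR/emotion-voc/xml#big6"


def annotate(category: str, confidence: float) -> str:
    """Serialize a single emotion annotation as an EmotionML document."""
    # Emit EmotionML as the default namespace instead of a generated prefix.
    ET.register_namespace("", EMOTIONML_NS)
    root = ET.Element(f"{{{EMOTIONML_NS}}}emotionml", {"version": "1.0"})
    emotion = ET.SubElement(
        root, f"{{{EMOTIONML_NS}}}emotion", {"category-set": BIG6}
    )
    ET.SubElement(
        emotion,
        f"{{{EMOTIONML_NS}}}category",
        {"name": category, "confidence": f"{confidence:.2f}"},
    )
    return ET.tostring(root, encoding="unicode")


document = annotate("happiness", 0.8)
print(document)

# Reading the annotation back, as a consuming application might do.
parsed = ET.fromstring(document)
category = parsed.find(f"{{{EMOTIONML_NS}}}emotion/{{{EMOTIONML_NS}}}category")
print(category.get("name"), category.get("confidence"))  # happiness 0.80
```

The same document shape could come from a human annotator, an automatic recognizer, or serve as input to a system that generates expressive behavior, which are the three areas named above.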

EmotionML progressed well under the previous charter and received positive feedback at the W3C Workshop on Emotion Markup Language.

According to the workshop minutes, the following organizations participated in the workshop:

- Cantoche

- Deutsche Telekom

- DFKI

- Dublin Institute of Technology

- Keio University

- Loquendo

- nViso

- Queens University of Belfast

- Roma Tre University

- Telecom ParisTech

- University of Greenwich

All of them were interested in adding support for EmotionML in their products and/or research prototypes in the following domains:

- Affective monitoring / emotion detection to verify customer reactions to advertisements;

- Expressive speech synthesis, generating synthetic speech with different emotions;

- Generation of expressive behavior for animated virtual characters displayed on web pages;

- Support for annotating emotions in audio and video resources using crowdsourcing platforms;

- Opinion mining / sentiment analysis from text;

- Modeling and induction of group emotion in disaster recovery training sessions.

In addition to these plans, EmotionML also has the potential to support applications for people with disabilities, such as educational programs for people with autism and "emotion captioning" for people who are unable to hear emotions expressed by voice or who are unable to see emotions expressed on the face.

In order to assess the potential impact of emotion annotation for the web, the group intends to publish a use cases and requirements document on making emotion annotation usable in web browsers.

- Maintenance work

The Working Group will be maintaining the InkML and EMMA specifications; that is, responding to questions and requests on the public mailing list and issuing errata as needed. The Working Group will also consider publishing additional versions of the specifications, depending on such factors as feedback from the user community and any requirements generated for EMMA and InkML by the Multimodal Architecture and Interfaces work and EmotionML.

Success Criteria

For each document to advance to Proposed Recommendation, the group will produce a technical report with at least two independent and interoperable implementations for each required feature. The Working Group anticipates two interoperable implementations for each optional feature but may reduce the criteria for specific optional features.

Deliverables

The following documents are expected to become W3C Recommendations:

- Multimodal Architecture and Interfaces (currently LCWD)

- Emotion Markup Language (EmotionML) (currently LCWD)

- EMMA: Extensible MultiModal Annotation markup language Version 1.1 (currently Editor's Draft)

- Modality Components Registration & Discovery

The following document is a Working Group Note and is not expected to advance toward Recommendation:

- MMI Architecture Interoperability Test Report Note (currently Editor's Draft)

Milestones

This Working Group is chartered to last until 31 July 2013.

Here is a list of milestones identified at the time of re-chartering. Others may be added later at the discretion of the Working Group. The dates are for guidance only and subject to change.

Note: See changes from this initial schedule on the group home page.

| Specification | FPWD | LC | CR | PR | Rec |
|---|---|---|---|---|---|
| Multimodal Architecture and Interfaces | Completed | Completed | November 2011 | 3Q 2012 | 1Q 2013 |
| EMMA 1.1 | November 2011 | 3Q 2012 | 1Q 2013 | 4Q 2013 | 4Q 2013 |
| Modality Components Registration & Discovery | 1Q 2012 | 4Q 2012 | 2Q 2013 | 4Q 2013 | 1Q 2014 |
| EmotionML | Completed | Completed | March 2012 | September 2012 | November 2012 |

Dependencies

W3C Groups

These are W3C activities that may be asked to review documents produced by the Multimodal Interaction Working Group, or which may be involved in closer collaboration as appropriate to achieving the goals of the Charter.

- Cascading Style Sheets

- styling for multimodal applications

- Device APIs WG

- client-side APIs for developing Web Applications and Web Widgets that interact with device services

- Geolocation WG

- handling geolocation information of devices

- HTML Speech XG

- integrating speech technology in HTML5

- HTML WG

- HTML5 browser as Graphical User Interface

- Hypertext Coordination Group

- The Working Group will also liaise with the Web Application Formats Working Group via the Hypertext Coordination Group

- Internationalization

- support for human languages across the world

- Mobile Web Initiative

- browsing the Web from mobile devices

- Scalable Vector Graphics

- graphical user interfaces

- Semantic Web

- the role of metadata

- SYMM WG

- timing model for multimedia presentations

- Timed Text

- synchronized text and video

The MMI Architecture may include a video content server as a Modality Component, so collaboration on how to handle a Modality Component for video service would be beneficial to both groups.

The Working Group may also cooperate with two other Working Groups (Media Fragments, Media Annotations) in the Video in the Web activity.

- Voice Browsers

- voice interfaces

- WAI Protocols and Formats

- ensuring accessibility for Multimodal systems

- WAI User Agent Accessibility Guidelines Working Group

- accessibility of user interface to Multimodal systems

- Web and TV

- TV and other CE devices as Modality Components for Multimodal systems

- Web Applications

- Specifications for webapps, including standard APIs for client-side development, and a packaging format for installable webapps

- Web Services

- application messaging framework

- Forms

- separating forms into data models, logic and presentation

- XML Protocol

- XML based messaging

- Math

- InkML includes a subset of MathML functionality via the <mapping> element

Furthermore, the Multimodal Interaction Working Group expects to follow these W3C Recommendations:

- QA Framework: Specification Guidelines.

- Character Model for the World Wide Web 1.0: Fundamentals

- Architecture of the World Wide Web, Volume I

External Groups

This is an indication of external groups with complementary goals to the Multimodal Interaction activity. W3C has formal liaison agreements with some of them, e.g. VoiceXML Forum.

- 3GPP

- protocols and codecs relevant to multimodal applications

- DLNA

- open standards and widely available industry specifications for entertainment devices and home network

- ETSI

- work on human factors and command vocabularies

- IETF

- The SpeechSC working group has a dependency on EMMA for the MRCP v2 specification.

- ISO/IEC JTC 1/SC 37 Biometrics

- user authentication in multimodal applications

- OASIS BIAS Integration TC

- defining methods for using biometric identity assurance in transactional Web services and SOAs; having initial discussion on how EMMA could be used as a data format for biometrics

- VoiceXML Forum

- an industry association for VoiceXML

Participation

To be successful, the Multimodal Interaction Working Group is expected to have 10 or more active participants for its duration. Effective participation in the Multimodal Interaction Working Group is expected to consume one work day per week for each participant; two days per week for editors. The Multimodal Interaction Working Group will also allocate the necessary resources for building Test Suites for each specification.

In order to make rapid progress, the Multimodal Interaction Working Group consists of several subgroups, each working on a separate document. The Multimodal Interaction Working Group members may participate in one or more subgroups.

Participants are reminded of the Good Standing requirements of the W3C Process.

Experts from appropriate communities may also be invited to join the working group, following the provisions for this in the W3C Process.

Working Group participants are not obligated to participate in every work item; however, the Working Group as a whole is responsible for reviewing and accepting all work items.

For budgeting purposes, we may hold up to three full group face-to-face meetings per year if we believe them to be beneficial. The Working Group anticipates holding a face-to-face meeting in association with the technical plenary. The Chair will make Working Group meeting dates and locations available to the group in a timely manner according to the W3C Process. The Chair is also responsible for providing publicly accessible summaries of Working Group face-to-face meetings, which will be announced on www-multimodal@w3.org.

Communication

This group primarily conducts its work on the Member-only mailing list w3c-mmi-wg@w3.org (archive). Certain topics need coordination with external groups. The Chair and the Working Group can agree to discuss these topics on a public mailing list. The archived mailing list www-multimodal@w3.org is used for public discussion of W3C proposals for the Multimodal Interaction Working Group and for public feedback on the group's deliverables.

Information about the group (deliverables, participants, face-to-face meetings, teleconferences, etc.) is available from the Multimodal Interaction Working Group home page.

All proceedings of the Working Group (mail archives, teleconference minutes, face-to-face minutes) will be available to W3C Members. Summaries of face-to-face meetings will be sent to the public list.

Decision Policy

As explained in the Process Document (section 3.3), this group will seek to make decisions when there is consensus. When the Chair puts a question and observes dissent, after due consideration of different opinions, the Chair should record a decision (possibly after a formal vote) and any objections, and move on.

This charter is written in accordance with Section 3.4, Votes of the W3C Process Document and includes no voting procedures beyond what the Process Document requires.

Patent Policy

This Working Group operates under the W3C Patent Policy (5 February 2004 Version). To promote the widest adoption of Web standards, W3C seeks to issue Recommendations that can be implemented, according to this policy, on a Royalty-Free basis.

For more information about disclosure obligations for this group, please see the W3C Patent Policy Implementation.

About this Charter

This charter for the Multimodal Interaction Working Group has been created according to section 6.2 of the Process Document. In the event of a conflict between this document or the provisions of any charter and the W3C Process, the W3C Process shall take precedence.

The most important changes from the previous charter are:

- reduced Team staff resource (FTE %) from 30 to 20

- added description on the relationship with the HTML Speech Incubator Group

- removed EMMA 2.0 from the deliverables, and added EMMA 1.1 instead

- removed InkML from the deliverables because it has become a W3C Recommendation

- removed Multimodal Authoring from the deliverables, and added MMI Architecture Interoperability Test Report Note instead

- removed Modality Components Definition Notes from the deliverables, and added Modality Components Registration & Discovery instead

- updated the list of related organizations in dependencies

On 7 November 2013, this charter was extended until 31 March 2014.

Deborah Dahl, Chair, Multimodal Interaction Working Group

Kazuyuki Ashimura, Multimodal Interaction Activity Lead

Copyright © 2013 W3C ® (MIT, ERCIM, Keio, Beihang) Usage policies apply.

$Date: 2025/10/03 06:11:47 $