SIOC/Implementations

This page gives an overview of existing applications that use or implement SIOC.

Check against recent updates listed at State of the SIOC-o-sphere (#4). Possibly add SIOC for phpBB, IKHarvester, Tom Morris' Twitter2RDF, Fishtank, Tuukka Hastrup's IRC2RDF, BAETLE, etc.

Note for wiki editors: Many subsections of this page use transclusion to display short teasers of the applications' actual wiki pages.

Data Sources

SPARQL Endpoints

These are solutions that expose SIOC Data via SPARQL Endpoints.
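As a rough illustration of what querying such an endpoint involves, the sketch below builds a SPARQL query for SIOC posts and the corresponding GET request URL. The endpoint address `http://example.org/sparql` is a placeholder, and the assumption that posts carry a `dcterms:title` may not hold for every data source. No network request is made.

```python
from urllib.parse import urlencode, urlparse, parse_qs

# Hypothetical endpoint URL, used for illustration only.
ENDPOINT = "http://example.org/sparql"

# List up to ten SIOC posts with their titles.
# Assumes titles are published as dcterms:title.
QUERY = """
PREFIX sioc: <http://rdfs.org/sioc/ns#>
PREFIX dcterms: <http://purl.org/dc/terms/>
SELECT ?post ?title
WHERE {
  ?post a sioc:Post ;
        dcterms:title ?title .
}
LIMIT 10
"""


def build_request_url(endpoint, query):
    """Return a GET URL that passes the query text in the 'query' parameter."""
    return endpoint + "?" + urlencode({"query": query.strip()})


url = build_request_url(ENDPOINT, QUERY)
```

The resulting URL can be fetched with any HTTP client; the result format depends on the endpoint and on the `Accept` header sent with the request.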

OpenLink Data Spaces (ODS) Endpoints

OpenLink Data Spaces/Sparql Endpoint

Exporters

These are solutions that export plain old RDF graphs of SIOC Data.

SIOC plugins export the information about the content and structure of blog and forum sites in a machine readable form. Here we list all the existing SIOC export plugins and tools.

Such plugins or exporters can be developed for any blog engine that you want to export the information from. Plugins can be built on their own or can use a SIOC Export API, the latter being the recommended approach.

Most of the exporters implement a SIOC auto-discovery mechanism, which allows automated tools such as the Semantic Radar plugin for Firefox to identify webpages that contain SIOC metadata.
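A minimal sketch of how a tool might perform this auto-discovery is shown below. It assumes the page advertises its SIOC data with a `<link rel="meta" type="application/rdf+xml">` element in the HTML head, which is a common convention but is not specified on this page. The class name, function name and sample page are hypothetical.

```python
from html.parser import HTMLParser


class RdfLinkFinder(HTMLParser):
    """Collects (title, href) pairs of <link> elements that point to RDF data."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        # Assumed convention: rel="meta" plus the RDF/XML media type.
        if ("meta" in rel_values
                and attributes.get("type") == "application/rdf+xml"
                and attributes.get("href")):
            self.links.append((attributes.get("title"), attributes["href"]))


def find_rdf_links(html):
    """Return all advertised RDF documents found in an HTML page."""
    finder = RdfLinkFinder()
    finder.feed(html)
    return finder.links


# Hypothetical sample page.
SAMPLE = """<html><head>
<link rel="meta" type="application/rdf+xml" title="SIOC" href="http://example.org/blog/sioc.rdf" />
<link rel="stylesheet" href="style.css" />
</head><body></body></html>"""

links = find_rdf_links(SAMPLE)
```

A tool would then fetch each discovered `href` and inspect the RDF document for SIOC terms.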

WordPress

DotClear

Drupal

b2evolution

Talk Digger

SWAML

OpenLink Data Spaces (ODS)

vBulletin

APIs

We aim to overcome a "chicken-and-egg" problem (no applications without data, and no data without applications) by making it easy to generate and use SIOC data. Since most blog and forum engines already have RSS export functionality, and the majority of them are based on open source software or can be extended by using plugins, it is straightforward to modify existing export functions or create plugins to generate metadata conforming to the SIOC ontology.

A SIOC Export API makes the development of such SIOC plugins and exporters as easy as possible, because developers do not have to change their plugin as the SIOC format evolves: all the changes are made once, at the API level. Whether or not it uses the API, an exporter or plugin uses the internal logic (functions, classes, etc.) of the blog engine, or else direct database access, to get information about the weblog, and then uses the SIOC API or custom code to produce SIOC export data.
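The division of labour described above can be sketched as follows. This is not the interface of any of the existing SIOC export APIs; `export_post` and its parameters are hypothetical, and the output is N-Triples for simplicity. The plugin supplies values taken from the blog engine, and the API-level function decides which SIOC terms to emit.

```python
SIOC = "http://rdfs.org/sioc/ns#"
DCTERMS = "http://purl.org/dc/terms/"
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"


def uri(value):
    """Format a URI as an N-Triples term."""
    return "<" + value + ">"


def literal(text):
    """Format a plain string as an N-Triples literal, escaping special characters."""
    escaped = (text.replace("\\", "\\\\").replace('"', '\\"')
                   .replace("\n", "\\n").replace("\r", "\\r"))
    return '"' + escaped + '"'


def export_post(post, forum, creator, title, content):
    """Hypothetical API-level function: describe one post as SIOC data."""
    triples = [
        (uri(post), uri(RDF_TYPE), uri(SIOC + "Post")),
        (uri(post), uri(SIOC + "has_container"), uri(forum)),
        (uri(post), uri(SIOC + "has_creator"), uri(creator)),
        (uri(post), uri(DCTERMS + "title"), literal(title)),
        (uri(post), uri(SIOC + "content"), literal(content)),
    ]
    return "\n".join("%s %s %s ." % triple for triple in triples)


# Plugin side: values would come from the blog engine or its database.
doc = export_post(
    "http://example.org/post/1",
    "http://example.org/forum",
    "http://example.org/user/alice",
    'Hello "SIOC"',
    "First line\nsecond line",
)
```

If the vocabulary changes, only `export_post` needs updating; the plugin code that calls it stays the same.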

At the moment PHP, Java and Perl APIs are available. There is a need for SIOC export APIs in other languages and frameworks, such as Ruby on Rails (as used in the Typo blog engine).

PHP

Java

A SIOC API for Java has been created, based on semweb4j. For each object in the SIOC ontology, this API generates classes with links between the objects realised as Java properties.

Perl

Version 1.0 of a SIOC API for Perl has been released on CPAN. A description of the project itself is available in German.

Data Consumers

Crawlers

Browsers - Overview

Since people can quite easily create SIOC data from their weblog using the described exporters or the API introduced in the previous chapter, we will now consider different ways to query and view this data.

SIOC browsers allow people to browse and receive additional information from SIOC data sources or data stores. Browsers can work in two modes, on-the-fly mode and crawler mode, or can use a combination of both. The former displays the SIOC data received from a community site (thus providing a uniform interface to all SIOC-enabled sites), while the latter stores SIOC data in a repository, allowing one to make more complicated queries via SPARQL, the leading Semantic Web query language.
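The difference between the two modes can be sketched with a toy example. Everything here is hypothetical: triples are plain Python tuples, `render` stands for on-the-fly display of one site's data, and the pattern matching in `TripleStore.match` stands in for a SPARQL query against a real repository.

```python
HAS_CREATOR = "http://rdfs.org/sioc/ns#has_creator"
TITLE = "http://purl.org/dc/terms/title"
ALICE = "http://example.org/user/alice"

# Hypothetical data already fetched from two SIOC-enabled sites.
SITE_A = [
    ("http://a.example/post/1", HAS_CREATOR, ALICE),
    ("http://a.example/post/1", TITLE, "Hello"),
]
SITE_B = [
    ("http://b.example/post/7", HAS_CREATOR, ALICE),
]


def render(triples):
    """On-the-fly mode: display the data of a single site as it arrives."""
    return ["%s %s %s" % triple for triple in triples]


class TripleStore:
    """Crawler mode: accumulate data from many sites, then query across them."""

    def __init__(self):
        self.triples = set()

    def add_document(self, triples):
        self.triples.update(triples)

    def match(self, s=None, p=None, o=None):
        """Return stored triples matching the pattern; None matches anything."""
        return sorted(
            t for t in self.triples
            if (s is None or t[0] == s)
            and (p is None or t[1] == p)
            and (o is None or t[2] == o)
        )


store = TripleStore()
store.add_document(SITE_A)
store.add_document(SITE_B)

# A cross-site question that on-the-fly mode cannot answer directly.
posts_by_alice = [t[0] for t in store.match(p=HAS_CREATOR, o=ALICE)]
```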

SIOC data can also be displayed in a graphical format – as a timeline of content posts or as a graphical network of relations between sites, posts and topics. These graphical interfaces offer another user-friendly way to view SIOC data and the crawler mode browser may be extended to provide both a text and graphical view of the same information. Moreover, as SIOC offers an open data format using a formal description in RDF, everyone can create their own tools as appropriate to their needs. Thus, the browsers provided here are an example of what can be done with SIOC, and give a nice overview of its different capabilities.

SIOC Browser

Buxon

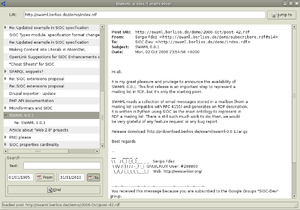

SWAML 0.0.3 included a sioc:Forum browser called Buxon, which is now an independent package.

Written in PyGTK, it reads sioc:Forum RDF files and shows them as a tree of threads; a screenshot can be seen in Figure 5.

SIOC Explorer

Siocwave

Importers

Wordpress Importer

Utilities

Semantic Radar

Ping the Semantic Web

PingTheSemanticWeb.com is a web service archiving the location of recently created/updated RDF documents on the Web. If one of those documents is updated, its author can notify the service that the document has been updated by pinging it with the URL of the document.

PingTheSemanticWeb.com is used by crawlers and other software agents to learn when and where the most recently updated RDF documents can be found. They request a list of recently updated documents as a starting point for crawling the Semantic Web.

You can use the URL of an HTML or RDF document when pinging the PingTheSemanticWeb.com web service. If the service finds that the URL points to an HTML document, it will check whether it can find a link to an RDF document. If it finds one, it will follow the link and check the RDF document to see if SIOC, DOAP and/or FOAF elements are defined in it. If the service finds that the RDF document has SIOC, DOAP and/or FOAF elements, it will archive the ping and make it available to crawlers in the export files. Otherwise it will archive it in the general RDF pings list.
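The final classification step described above might look roughly like the sketch below. It is not the service's actual code: `classify_ping` is a hypothetical name, and checking for namespace URIs with a substring test is a simplification of parsing the RDF document properly.

```python
# Namespace URIs of the three vocabularies the service looks for.
NAMESPACES = {
    "SIOC": "http://rdfs.org/sioc/ns#",
    "DOAP": "http://usefulinc.com/ns/doap#",
    "FOAF": "http://xmlns.com/foaf/0.1/",
}


def classify_ping(rdf_text):
    """Return the names of the ping lists an RDF document would be archived in.

    Simplification: a vocabulary counts as used if its namespace URI appears
    anywhere in the document text.
    """
    found = [name for name, ns in NAMESPACES.items() if ns in rdf_text]
    # Documents without any of the three vocabularies go to the general list.
    return found or ["RDF"]


# Hypothetical document that declares the SIOC and FOAF namespaces.
SAMPLE_RDF = """<rdf:RDF
  xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#"
  xmlns:sioc="http://rdfs.org/sioc/ns#"
  xmlns:foaf="http://xmlns.com/foaf/0.1/">
</rdf:RDF>"""

lists = classify_ping(SAMPLE_RDF)
```

For an HTML ping, the RDF document would first have to be located through the page's link to it, and then passed to the same classification step.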