Sir Tim’s dream … in the building industry?

DREAM – 2001

“I have a dream for the Web [in which computers] become capable of analyzing all the data on the Web – the content, links, and transactions between people and computers.”

I saw in this dream an opportunity to really improve the building industry, and eventually came up with the basics of the scenario described below. It seems to me that W3C works well with Big Data collected by large institutions and businesses, whereas most small and medium-sized enterprises deal with large amounts of small data in different formats and from different sources. The dream does not really work unless all the data on the web can be analysed, and it cannot be realised unless those who know what data is needed are intimately involved with its selection and analysis.

So my idea in posting the scenario is to see whether there is any support for developing what I suggest, or whether there are other suggestions for how to enable building industry computers (among others) to analyse data on the web. Personally, I feel we owe it to all the Internet Pioneers to use their gifts to automate much more for much more of society.

SCENARIO

John is part of the design team for a large condominium.

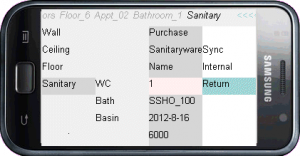

He uses his phone to upload the project file to the registry so that he can use its assembler to create a machine in his phone’s display.

He is in the studio so he can more conveniently display the machine’s output on the big screen on the wall. He wants to double check on his selections for sanitary-ware in a typical bathroom.

He had previously marked up those values returned by the registry’s analyzer that were clearly not appropriate. He had then rerun the rest a number of times, adjusting criteria for price, material, delivery times and sustainability parameters, before settling on a combination that seemed to work well enough within the space, the budget, the quality expected by the developer and his company’s reputation for good design.

REGISTRATION

This scenario relies on data and options being collected from multiple sources. Collection is via market-oriented registration rather than algorithms. Processing is by freely available universal online assemblers and analyzers rather than software applications.

The assembler is a simple application that accepts a JSON file (specially punctuated plain text), converts it into hyperlinks that automatically rearrange themselves after each selection in accordance with associations set in the file, and returns the amended file at the end of the session. Although made with the same code as a web page, the display is more machine than document, just as an eBanking web page is as much part of a banking machine as the screen of an ATM.
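To make the assembler idea a little more concrete, here is a minimal sketch in Python. It is not the actual assembler: the file layout (`items`, `label`, `associated`, `selections`) and the function names are assumptions invented for illustration. It only shows the general pattern of reading a JSON file, reordering links after a selection according to associations set in the file, and handing back the amended file.

```python
import json
from html import escape

# Hypothetical project file; the real file layout is not described in this post.
PROJECT_JSON = """
{
  "selections": [],
  "items": {
    "bathroom": {"label": "Typical bathroom", "associated": ["basin", "wc", "shower"]},
    "basin": {"label": "Basin", "associated": ["tap"]},
    "wc": {"label": "WC", "associated": []},
    "shower": {"label": "Shower", "associated": ["tap"]},
    "tap": {"label": "Tap", "associated": []}
  }
}
"""


def arrange(project, selected):
    """Order item keys so those associated with `selected` come first."""
    items = project["items"]
    related = list(items[selected]["associated"])
    # Remaining items keep their order from the file.
    others = [key for key in items if key != selected and key not in related]
    return related + others


def select(project, key):
    """Record a selection in the file data and return the new link order."""
    project["selections"].append(key)
    return arrange(project, key)


def render_links(project, order):
    """Turn item keys into simple HTML hyperlinks."""
    return [
        f'<a href="#{escape(key)}">{escape(project["items"][key]["label"])}</a>'
        for key in order
    ]


project = json.loads(PROJECT_JSON)
order = select(project, "bathroom")
links = render_links(project, order)

# At session end the amended file (now holding the selection) is returned.
amended = json.dumps(project, indent=2)
```

In this sketch, selecting the bathroom moves the basin, WC and shower links ahead of the unrelated tap link, and the returned file remembers that the bathroom was selected.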

Rather than return thousands of web site addresses, the analyzer (still being developed) extracts and returns unique options from the data links provided by its registrants. The analyzer inherently builds and grades lists of prompts and options used in particular contexts within the industry. The lists are used for registration as well as for searching, filtering and flagging returns.
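As a rough illustration of what "extracting unique options and grading them" might look like, here is a small Python sketch. The analyzer itself is still being developed, so everything here is an assumption: the registrant names, the sample data (standing in for content fetched from registrants' data links), and the grading rule, which simply counts how many registrants offer each option for a given prompt.

```python
from collections import Counter, defaultdict

# Hypothetical data standing in for what registrants' data links would return.
REGISTRANT_DATA = {
    "supplier-a": {"basin material": ["vitreous china", "stainless steel"]},
    "supplier-b": {"basin material": ["Vitreous China", "resin"]},
    "supplier-c": {"basin material": ["vitreous china"], "wc type": ["wall hung"]},
}


def analyze(registrant_data):
    """Return, per prompt, the unique options graded by how many registrants use them."""
    counts = defaultdict(Counter)
    for options_by_prompt in registrant_data.values():
        for prompt, options in options_by_prompt.items():
            # Normalise spelling so each registrant counts once per option.
            for option in {o.strip().lower() for o in options}:
                counts[prompt][option] += 1
    # Most widely used options first; ties are ordered alphabetically.
    return {
        prompt: sorted(counter.items(), key=lambda pair: (-pair[1], pair[0]))
        for prompt, counter in counts.items()
    }


graded = analyze(REGISTRANT_DATA)
```

Here the prompt "basin material" yields three unique options, with "vitreous china" graded highest because all three registrants list it. A real analyzer would presumably grade on richer evidence than a simple count.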
