C.3.2.1.1 Temporal

Playing temporal fragments out-of-context

SMIL allows you to play only a fragment of the video by using the clipBegin and clipEnd attributes. How this is implemented, though, is out of scope for the SMIL spec; for HTTP-based URLs, implementations may well fetch the whole media item and cut it up locally:

<video xml:id="toc1" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" clipBegin="12.3s" clipEnd="21.16s" />
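
As a rough illustration of what the two attributes select, the following Python sketch computes the length of the clip from a pair of clipBegin and clipEnd values. It only handles simple timecount values such as "12.3s", "2min" or "500ms"; the helper names `timecount_to_seconds` and `clip_duration` are hypothetical and not part of SMIL or of any library.

```python
import re
from decimal import Decimal

# Seconds per unit for timecount metrics; a bare number is treated as seconds.
_UNIT_SECONDS = {
    "h": Decimal(3600),
    "min": Decimal(60),
    "s": Decimal(1),
    "ms": Decimal("0.001"),
    None: Decimal(1),
}

_TIMECOUNT = re.compile(r"^(\d+(?:\.\d+)?)(h|min|s|ms)?$")


def timecount_to_seconds(value):
    """Convert a timecount value such as "12.3s" to seconds (hypothetical helper)."""
    match = _TIMECOUNT.match(value.strip())
    if match is None:
        raise ValueError(f"unsupported clock value: {value!r}")
    number, unit = match.groups()
    return Decimal(number) * _UNIT_SECONDS[unit]


def clip_duration(clip_begin, clip_end):
    """Return the length in seconds of the clip selected by clipBegin/clipEnd."""
    return timecount_to_seconds(clip_end) - timecount_to_seconds(clip_begin)


print(clip_duration("12.3s", "21.16s"))  # 8.86
```

Decimal arithmetic is used so that the subtraction does not pick up binary floating-point noise.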

It is possible to use different time schemes, which give frame-accurate clipping when used correctly:

<video xml:id="toc2" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" clipBegin="npt=12.3s" clipEnd="npt=21.16s" />

<video xml:id="toc3" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" clipBegin="smpte=00:00:12:09" clipEnd="smpte=00:00:21:05" />
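
The npt and smpte values in the two examples above describe the same clip only under an assumed frame rate. The Python sketch below shows the conversion, assuming 30 frames per second without drop-frame correction; this frame rate is an assumption that happens to reproduce the values above, and the function name `seconds_to_smpte` is hypothetical.

```python
def seconds_to_smpte(seconds, fps=30):
    """Format a time in seconds as hh:mm:ss:ff, rounding to the nearest frame.

    Assumes a non-drop-frame timecode at an integer frame rate.
    """
    total_frames = round(seconds * fps)
    frames = total_frames % fps
    total_seconds = total_frames // fps
    hours, remainder = divmod(total_seconds, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}:{frames:02d}"


print(seconds_to_smpte(12.3))   # 00:00:12:09
print(seconds_to_smpte(21.16))  # 00:00:21:05
```

Note that 21.16s does not fall exactly on a frame boundary at this rate (it is frame 4.8 of that second), so the smpte form is the nearest frame rather than an exact equivalent.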

Adding metadata to such a fragment has been supported since SMIL 3.0:

<video xml:id="toc4" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" clipBegin="12.3s" clipEnd="21.16s">

<metadata>

<rdf:.... xmlns:rdf="....">

....

</rdf:...>

</metadata>

</video>

Referring to temporal fragments in-context

The following code plays back the whole video and, during the interesting section, allows the user to click on it to follow a link:

<video xml:id="tic1" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" >

<area begin="12.3s" end="21.16s" href="http://www.example.com" />

</video>

It is also possible to have a link to the relevant section of the video. Suppose the following SMIL code is located in http://www.example.com/smilpresentation:

<video xml:id="tic2" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" >

<area xml:id="tic2area" begin="12.3s" end="21.16s"/>

</video>

Now, we can link to the media fragment using the following URI:

http://www.example.com/smilpresentation#tic2area

Jumping to #tic2area will start the video at the beginning of the interesting section. The presentation will not stop at the end of that section, however; it will continue playing.
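
To make the resolution step concrete, the following Python sketch splits the URI into the presentation URL and the fragment identifier, then looks up the element carrying that xml:id and reads its begin and end times. It is only an illustration of the lookup, not how a SMIL player is required to work; the SMIL text is embedded in the script instead of being fetched, and `resolve_fragment` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urldefrag

# ElementTree exposes xml:id under the predefined XML namespace.
XML_ID = "{http://www.w3.org/XML/1998/namespace}id"

# Stand-in for the document served at http://www.example.com/smilpresentation.
SMIL_SNIPPET = """
<video xml:id="tic2" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4">
  <area xml:id="tic2area" begin="12.3s" end="21.16s"/>
</video>
"""


def resolve_fragment(uri, smil_text):
    """Return (document URL, element tag, begin, end) for the URI's fragment."""
    document_url, fragment_id = urldefrag(uri)
    root = ET.fromstring(smil_text)
    for element in root.iter():
        if element.get(XML_ID) == fragment_id:
            return document_url, element.tag, element.get("begin"), element.get("end")
    return document_url, None, None, None


print(resolve_fragment("http://www.example.com/smilpresentation#tic2area", SMIL_SNIPPET))
```

A player following the link would seek to the returned begin time; the end time marks where the area stops being active, not where playback stops.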

C.3.2.1.2 Spatial

Playing spatial fragments out-of-context

SMIL 3.0 allows playing back only a specific rectangle of the media. The following construct will play back the center quarter of the video:

<video xml:id="soc1" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" panZoom="25%, 25%, 50%, 50%"/>

Assuming the source video is 640x480, the following line plays back the same region:

<video xml:id="soc2" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" panZoom="160, 120, 320, 240" />
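
The equivalence between the percentage and pixel forms can be checked with a short Python sketch. It assumes the panZoom value is a comma-separated list of left, top, width and height, with percentages taken relative to the media width (left, width) or height (top, height); `pan_zoom_to_pixels` is a hypothetical helper name.

```python
def pan_zoom_to_pixels(pan_zoom, media_width, media_height):
    """Resolve a panZoom string to (left, top, width, height) in pixels."""
    parts = [part.strip() for part in pan_zoom.split(",")]
    if len(parts) != 4:
        raise ValueError("panZoom needs four values: left, top, width, height")
    # left and width scale with the media width, top and height with its height.
    extents = (media_width, media_height, media_width, media_height)
    pixels = []
    for part, extent in zip(parts, extents):
        if part.endswith("%"):
            pixels.append(float(part[:-1]) * extent / 100)
        else:
            pixels.append(float(part))
    return tuple(pixels)


print(pan_zoom_to_pixels("25%, 25%, 50%, 50%", 640, 480))  # (160.0, 120.0, 320.0, 240.0)
```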

This construct can be combined with the temporal clipping.

It is possible to change the panZoom rectangle over time. The following code fragment will show the full video for 10 seconds, then zoom in on the center quarter over 5 seconds, then show that region for the rest of the duration. How the video is scaled or positioned within its region depends on the SMIL layout, which is out of scope for this investigation.

<video xml:id="soc3" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" panZoom="0, 0, 640, 480">

<animate begin="10s" dur="5s" fill="freeze" attributeName="panZoom" to="160, 120, 320, 240" />

</video>
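
The effect of the animation above on the visible rectangle can be sketched in Python. The sketch assumes linear interpolation between the start and end rectangles and a frozen end value, which matches the fill="freeze" setting; the function name `pan_zoom_at` and its parameters are hypothetical, and times are measured from the start of the video element.

```python
def pan_zoom_at(t, start_rect, end_rect, begin=10.0, dur=5.0):
    """Return the (left, top, width, height) rectangle shown at time t seconds."""
    if t <= begin:
        return tuple(float(v) for v in start_rect)
    if t >= begin + dur:
        # fill="freeze": the final value stays in effect after the animation ends.
        return tuple(float(v) for v in end_rect)
    progress = (t - begin) / dur
    return tuple(s + (e - s) * progress for s, e in zip(start_rect, end_rect))


full = (0, 0, 640, 480)
center = (160, 120, 320, 240)
print(pan_zoom_at(12.5, full, center))  # (80.0, 60.0, 480.0, 360.0)
```

Halfway through the animation the rectangle is midway between the full frame and the center quarter, so the zoom appears to move toward the center at a constant rate.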

Referring to spatial fragments in-context

The following bit of code will enable the top-right quarter of the video to be clicked to follow a link. Note the difference in the way the rectangle is specified (left, top, right, bottom) when compared to panZoom (left, top, width, height). This is an unfortunate side-effect of this attribute being compatible with HTML and panZoom being compatible with SVG.

<video xml:id="sic1" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" >

<area shape="rect" coords="50%, 0%, 100%, 50%" href="http://www.example.com" />

</video>
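
Because the two attributes use different conventions, converting between them is easy to get wrong. The Python sketch below turns a rect coords value (left, top, right, bottom) into the equivalent panZoom value (left, top, width, height). It assumes all four values use the same unit, either all percentages or all pixels; `coords_to_pan_zoom` is a hypothetical helper name.

```python
def coords_to_pan_zoom(coords):
    """Convert "left, top, right, bottom" to "left, top, width, height"."""
    parts = [part.strip() for part in coords.split(",")]
    if len(parts) != 4:
        raise ValueError("rect coords need four values: left, top, right, bottom")
    is_percent = [part.endswith("%") for part in parts]
    if any(is_percent) and not all(is_percent):
        raise ValueError("mixed units are not handled in this sketch")
    suffix = "%" if all(is_percent) else ""
    left, top, right, bottom = (float(part.rstrip("%")) for part in parts)
    width, height = right - left, bottom - top
    return ", ".join(f"{value:g}{suffix}" for value in (left, top, width, height))


print(coords_to_pan_zoom("50%, 0%, 100%, 50%"))  # 50%, 0%, 50%, 50%
```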

Other shapes are possible, as in HTML and CSS. The spatial and temporal constructs can be combined. The spatial coordinates can be animated, as for panZoom.

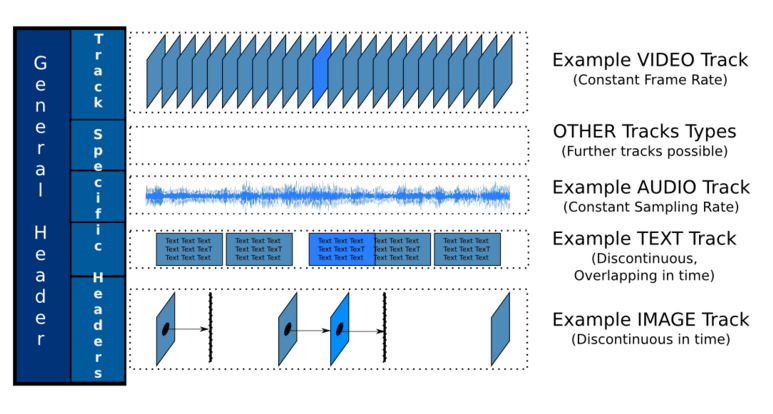

C.3.2.1.3 Track

SMIL has no way to selectively enable or disable tracks in the video. It only provides a general parameter mechanism, which could conceivably be used to communicate this information to a renderer, but this would make the document non-portable. Moreover, no such implementations are known.

<video xml:id="st1" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" >

<param name="jacks-remove-track" value="audio" />

</video>

C.3.2.1.4 Named

SMIL has no way to show named fragments in the base material out-of-context. It has no support for referring to named fragments in-context either, but it does have support for referring to "media markers" (named points in time in the media) if the underlying media format supports them. However, no such implementations are known:

<video xml:id="nf1" src="http://www.w3.org/2008/WebVideo/Fragments/media/fragf2f.mp4" >

<area begin="nf1.marker(jack-frag-begin)" end="nf1.marker(jack-frag-end)" href="http://www.example.com" />

</video>