1. Introduction

As Virtual Reality and Augmented Reality become more prevalent, native APIs are introducing new features that give access to more detailed information about the environment in which the user is located. The Depth Sensing API brings one such capability to the WebXR Device API, allowing authors of WebXR-powered experiences to obtain information about the distance from the user’s device to the real world geometry in the user’s environment.

This document assumes readers' familiarity with WebXR Device API and WebXR Augmented Reality Module specifications, as it builds on top of them to provide additional features to XRSessions.

1.1. Terminology

This document uses the acronyms AR to signify Augmented Reality, and VR to signify Virtual Reality.

This document uses terms like "depth buffer", "depth buffer data" and "depth data" interchangeably when referring to an array of bytes containing depth information, either returned by the XR device, or returned by the API itself. More information about the specific contents of the depth buffer can be found in data and texture entries of the specification.

This document uses the term normalized view coordinates when referring to a coordinate system that has an origin in the top left corner of the view, with X axis growing to the right, and Y axis growing downward.

2. Initialization

2.1. Feature descriptor

Applications can request that depth sensing be enabled on an XRSession by passing an appropriate feature descriptor. This module introduces a new string, depth-sensing, as a valid feature descriptor for the depth sensing feature.

A device is capable of supporting the depth sensing feature if the device exposes native depth sensing capability. The inline XR device MUST NOT be treated as capable of supporting the depth sensing feature.

The depth sensing feature is subject to feature policy and requires "xr-spatial-tracking" policy to be allowed on the requesting document’s origin.

2.2. Intended data usage and data formats

    enum XRDepthUsage {
      "cpu-optimized",
      "gpu-optimized",
    };

- A usage of "cpu-optimized" indicates that the depth data is intended to be used on the CPU, by interacting with the XRCPUDepthInformation interface.
- A usage of "gpu-optimized" indicates that the depth data is intended to be used on the GPU, by interacting with the XRWebGLDepthInformation interface.

    enum XRDepthDataFormat {
      "luminance-alpha",
      "float32"
    };

- A data format of "luminance-alpha" indicates that items in the depth data buffers obtained from the API are 2-byte unsigned integer values.
- A data format of "float32" indicates that items in the depth data buffers obtained from the API are 4-byte floating point values.

The following table summarizes the ways various data formats can be consumed:

| Data format | GLenum value equivalent | Size of depth buffer entry | Usage on CPU | Usage on GPU |
|---|---|---|---|---|
| "luminance-alpha" | LUMINANCE_ALPHA | 2 bytes | Interpret data as Uint16Array | Inspect Luminance and Alpha channels to reassemble single value. |
| "float32" | R32F | 4 bytes | Interpret data as Float32Array | Inspect Red channel and use the value. |
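
As a minimal sketch of the "Usage on CPU" column, the helper below wraps a raw depth ArrayBuffer in the typed array that matches the data format. The name `viewDepthBuffer` is hypothetical and not part of the API, and the buffer used here is synthetic.

```javascript
// Hypothetical helper (not part of the API): picks the typed array view
// that matches an XRDepthDataFormat value, following the table above.
function viewDepthBuffer(buffer, dataFormat) {
  switch (dataFormat) {
    case "luminance-alpha":
      return new Uint16Array(buffer); // 2 bytes per entry
    case "float32":
      return new Float32Array(buffer); // 4 bytes per entry
    default:
      throw new TypeError(`Unknown depth data format: ${dataFormat}`);
  }
}

// Usage with a synthetic 2x2 buffer of 16-bit entries.
const sampleBuffer = new Uint16Array([500, 1000, 1500, 2000]).buffer;
const sampleEntries = viewDepthBuffer(sampleBuffer, "luminance-alpha");
console.log(sampleEntries.length, sampleEntries[2]);
```

Creating a view does not copy or modify the underlying buffer, so the same ArrayBuffer can still be uploaded to a texture afterwards.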

2.3. Session configuration

    dictionary XRDepthStateInit {
      required sequence<XRDepthUsage> usagePreference;
      required sequence<XRDepthDataFormat> dataFormatPreference;
    };

The usagePreference is an ordered sequence of XRDepthUsages, used to describe the desired depth sensing usage for the session.

The dataFormatPreference is an ordered sequence of XRDepthDataFormats, used to describe the desired depth sensing data format for the session.

The XRSessionInit dictionary is expanded by adding a new depthSensing key. The key is optional in XRSessionInit, but it MUST be provided when depth-sensing is included in either requiredFeatures or optionalFeatures.

    partial dictionary XRSessionInit {
      XRDepthStateInit depthSensing;
    };

If the depth sensing feature is a required feature but the application did not supply a depthSensing key, the user agent MUST treat this as an unresolved required feature and reject the requestSession(mode, options) promise with a NotSupportedError. If it was requested as an optional feature, the user agent MUST ignore the feature request and not enable depth sensing on the newly created session.

If the depth sensing feature is a required feature but the result of finding supported configuration combination algorithm invoked with usagePreference and dataFormatPreference is null, the user agent MUST treat this as an unresolved required feature and reject the requestSession(mode, options) promise with a NotSupportedError. If it was requested as an optional feature, the user agent MUST ignore the feature request and not enable depth sensing on the newly created session.

When an XRSession is created with depth sensing enabled, the depthUsage and depthDataFormat attributes MUST be set to the result of finding supported configuration combination algorithm invoked with usagePreference and dataFormatPreference.

In order to find supported configuration combination given usagePreference and dataFormatPreference sequences, the user agent MUST run the following algorithm:

- Let selectedUsage be null.
- For each usage in usagePreference sequence, perform the following steps:
  - If usage is not considered a supported depth sensing usage by the native depth sensing capabilities of the device, continue to the next entry.
  - Set selectedUsage to usage and abort these nested steps.
- If selectedUsage is null, return null and abort these steps.
- Let selectedDataFormat be null.
- For each dataFormat in dataFormatPreference, perform the following steps:
  - If the selectedUsage, dataFormat pair is not considered a supported depth sensing usage and data format combination by the native depth sensing capabilities of the device, continue to the next entry.
  - Set selectedDataFormat to dataFormat and abort these nested steps.
- If selectedDataFormat is null, return null and abort these steps.
- Return selectedUsage, selectedDataFormat.
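
The selection steps can be sketched in JavaScript as follows. This is an illustration only: the `device` object and its `isUsageSupported` and `isCombinationSupported` callbacks are hypothetical stand-ins for the native depth sensing capabilities of the device, and the returned object shape is an assumption.

```javascript
// Sketch of the selection steps. `device.isUsageSupported` and
// `device.isCombinationSupported` are hypothetical callbacks standing in
// for the device's native depth sensing capabilities.
function findSupportedConfiguration(usagePreference, dataFormatPreference, device) {
  // First supported usage in preference order, or null.
  const selectedUsage =
    usagePreference.find((usage) => device.isUsageSupported(usage)) ?? null;
  if (selectedUsage === null) return null;

  // First data format that is supported together with the selected usage.
  const selectedDataFormat =
    dataFormatPreference.find((dataFormat) =>
      device.isCombinationSupported(selectedUsage, dataFormat)) ?? null;
  if (selectedDataFormat === null) return null;

  return { usage: selectedUsage, dataFormat: selectedDataFormat };
}

// A made-up device that only offers CPU access with 16-bit entries.
const fakeDevice = {
  isUsageSupported: (usage) => usage === "cpu-optimized",
  isCombinationSupported: (usage, dataFormat) =>
    usage === "cpu-optimized" && dataFormat === "luminance-alpha",
};

const selectedConfig = findSupportedConfiguration(
  ["gpu-optimized", "cpu-optimized"],
  ["float32", "luminance-alpha"],
  fakeDevice);
console.log(selectedConfig);
```

Note that the usage is fixed before any data format is considered, so a usage listed earlier wins even if a later usage would have matched a more preferred data format.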

Note: user agents are not required to support all existing combinations of usages and data formats. This is intended to allow them to provide data in an efficient way, and depends on the underlying platforms. This decision places additional burden on the application developers - it could be mitigated by creation of libraries hiding the API complexity, possibly sacrificing performance.

The user agent that is capable of supporting the depth sensing API MUST support at least one XRDepthUsage mode. The user agent that is capable of supporting the depth sensing API MUST support "luminance-alpha" data format, and MAY support other formats.

    const session = await navigator.xr.requestSession("immersive-ar", {
      requiredFeatures: ["depth-sensing"],
      depthSensing: {
        usagePreference: ["cpu-optimized", "gpu-optimized"],
        dataFormatPreference: ["luminance-alpha", "float32"],
      },
    });

    partial interface XRSession {
      readonly attribute XRDepthUsage depthUsage;
      readonly attribute XRDepthDataFormat depthDataFormat;
    };

The depthUsage describes depth sensing usage with which the session was configured. If this attribute is accessed on a session that does not have depth sensing enabled, the user agent MUST throw an InvalidStateError.

The depthDataFormat describes depth sensing data format with which the session was configured. If this attribute is accessed on a session that does not have depth sensing enabled, the user agent MUST throw an InvalidStateError.
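
Because both attributes throw on a session without depth sensing, an application that requested the feature as optional may want to guard the access. The sketch below shows one way to do that; `readDepthConfiguration` is a hypothetical helper name, and the two session objects are mocks used only to exercise it.

```javascript
// Returns the configuration the session was created with, or null when
// depth sensing is not enabled. `readDepthConfiguration` is a hypothetical
// helper, not part of the API.
function readDepthConfiguration(session) {
  try {
    return { usage: session.depthUsage, dataFormat: session.depthDataFormat };
  } catch (error) {
    if (error.name === "InvalidStateError") {
      return null; // Depth sensing was not enabled on this session.
    }
    throw error; // Anything else is unexpected; let it propagate.
  }
}

// Mock sessions standing in for real XRSession objects.
const mockEnabledSession = {
  depthUsage: "cpu-optimized",
  depthDataFormat: "luminance-alpha",
};
const mockDisabledSession = {
  get depthUsage() {
    const error = new Error("Depth sensing is not enabled");
    error.name = "InvalidStateError";
    throw error;
  },
};

console.log(readDepthConfiguration(mockEnabledSession));
console.log(readDepthConfiguration(mockDisabledSession));
```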

3. Obtaining depth data

3.1. XRDepthInformation

    [SecureContext, Exposed=Window]
    interface XRDepthInformation {
      readonly attribute unsigned long width;
      readonly attribute unsigned long height;

      [SameObject] readonly attribute XRRigidTransform normDepthBufferFromNormView;
      readonly attribute float rawValueToMeters;
    };

The width attribute contains width of the depth buffer (i.e. number of columns).

The height attribute contains height of the depth buffer (i.e. number of rows).

The normDepthBufferFromNormView attribute contains an XRRigidTransform that needs to be applied when indexing into the depth buffer. The transformation that the matrix represents changes the coordinate system from normalized view coordinates to normalized depth buffer coordinates that can then be scaled by depth buffer’s width and height to obtain the absolute depth buffer coordinates.

Note: if the applications intend to use the resulting depth buffer for texturing a mesh, care must be taken to ensure that the texture coordinates of the mesh vertices are expressed in normalized view coordinates, or that the appropriate coordinate system change is performed in a shader.

The rawValueToMeters attribute contains the scale factor by which the raw depth values from a depth buffer must be multiplied in order to get the depth in meters.

Each XRDepthInformation has an associated view that stores XRView from which the depth information instance was created.

Each XRDepthInformation has an associated depth buffer that contains depth buffer data. Different XRDepthInformations may store objects of different concrete types in the depth buffer.

When attempting to access the depth buffer of XRDepthInformation or any interface that inherits from it, the user agent MUST run the following steps:

- Let depthInformation be the instance whose member is accessed.
- Let view be the depthInformation’s view.
- Let frame be the view’s frame.
- If frame is not active, throw InvalidStateError and abort these steps.
- If frame is not an animationFrame, throw InvalidStateError and abort these steps.
- Proceed with normal steps required to access the member of depthInformation.

3.2. XRCPUDepthInformation

    [Exposed=Window]
    interface XRCPUDepthInformation : XRDepthInformation {
      [SameObject] readonly attribute ArrayBuffer data;

      float getDepthInMeters(float x, float y);
    };

The data attribute contains depth buffer information in raw format, suitable for uploading to a WebGL texture if needed. The data is stored in row-major format, without padding, with each entry corresponding to distance from the view's near plane to the user’s environment, in unspecified units. The size and type of each data entry are determined by depthDataFormat. The values can be converted from unspecified units to meters by multiplying them by rawValueToMeters. The normDepthBufferFromNormView can be used to transform from normalized view coordinates into depth buffer’s coordinate system. When accessed, the algorithm to access the depth buffer MUST be run.

Note: Applications SHOULD NOT attempt to change the contents of data array as this can lead to incorrect results returned by the getDepthInMeters(x, y) method.

The getDepthInMeters(x, y) method can be used to obtain depth at coordinates. When invoked, the algorithm to access the depth buffer MUST be run.

When getDepthInMeters(x, y) method is invoked on an XRCPUDepthInformation depthInformation with x, y, the user agent MUST obtain depth at coordinates by running the following steps:

- Let view be the depthInformation’s view, frame be view’s frame, and session be the frame’s session.
- If x is greater than 1.0 or less than 0.0, throw RangeError and abort these steps.
- If y is greater than 1.0 or less than 0.0, throw RangeError and abort these steps.
- Let normalizedViewCoordinates be a vector representing 3-dimensional point in space, with x coordinate set to x, y coordinate set to y, z coordinate set to 0.0, and w coordinate set to 1.0.
- Let normalizedDepthCoordinates be a result of premultiplying normalizedViewCoordinates vector from the left by depthInformation’s normDepthBufferFromNormView.
- Let depthCoordinates be a result of scaling normalizedDepthCoordinates, with x coordinate multiplied by depthInformation’s width and y coordinate multiplied by depthInformation’s height.
- Let column be the value of depthCoordinates’ x coordinate, truncated to an integer, and clamped to [0, width-1] integer range.
- Let row be the value of depthCoordinates’ y coordinate, truncated to an integer, and clamped to [0, height-1] integer range.
- Let index be equal to row multiplied by width and added to column.
- Let byteIndex be equal to index multiplied by the size of the depth data format.
- Let rawDepth be equal to a value found at index byteIndex in data, interpreted as a number according to session’s depthDataFormat.
- Let rawValueToMeters be equal to depthInformation’s rawValueToMeters.
- Return rawDepth multiplied by rawValueToMeters.
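
For illustration, the lookup steps can be reproduced in application code roughly as shown below. The sketch assumes the "luminance-alpha" data format (2-byte unsigned entries) and an object shaped like XRCPUDepthInformation; the function name `depthInMetersAt` and the mock object are hypothetical. The matrix is read in column-major order, which is how XRRigidTransform exposes its matrix attribute.

```javascript
// Sketch of the lookup steps for the "luminance-alpha" data format.
// `info` is assumed to have the shape of an XRCPUDepthInformation.
function depthInMetersAt(info, x, y) {
  if (x < 0.0 || x > 1.0 || y < 0.0 || y > 1.0) {
    throw new RangeError("x and y must be within [0, 1]");
  }
  const m = info.normDepthBufferFromNormView.matrix; // column-major 4x4

  // Multiply the matrix by the column vector (x, y, 0, 1).
  const normX = m[0] * x + m[4] * y + m[12];
  const normY = m[1] * x + m[5] * y + m[13];

  // Scale to buffer coordinates, truncate, and clamp to the valid range.
  const clampIndex = (value, max) => Math.min(Math.max(Math.trunc(value), 0), max);
  const column = clampIndex(normX * info.width, info.width - 1);
  const row = clampIndex(normY * info.height, info.height - 1);

  // Indexing a Uint16Array by entry is equivalent to reading 2 bytes at
  // byteIndex = index * 2 in the underlying buffer.
  const index = row * info.width + column;
  const rawDepth = new Uint16Array(info.data)[index];
  return rawDepth * info.rawValueToMeters;
}

// Mock 2x2 depth buffer with an identity transform, storing millimeters.
const mockDepthInfo = {
  width: 2,
  height: 2,
  data: new Uint16Array([1000, 2000, 3000, 4000]).buffer,
  rawValueToMeters: 0.001,
  normDepthBufferFromNormView: {
    matrix: new Float32Array([1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0, 0, 0, 1]),
  },
};

console.log(depthInMetersAt(mockDepthInfo, 0.75, 0.25)); // top-right entry, about 2 m
```

In practice getDepthInMeters(x, y) already performs this lookup; a hand-written version is mainly useful when many entries are read per frame and the per-call overhead matters.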

    partial interface XRFrame {
      XRCPUDepthInformation? getDepthInformation(XRView view);
    };

The getDepthInformation(view) method, when invoked on an XRFrame, signals that the application wants to obtain CPU depth information relevant for the frame.

When getDepthInformation(view) method is invoked on an XRFrame frame with an XRView view, the user agent MUST obtain CPU depth information by running the following steps:

- Let session be frame’s session.
- If depth-sensing feature descriptor is not contained in the session’s XR device's list of enabled features for session’s mode, throw a NotSupportedError and abort these steps.
- If frame’s active boolean is false, throw an InvalidStateError and abort these steps.
- If frame’s animationFrame boolean is false, throw an InvalidStateError and abort these steps.
- If frame does not match view’s frame, throw an InvalidStateError and abort these steps.
- If the session’s depthUsage is not "cpu-optimized", throw an InvalidStateError and abort these steps.
- Let depthInformation be a result of creating a CPU depth information instance given frame and view.
- Return depthInformation.

In order to create a CPU depth information instance given XRFrame frame and XRView view, the user agent MUST run the following steps:

- Let result be a new instance of XRCPUDepthInformation.
- Let time be frame’s time.
- Let session be frame’s session.
- Let device be the session’s XR device.
- Let nativeDepthInformation be a result of querying device for the depth information valid as of time, for specified view, taking into account session’s depthUsage and depthDataFormat.
- If nativeDepthInformation is null, return null and abort these steps.
- If the depth buffer present in nativeDepthInformation meets user agent’s criteria to block access to the depth data, return null and abort these steps.
- If the depth buffer present in nativeDepthInformation meets user agent’s criteria to limit the amount of information available in depth buffer, adjust the depth buffer accordingly.
- Initialize result’s width to the width of the depth buffer returned in nativeDepthInformation.
- Initialize result’s height to the height of the depth buffer returned in nativeDepthInformation.
- Initialize result’s normDepthBufferFromNormView to a new XRRigidTransform, based on nativeDepthInformation’s depth coordinates transformation matrix.
- Initialize result’s data to the raw depth buffer returned in nativeDepthInformation.
- Initialize result’s view to view.
- Return result.

The following code demonstrates obtaining CPU depth information within an XRFrameRequestCallback. It is assumed that the session has depth sensing enabled, with usage set to "cpu-optimized" and data format set to "luminance-alpha":

    const session = ...;        // Session created with depth sensing enabled.
    const referenceSpace = ...; // Reference space created from the session.

    function requestAnimationFrameCallback(t, frame) {
      session.requestAnimationFrame(requestAnimationFrameCallback);

      const pose = frame.getViewerPose(referenceSpace);
      if (pose) {
        for (const view of pose.views) {
          const depthInformation = frame.getDepthInformation(view);
          if (depthInformation) {
            useCpuDepthInformation(view, depthInformation);
          }
        }
      }
    }

Once the XRCPUDepthInformation is obtained, it can be used to discover a distance from the view plane to user’s environment (see § 4 Interpreting the results section for details). The below code demonstrates obtaining the depth at normalized view coordinates of (0.25, 0.75):

    function useCpuDepthInformation(view, depthInformation) {
      const depthInMeters = depthInformation.getDepthInMeters(0.25, 0.75);

      console.log("Depth at normalized view coordinates (0.25, 0.75) is:",
                  depthInMeters);
    }
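
When many entries are needed at once, reading the data attribute directly may be more practical than repeated getDepthInMeters(x, y) calls. The sketch below scans a "luminance-alpha" buffer for the nearest depth. The helper name `nearestDepthInMeters` is hypothetical, and treating a raw value of 0 as "no data" is an assumption made for this example rather than something the text above states.

```javascript
// Hypothetical helper: returns the smallest non-zero depth, in meters,
// found in a "luminance-alpha" CPU depth buffer, or null if there is none.
// ASSUMPTION: a raw value of 0 means "no depth available" for that entry.
function nearestDepthInMeters(depthInformation) {
  // A read-only view; the contents of `data` are never modified.
  const raw = new Uint16Array(depthInformation.data);
  let nearest = Infinity;
  for (const value of raw) {
    if (value !== 0 && value < nearest) {
      nearest = value;
    }
  }
  return nearest === Infinity
    ? null
    : nearest * depthInformation.rawValueToMeters;
}

// Mock object standing in for an XRCPUDepthInformation.
const mockCpuDepth = {
  data: new Uint16Array([0, 1500, 800, 2300]).buffer,
  rawValueToMeters: 0.001,
};
console.log(nearestDepthInMeters(mockCpuDepth)); // about 0.8
```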

3.3. XRWebGLDepthInformation

    [Exposed=Window]
    interface XRWebGLDepthInformation : XRDepthInformation {
      [SameObject] readonly attribute WebGLTexture texture;
    };

The texture attribute contains depth buffer information as an opaque texture. Each texel corresponds to distance from the view's near plane to the user’s environment, in unspecified units. The size and type of each data entry are determined by depthDataFormat. The values can be converted from unspecified units to meters by multiplying them by rawValueToMeters. The normDepthBufferFromNormView can be used to transform from normalized view coordinates into depth buffer’s coordinate system. When accessed, the algorithm to access the depth buffer of XRDepthInformation MUST be run.

    partial interface XRWebGLBinding {
      XRWebGLDepthInformation? getDepthInformation(XRView view);
    };

The getDepthInformation(view) method, when invoked on an XRWebGLBinding, signals that the application wants to obtain WebGL depth information relevant for the frame.

When getDepthInformation(view) method is invoked on an XRWebGLBinding binding with an XRView view, the user agent MUST obtain WebGL depth information by running the following steps:

- Let session be binding’s session.
- Let frame be view’s frame.
- If session does not match frame’s session, throw an InvalidStateError and abort these steps.
- If depth-sensing feature descriptor is not contained in the session’s XR device's list of enabled features for session’s mode, throw a NotSupportedError and abort these steps.
- If the session’s depthUsage is not "gpu-optimized", throw an InvalidStateError and abort these steps.
- If frame’s active boolean is false, throw an InvalidStateError and abort these steps.
- If frame’s animationFrame boolean is false, throw an InvalidStateError and abort these steps.
- Let depthInformation be a result of creating a WebGL depth information instance given frame and view.
- Return depthInformation.

In order to create a WebGL depth information instance given XRFrame frame and XRView view, the user agent MUST run the following steps:

- Let result be a new instance of XRWebGLDepthInformation.
- Let time be frame’s time.
- Let session be frame’s session.
- Let device be the session’s XR device.
- Let nativeDepthInformation be a result of querying device’s native depth sensing for the depth information valid as of time, for specified view, taking into account session’s depthUsage and depthDataFormat.
- If nativeDepthInformation is null, return null and abort these steps.
- If the depth buffer present in nativeDepthInformation meets user agent’s criteria to block access to the depth data, return null and abort these steps.
- If the depth buffer present in nativeDepthInformation meets user agent’s criteria to limit the amount of information available in depth buffer, adjust the depth buffer accordingly.
- Initialize result’s width to the width of the depth buffer returned in nativeDepthInformation.
- Initialize result’s height to the height of the depth buffer returned in nativeDepthInformation.
- Initialize result’s normDepthBufferFromNormView to a new XRRigidTransform, based on nativeDepthInformation’s depth coordinates transformation matrix.
- Initialize result’s texture to an opaque texture containing the depth buffer returned in nativeDepthInformation.
- Initialize result’s view to view.
- Return result.

The following code demonstrates obtaining WebGL depth information within an XRFrameRequestCallback. It is assumed that the session has depth sensing enabled, with usage set to "gpu-optimized" and data format set to "luminance-alpha":

    const session = ...;        // Session created with depth sensing enabled.
    const referenceSpace = ...; // Reference space created from the session.
    const glBinding = ...;      // XRWebGLBinding created from the session.

    function requestAnimationFrameCallback(t, frame) {
      session.requestAnimationFrame(requestAnimationFrameCallback);

      const pose = frame.getViewerPose(referenceSpace);
      if (pose) {
        for (const view of pose.views) {
          const depthInformation = glBinding.getDepthInformation(view);
          if (depthInformation) {
            useGpuDepthInformation(view, depthInformation);
          }
        }
      }
    }

Once the XRWebGLDepthInformation is obtained, it can be used to discover a distance from the view plane to user’s environment (see § 4 Interpreting the results section for details). The below code demonstrates how the data can be transferred to the shader:

    const gl = ...;            // GL context to use.
    const shaderProgram = ...; // Linked WebGLProgram.

    const programInfo = {
      uniformLocations: {
        depthTexture: gl.getUniformLocation(shaderProgram, 'uDepthTexture'),
        uvTransform: gl.getUniformLocation(shaderProgram, 'uUvTransform'),
        rawValueToMeters: gl.getUniformLocation(shaderProgram, 'uRawValueToMeters'),
      }
    };

    function useGpuDepthInformation(view, depthInformation) {
      // ...

      // Select texture unit 0 first, so the depth texture is bound to it.
      gl.activeTexture(gl.TEXTURE0);
      gl.bindTexture(gl.TEXTURE_2D, depthInformation.texture);
      gl.uniform1i(programInfo.uniformLocations.depthTexture, 0);

      gl.uniformMatrix4fv(programInfo.uniformLocations.uvTransform, false,
                          depthInformation.normDepthBufferFromNormView.matrix);
      gl.uniform1f(programInfo.uniformLocations.rawValueToMeters,
                   depthInformation.rawValueToMeters);

      // ...
    }

A fragment shader that makes use of the depth buffer could look, for example, as follows:

    precision mediump float;

    uniform sampler2D uDepthTexture;
    uniform mat4 uUvTransform;
    uniform float uRawValueToMeters;

    varying vec2 vTexCoord;

    float DepthGetMeters(in sampler2D depth_texture, in vec2 depth_uv) {
      // Depth is packed into the luminance and alpha components of its texture.
      // The texture is a normalized format, storing millimeters.
      vec2 packedDepth = texture2D(depth_texture, depth_uv).ra;
      return dot(packedDepth, vec2(255.0, 256.0 * 255.0)) * uRawValueToMeters;
    }

    void main(void) {
      vec2 texCoord = (uUvTransform * vec4(vTexCoord.xy, 0, 1)).xy;
      float depthInMeters = DepthGetMeters(uDepthTexture, texCoord);

      gl_FragColor = ...;
    }
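
The unpacking arithmetic in DepthGetMeters can be checked on the CPU. The sketch below mirrors the dot product, assuming (as the shader weights imply) that the luminance channel carries the low byte and the alpha channel carries the high byte of each 16-bit entry. The function name `unpackLuminanceAlpha` is hypothetical.

```javascript
// Mirrors the shader's dot product on the CPU. `luminance` and `alpha` are
// the normalized channel values in [0, 1] that a sampler would return.
function unpackLuminanceAlpha(luminance, alpha) {
  return luminance * 255.0 + alpha * 256.0 * 255.0;
}

// Split a raw 16-bit value into bytes, normalize them the way a texture
// sampler would, and reassemble the value.
const rawValue = 1234;
const lowByte = rawValue & 0xff; // assumed to be stored in luminance
const highByte = rawValue >> 8;  // assumed to be stored in alpha
const unpacked = unpackLuminanceAlpha(lowByte / 255, highByte / 255);
console.log(Math.round(unpacked)); // 1234
```

Each channel is a byte divided by 255, so multiplying by 255 recovers the byte and the extra factor of 256 shifts the high byte into place.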

4. Interpreting the results

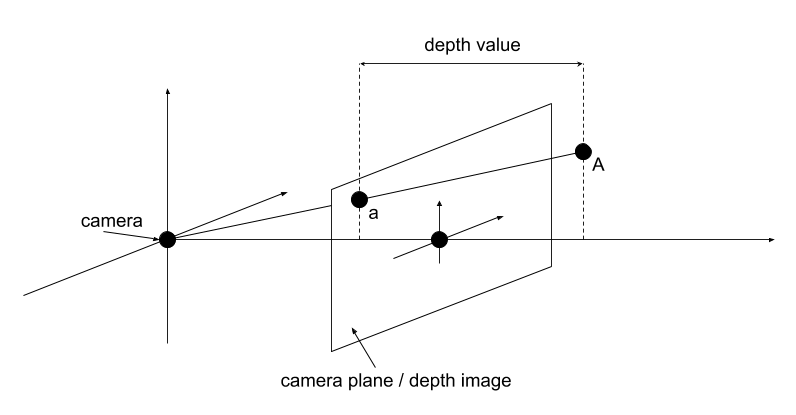

The values stored in data and texture represent distance from the camera plane to the real-world-geometry (as understood by the XR system). In the below example, the depth value at point a = (x, y) corresponds to the distance of point A to the camera plane. Specifically, the depth value does NOT represent the length of aA vector.

The above image corresponds to the following code:

    // depthInfo is of type XRCPUDepthInformation:
    const depthInMeters = depthInfo.getDepthInMeters(x, y);
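
If an application does need the length of the aA vector, it can be derived from the plane depth by dividing by the cosine of the angle between the ray through a and the view axis. The sketch below assumes a view-space ray direction with the camera looking down the negative Z axis; `rayLengthFromPlaneDepth` and the `direction` parameter are hypothetical names introduced for this example.

```javascript
// Converts a plane depth (perpendicular distance to the camera plane) into
// the distance measured along a ray. `direction` is a hypothetical
// view-space direction vector; the camera is assumed to look down -Z.
function rayLengthFromPlaneDepth(depthInMeters, direction) {
  const [dx, dy, dz] = direction;
  const length = Math.hypot(dx, dy, dz);
  const cosine = Math.abs(dz) / length; // cosine of the angle to the view axis
  if (!(cosine > 0)) {
    throw new RangeError("Ray must have a component along the view axis");
  }
  return depthInMeters / cosine;
}

// Along the view axis both distances coincide.
console.log(rayLengthFromPlaneDepth(2.0, [0, 0, -1]));
// An off-axis ray is longer than the plane depth (about 5 for a depth of 3).
console.log(rayLengthFromPlaneDepth(3.0, [0, 4, -3]));
```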

5. Native device concepts

5.1. Native depth sensing

The depth sensing specification assumes that the native device on top of which the depth sensing API is implemented provides a way to query device’s native depth sensing capabilities. The device is said to support querying device’s native depth sensing capabilities if it exposes a way of obtaining view-aligned depth buffer data. The depth buffer data MUST contain buffer dimensions, the information about the units used for the values stored in the buffer, and a depth coordinates transformation matrix that performs a coordinate system change from normalized view coordinates to normalized depth buffer coordinates. This transform should leave z coordinate of the transformed 3D vector unaffected.

The device can support depth sensing usage in 2 ways. If the device is primarily capable of returning the depth data through CPU-accessible memory, it is said to support "cpu-optimized" usage. If the device is primarily capable of returning the depth data through GPU-accessible memory, it is said to support "gpu-optimized" usage.

Note: The user agent can choose to support both usage modes (e.g. when the device is capable of providing both CPU- and GPU-accessible data, or by performing the transfer between CPU- and GPU-accessible data manually).

The device can support depth sensing data format given depth sensing usage in the following ways. If, given the depth sensing usage, the device is able to return depth data as a buffer containing 2-byte unsigned integers, it is said to support "luminance-alpha" data format. If, given the depth sensing usage, the device is able to return depth data as a buffer containing 4-byte floating point values, it is said to support "float32" data format.

The device is said to support depth sensing usage and data format combination if it supports depth sensing usage and supports depth sensing data format given the specified usage.

Note: the support of depth sensing API is not limited only to hardware classified as AR-capable, although it is expected that the feature will be more common in such devices. VR devices that contain appropriate sensors and/or use other techniques to provide depth buffer should also be able to provide the data necessary to implement depth sensing API.

6. Privacy & Security Considerations

The depth sensing API provides additional information about users' environment to the websites, in the format of depth buffer. Given a depth buffer with sufficiently high resolution, and with sufficiently high precision, the websites could potentially learn more detailed information than the users are comfortable with. Depending on the underlying technology used, the depth data may be created based on camera image and IMU sensors.

In order to mitigate privacy risks to the users, user agents should seek user consent prior to enabling the depth sensing API on a session. In addition, as the depth sensing technologies & hardware improve, the user agents should consider limiting the amount of information exposed through the API, or blocking access to the data returned from the API if it is not feasible to introduce such limitations. To limit the amount of information, the user agents could for example reduce the resolution of the resulting depth buffer, or reduce the precision of values present in the depth buffer (for example by quantization). User agents that decide to limit the amount of data in such a way will still be considered as implementing this specification.
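
The two mitigations mentioned above can be illustrated with a small sketch. It is not taken from any user agent: the function names, the quantization step, and the nearest-neighbor decimation strategy are all assumptions chosen for brevity.

```javascript
// Illustrative only: two ways a depth buffer could be coarsened. Function
// names and parameters are hypothetical, not taken from any user agent.

// Reduces precision by snapping each raw value down to a multiple of `step`.
function quantizeDepth(values, step) {
  return values.map((value) => Math.floor(value / step) * step);
}

// Reduces resolution by keeping every `factor`-th column and row.
function downsampleDepth(values, width, height, factor) {
  const outWidth = Math.ceil(width / factor);
  const outHeight = Math.ceil(height / factor);
  const out = new Uint16Array(outWidth * outHeight);
  for (let row = 0; row < outHeight; row++) {
    for (let column = 0; column < outWidth; column++) {
      out[row * outWidth + column] =
        values[row * factor * width + column * factor];
    }
  }
  return { data: out, width: outWidth, height: outHeight };
}

const coarse = quantizeDepth(new Uint16Array([1234, 999, 50]), 100);
console.log(Array.from(coarse)); // [1200, 900, 0]

const smaller = downsampleDepth(new Uint16Array([1, 2, 3, 4, 5, 6, 7, 8]), 4, 2, 2);
console.log(smaller.width, smaller.height, Array.from(smaller.data)); // 2 1 [1, 3]
```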

In case the user agent is capable of providing depth buffers that are detailed enough that they become equivalent to information provided by the device’s cameras, it must first obtain user consent that is equivalent to consent needed to obtain camera access.

Changes

Changes from the First Public Working Draft 31 August 2021

7. Acknowledgements

The following individuals have contributed to the design of the WebXR Depth Sensing specification: