Transcript

I'm pretty nervous, because I'm going to talk in English in such a fantastic place. Anyway, I'm going to talk as a representative of Japanese developers. So let's get started.

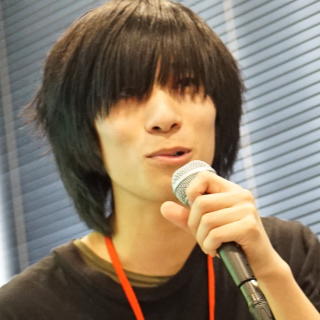

Hi, I'm Yutaka. I'm doing engineering at a company called Pixiv.

I'm working on a 3D character platform that's called VRoid Hub.

I'm also a demoscener, doing WebGL stuff as my hobby.

So today I am going to talk about the implementation of a 3D character viewer on the Web:

- Firstly, I'll talk about VRM, a format for 3D avatars.

- Secondly, I'm going to talk about its implementation on the Web.

- Lastly, some issues we encountered during our development.

So first let me talk about our web service called VRoid Hub.

For context, nowadays it has become much easier to make your own 3D characters. VRoid Hub aims to be a hub for 3D characters. It's on the Web, so we must present 3D characters in web browsers, utilizing their full potential.

So, we are using a 3D character format called VRM. VRM is pretty good at describing 3D characters.

Actually, VRM is an extension of glTF. glTF is a format for 3D models, developed by the Khronos Group.

VRM has so many features to deal with humanoid avatars.

For example, you can refer to a character's specific bone with ease, like the hips or legs, or whatever. Or you can see its meta fields, which describe its usage conditions: for example, can you use this avatar as your own avatar, or not?
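As a rough illustration of those two features, here is a minimal sketch that reads them from the JSON part of a file. It assumes the VRM 0.x layout, where the data lives under `extensions.VRM` with a `humanoid.humanBones` list and a `meta.allowedUserName` field. The helper names `findHumanBoneNode` and `canAnyoneUseAsAvatar` and the `sample` object are made up for this example and are not part of any library.

```typescript
// Minimal shapes for the parts of a VRM 0.x file used here (assumed layout).
interface VrmHumanBone {
  bone: string; // humanoid bone name such as "hips"
  node: number; // index into the glTF "nodes" array
}
interface VrmExtension {
  meta?: { allowedUserName?: string };
  humanoid?: { humanBones?: VrmHumanBone[] };
}
interface GltfJson {
  nodes?: { name?: string }[];
  extensions?: { VRM?: VrmExtension };
}

// Returns the glTF node index mapped to a humanoid bone, or undefined if absent.
function findHumanBoneNode(gltf: GltfJson, boneName: string): number | undefined {
  const bones = gltf.extensions?.VRM?.humanoid?.humanBones ?? [];
  for (const entry of bones) {
    if (entry.bone === boneName) return entry.node;
  }
  return undefined;
}

// True only when the meta field says that anyone may use the model as an avatar.
function canAnyoneUseAsAvatar(gltf: GltfJson): boolean {
  return gltf.extensions?.VRM?.meta?.allowedUserName === "Everyone";
}

// Hypothetical, heavily trimmed file contents for illustration.
const sample: GltfJson = {
  nodes: [{ name: "Root" }, { name: "J_Bip_C_Hips" }],
  extensions: {
    VRM: {
      meta: { allowedUserName: "OnlyAuthor" },
      humanoid: { humanBones: [{ bone: "hips", node: 1 }] },
    },
  },
};
```

With this sample, `findHumanBoneNode(sample, "hips")` gives the node index of the hips, and `canAnyoneUseAsAvatar(sample)` is false because only the author is allowed.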

However, most of these specs are meant to be used by modern game engines like Unity, or Unreal Engine, or... So, we had to adapt these specs to how we treat 3D stuff on the Web.

Also, Pixiv is one of the members of an organization called the VRM Consortium. So, we are taking part in discussing the specification of the VRM format.

Well, so, let's go on to the implementation.

We implemented VRM import as a module for Three.js. Three.js already has a glTF implementation, so we only had to implement the VRM-specific features.

We released our implementation on GitHub early last week, so if you are interested in VRM, please check it out.

So, I'm going to talk especially about the implementation of materials. In VRM we can use a material called MToon. MToon is basically a toon material, but it has so many features.

Here's a comparison of a simple material and MToon. As you can see, MToon is way more expressive.

So, in the 3DCG world, we describe what materials look like using a program called a shader. Here is a simple example of a shader program. It simply outputs a color.

Or this one, as the example shows, does texture mapping.

So, since shaders are described as programs, they lack portability.

We need a well-described material specification to implement a material for various platforms or contexts.
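The slides are not part of this transcript, so as a stand-in, here is a minimal sketch of what such shaders typically look like in WebGL, written as GLSL ES 1.00 source held in TypeScript strings. The constant names, the uniform name `map`, and the varying name `vUv` are assumptions for this example and are not taken from the talk.

```typescript
// Fragment shader that simply outputs one fixed color for every pixel.
const solidColorFragmentShader = `
precision mediump float;

void main() {
  gl_FragColor = vec4(1.0, 0.5, 0.2, 1.0); // RGBA, each channel in 0..1
}
`;

// Fragment shader that does texture mapping: it looks up the texture
// at the UV coordinate interpolated from the vertex shader.
const texturedFragmentShader = `
precision mediump float;

uniform sampler2D map; // hypothetical uniform name for the texture
varying vec2 vUv;      // hypothetical varying written by the vertex shader

void main() {
  gl_FragColor = texture2D(map, vUv);
}
`;
```

Strings like these could be passed as the `fragmentShader` option of a Three.js `ShaderMaterial`, together with a matching vertex shader that writes `vUv`.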

In glTF, a material called PBR material is used, and it's very common, since it's based on academic research. PBR stands for physically-based rendering, so it's basically really good at expressing photorealistic materials.

However, the specification of MToon is completely different from PBR materials, since it's a toon-like material. And also, we don't have any documentation for implementing MToon. There is just the actual implementation in Unity. So, we have to convert the actual Unity implementation into Three.js.

So, as I said before, MToon has so many features. Yeah, and so we had to write pretty long shader code. It was a really painful time.

Yeah, this is just my opinion, but I think it should be node-based. These days, most 3DCG software has a node-based material editor. Three.js also has NodeMaterial.

It is still at an experimental stage, though, but if we could describe the specification of our MToon material in a portable node notation, that would be great.

Lastly, I am going to talk about some issues we encountered during the development of our service.

VRoid Hub is meant to be visited from both PC and mobile devices, so we have to make our experience tolerable even on mobile devices. But, as you can see, the outline looks pretty jaggy without antialiasing. Antialiasing is a technique to perceptually remove aliasing.

We cannot use multisample antialiasing in WebGL. We can use it in WebGL2, though, but very few devices support WebGL2.

So we are rendering our entire scene at double resolution, plus doing post-processing antialiasing too. It's pretty expensive on mobile devices.
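To make the cost concrete, here is a minimal sketch of how the size of a double-resolution render target could be computed from the size of the canvas on the page. The function name `supersampledSize` and the `maxSide` limit are assumptions for this example; the talk only says that everything is rendered at double resolution.

```typescript
interface RenderSize {
  width: number;
  height: number;
}

// Computes the pixel size of an offscreen render target that is `scale`
// times larger than the canvas in each direction. The result is shrunk
// uniformly if a side would exceed `maxSide` (a hypothetical safety limit).
function supersampledSize(
  cssWidth: number,
  cssHeight: number,
  scale: number = 2,
  maxSide: number = 4096
): RenderSize {
  let width = cssWidth * scale;
  let height = cssHeight * scale;
  const largest = Math.max(width, height);
  if (largest > maxSide) {
    const shrink = maxSide / largest;
    width *= shrink;
    height *= shrink;
  }
  return {
    width: Math.max(1, Math.round(width)),
    height: Math.max(1, Math.round(height)),
  };
}
```

For a 375 by 667 canvas this gives a 750 by 1334 target, which is four times as many pixels to shade per frame, before the post-processing pass is even counted.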

So, we wanted to implement quality control in our renderer. We want to render things in higher quality on high-end devices, but not on low-end ones. And since we are building a web application, we don't want to make users tweak something like a settings window.

We wanted to do adaptive quality control. But inspecting a device's processing power is pretty hard. There is one option.

That's the actual frames per second, but FPS can be affected by various conditions that have nothing to do with device power. Plus, there are two kinds of 60 FPS in this world: one device renders our stuff with ease, but the other is using its maximum power.

So we wanted to measure the actual processing time, but we cannot see the GPU time. However, there is a WebGL extension: EXT_disjoint_timer_query measures the GPU time. But it's still not well supported, especially on mobile devices. So we are currently falling back to watching FPS in the end, with a very low threshold.
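A minimal sketch of that fallback, under stated assumptions: it checks whether a timer query extension is available, and otherwise averages FPS over a window of frames and drops to low quality once the average falls under a deliberately low threshold. The class name, the threshold of 20 FPS, and the window of 30 frames are made up for this example and are not the values used in VRoid Hub. Quality only ever goes down here, because, as said above, a steady 60 FPS does not tell us how much headroom a device has.

```typescript
// Anything exposing getExtension, for example a WebGLRenderingContext.
interface ExtensionSource {
  getExtension(name: string): unknown;
}

// True when the context exposes a GPU timer query extension
// (the WebGL1 or the WebGL2 variant).
function hasGpuTimer(gl: ExtensionSource): boolean {
  return (
    gl.getExtension("EXT_disjoint_timer_query") != null ||
    gl.getExtension("EXT_disjoint_timer_query_webgl2") != null
  );
}

// FPS-based fallback: switches to low quality, one way only.
class FpsQualityController {
  private readonly minFps: number;
  private readonly windowSize: number;
  private frameTimes: number[] = [];
  private lowQuality = false;

  constructor(minFps: number = 20, windowSize: number = 30) {
    this.minFps = minFps;
    this.windowSize = windowSize;
  }

  // Feed the duration of each rendered frame in milliseconds.
  addFrame(deltaMs: number): void {
    this.frameTimes.push(deltaMs);
    if (this.frameTimes.length < this.windowSize) return;

    let totalMs = 0;
    for (const t of this.frameTimes) totalMs += t;
    const averageFps = (1000 * this.frameTimes.length) / totalMs;
    this.frameTimes = []; // start a fresh measurement window

    if (averageFps < this.minFps) this.lowQuality = true;
  }

  isLowQuality(): boolean {
    return this.lowQuality;
  }
}
```

In a render loop, `addFrame` would be called once per `requestAnimationFrame` callback with the time since the previous frame, and the renderer would skip the double-resolution pass once `isLowQuality()` returns true.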

We are also encountering security issues. It's relatively harder than in native applications to protect users' creations on the Web. We have to protect users' creations at all costs.

On VRoid Hub, there are users who sell their textures in their shops, so we want to protect even the textures.

For context, we are currently using a binary format. Plus, it's encrypted, so it's hard to crack the data. And for more context, we have to take these steps to show 3D models with textures.

First, fetch the binary and get the image chunks, then send the images to the GPU.

As I said, we don't want users' textures being yoinked, but they get exposed when you just open the browser DevTools if you use an HTML img element. So, we are preventing this by decoding the textures ourselves.

By the way, the WebCodecs API was proposed several months ago. It will be nice for decoding audio and video. And also, I think it would be nice if we could have an equivalent of this API for images.
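The "get the chunks" step can be sketched as follows. This is only an illustration that assumes the plain glTF binary container (GLB), which is what a VRM file is: a 12-byte header followed by length-prefixed chunks. The actual encrypted format used by VRoid Hub is not described in the talk, so decryption is left out, and the function name `readGlbChunks` is made up for this example.

```typescript
const GLB_MAGIC = 0x46546c67;  // ASCII "glTF", read as little-endian uint32
const CHUNK_JSON = 0x4e4f534a; // ASCII "JSON"
const CHUNK_BIN = 0x004e4942;  // ASCII "BIN" followed by a zero byte

interface GlbChunk {
  type: number;     // CHUNK_JSON, CHUNK_BIN, or an unknown chunk type
  data: Uint8Array; // view into the original buffer, not a copy
}

// Splits a GLB file into its chunks without decoding their contents.
function readGlbChunks(buffer: ArrayBuffer): GlbChunk[] {
  const view = new DataView(buffer);
  if (buffer.byteLength < 12 || view.getUint32(0, true) !== GLB_MAGIC) {
    throw new Error("Not a GLB file");
  }
  // Bytes 4..7 hold the container version, bytes 8..11 the total length.
  const totalLength = Math.min(view.getUint32(8, true), buffer.byteLength);

  const chunks: GlbChunk[] = [];
  let offset = 12;
  while (offset + 8 <= totalLength) {
    const length = view.getUint32(offset, true);
    const type = view.getUint32(offset + 4, true);
    const start = offset + 8;
    if (start + length > totalLength) {
      throw new Error("Truncated GLB chunk");
    }
    chunks.push({ type, data: new Uint8Array(buffer, start, length) });
    offset = start + length;
  }
  return chunks;
}
```

In a browser, the buffer would typically come from `fetch(url)` followed by `response.arrayBuffer()`. The image bytes sit inside the BIN chunk at the offsets listed in the JSON chunk's `bufferViews`, and decoding those bytes to pixels in script, rather than handing them to an `img` element, is what keeps them out of the DevTools resource list.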

And that's the end of my talk.

Thank you.