Foundational Layer

Contents

- 1 The Stimulus-Sensor-Observation Ontology Design Pattern and its Integration into the Semantic Sensor Network Ontology

The Stimulus-Sensor-Observation Ontology Design Pattern and its Integration into the Semantic Sensor Network Ontology

Intermediate version of SSN XG Report with content aligned with the following paper

Janowicz, K. and Compton, M. (2010): The Stimulus-Sensor-Observation Ontology Design Pattern and its Integration into the Semantic Sensor Network Ontology. In Kerry Taylor, Arun Ayyagari, David De Roure (Eds.): The 3rd International Workshop on Semantic Sensor Networks 2010 (SSN10) in conjunction with the 9th International Semantic Web Conference (ISWC 2010), 7-11 November 2010, Shanghai, China.

Superseded by SSN Skeleton

Skeleton: the core pattern for Sensor Ontologies

Introduction

Skeleton module

- Skeleton module

- Links between SSN and DUL (classes and properties)

- Documentation for the DUL classes and properties used by the SSN ontology (extracted from dul.owl)

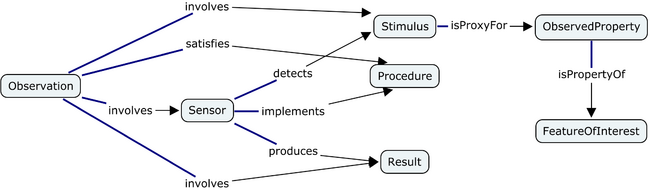

This section introduces a skeleton that acts as a basis for sensor ontologies and for their integration into linked sensor data. The skeleton is designed following the principle of minimal ontological commitments and is not aligned to any other top-level ontology. It introduces a minimal set of classes and relations centered around the notions of stimuli, sensors, and observations. Based on the work of Quine, the skeleton defines stimuli as the (only) link to the physical environment. Empirical science observes these stimuli using sensors to infer information about environmental properties and to construct features of interest. The sensors themselves may have particular properties, such as an accuracy under certain conditions, or may be deployed to observe a particular feature. The whole SSN ontology thus unfolds around this central pattern, which at its heart relates what a sensor detects to what it observes. While this section outlines and discusses the skeleton, later sections describe the alignment of the skeleton to the DOLCE foundational ontology and the development of the SSN ontology based on this alignment; see section 4.2.2 for an overview.

The relation between the three ontologies is best thought of as layers or modules. The first layer, the skeleton (also referred to as the ontology design pattern), represents the initial conceptualization as a lightweight, minimalistic, and flexible ontology with a minimum of ontological commitments. While this pattern can already be used as a vocabulary for some use cases, other application areas require a more rigid conceptualization to support semantic interoperability. Therefore, we introduce a realization of the pattern based on the classes and relations provided by DOLCE Ultra Light. This ontology can either be used directly, e.g., for Linked Sensor Data, or be integrated into more complex ontologies as a common ground for alignment, matching, translation, or interoperability in general. For this purpose, we demonstrate how the pattern was integrated as the top level of the SSN ontology. Note that, for this reason, new classes and relations are introduced based on subsumption and equivalence. For instance, the first pattern uses the generic involves relation, while the DOLCE-aligned version distinguishes between events and objects and, hence, uses DUL:includesEvent and DUL:includesObject, respectively.
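The refinement of the generic involves relation can be illustrated with a minimal Python sketch. The dictionary and the function `refine_involves` are hypothetical helpers written for this illustration; only the two DUL relation names come from the text. The example relies on the alignment described later in this document, where stimuli are treated as events and sensors as physical objects.

```python
# Hypothetical helper: none of these Python names belong to SSN or DUL.
GENERIC_RELATION = "involves"

# DUL relation that replaces the generic relation, keyed by the kind of
# entity that an observation involves.
DUL_RELATION_BY_KIND = {
    "event": "DUL:includesEvent",
    "object": "DUL:includesObject",
}


def refine_involves(kind: str) -> str:
    """Return the DUL relation that refines the generic 'involves'."""
    try:
        return DUL_RELATION_BY_KIND[kind]
    except KeyError:
        raise ValueError(f"no DUL refinement known for kind {kind!r}") from None


# Stimuli are aligned as events, sensors as (physical) objects.
stimulus_relation = refine_involves("event")
sensor_relation = refine_involves("object")
```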

The Stimulus-Sensor-Observation Ontology Design Pattern

The Stimulus-Sensor-Observation Ontology Design Pattern acts as the upper core-level of the Semantic Sensor Network ontology. While the pattern is an integral part of the SSN ontology, it is also intended to be used separately as a common design pattern for all kinds of sensor-based or observation-based ontologies and vocabularies for the Semantic Sensor Web and especially Linked Data. The pattern is developed following the principle of minimal ontological commitments to make it reusable for a variety of application areas. To ease the interpretation of the primitives used, as well as to support ontology alignment and matching, the pattern is aligned to the ultra light version of the DOLCE foundational ontology; see Foundational_Layer for details. The names of classes and relations have been selected based on a review of existing sensor and observation ontologies [Compton et al. 2009a].

Stimuli

Stimuli are detectable changes in the environment, i.e., in the physical world. They are the starting point of each measurement as they act as triggers for sensors. Stimuli can be related either directly or indirectly to observable properties and, therefore, to features of interest. They can also be actively produced by a sensor to perform observations. The same type of stimulus can trigger different kinds of sensors and be used to reason about different properties. Nevertheless, a stimulus may only be usable as a proxy for a specific region of an observed property.

Sensors

Sensors are physical objects that perform observations, i.e., they transform an incoming stimulus into another, often digital, representation. Sensors are not restricted to technical devices but also include humans as observers. A clear distinction needs to be drawn between sensors as objects and the process of sensing. We assume that objects are sensors while they perform sensing, i.e., while they are deployed. Furthermore, we also distinguish between the sensor and a procedure, i.e., a description that defines how a sensor should be realized and deployed to measure a certain observable property. Similarly to the capabilities of particular stimuli, sensors can only operate under certain conditions. These characteristics are modeled as observable properties of the sensors and include their survival range or accuracy of measurement under defined external conditions. Finally, sensors can be combined into sensor systems and networks. Many sensors need to keep track of time and location to produce meaningful results and, hence, are combined with further sensors into sensor systems such as weather stations.

Observations

Observations act as the nexus between incoming stimuli, the sensor, and the output of the sensor, i.e., a symbol representing a region in a dimensional space. Therefore, we regard observations as social, not physical, objects. Observations can also fix other parameters such as time and location. These can be specified as parts of the observation procedure. The same sensor can be positioned in different ways and, hence, collect data about different properties. In many cases, sensors perform additional processing steps or produce single results based on a series of incoming stimuli. Therefore, observations are contexts for the interpretation of the incoming stimuli rather than physical events.

Observed Properties

Properties are qualities that can be observed via stimuli by a certain type of sensor. They inhere in features of interest and do not exist independently. While this does not imply that they do not exist without observations, our domain is restricted to those observations for which sensors can be implemented based on certain procedures and stimuli. To minimize the number of ontological commitments related to the existence of entities in the physical world, observed properties are the only connection between stimuli, sensors, and observations on the one hand, and features of interest on the other hand.

Features of Interest

Features of Interest are entities in the real world that are the target of sensing. As entities are reifications, the decision of how to carve out fields of sensory input to form such features is arbitrary to a certain degree and, therefore, has to be fixed by the observation (procedure).

Sensing Method

Sensing is a description of how a sensor works, i.e., how a certain type of stimulus is transformed into a digital representation, perhaps a description of the scientific method behind the sensor. Consequently, sensors can be thought of as implementations of sensing methods, where different methods can be used to derive information about the same type of observed property. Sensing methods can also be used to describe how observations were made, e.g., how a sensor was positioned and used. Simplifying, one can think of sensing methods as recipes for observing.

Result

The result is a symbol representing a value as the outcome of the observation. Results can act as stimuli for other sensors and can range from counts and Booleans to images or binary data in general.
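Taken together, the classes of the pattern can be sketched as plain Python data classes. This is only an illustration of how the notions relate to each other, not the pattern's formal definition: all class names, field names, and the thermometer example are assumptions made for this sketch. It reflects the statement above that observed properties are the only connection between observations and features of interest.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureOfInterest:
    name: str


@dataclass(frozen=True)
class ObservedProperty:
    name: str
    feature: FeatureOfInterest  # a property inheres in a feature of interest


@dataclass(frozen=True)
class Stimulus:
    description: str
    proxy_for: ObservedProperty  # the property this stimulus is a proxy for


@dataclass(frozen=True)
class SensingMethod:
    description: str  # the "recipe" that a sensor implements


@dataclass(frozen=True)
class Sensor:
    name: str
    implements: SensingMethod


@dataclass(frozen=True)
class Observation:
    """Nexus between a stimulus, a sensor, and the sensor's result."""

    stimulus: Stimulus
    sensor: Sensor
    result: str  # a symbol that represents a value

    @property
    def observed_property(self) -> ObservedProperty:
        return self.stimulus.proxy_for

    @property
    def feature_of_interest(self) -> FeatureOfInterest:
        # Reached only through the observed property, never directly.
        return self.observed_property.feature


# Hypothetical example individuals.
room = FeatureOfInterest("room")
temperature = ObservedProperty("temperature", feature=room)
heat_transfer = Stimulus("heat transfer to the thermometer", proxy_for=temperature)
method = SensingMethod("read the liquid level against a scale")
thermometer = Sensor("thermometer", implements=method)
observation = Observation(heat_transfer, thermometer, result="21.5 Cel")
```

In this sketch, `observation.feature_of_interest` can only be resolved by going through `observation.observed_property`, which mirrors the role of observed properties as the single link to features of interest.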

Aligning the SSN Ontology and the core Pattern with DOLCE

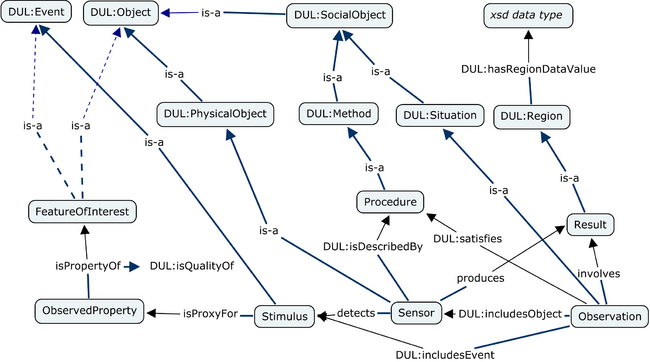

(Figure also showing the DOLCE Ultra Light alignment.)

The Stimulus-Sensor-Observation Ontology Design Pattern is intended as a minimal vocabulary for the Semantic Sensor Web and Linked Data in general. While it introduces the key classes and their relations for the Semantic Sensor Network ontology, the terms used for their specification remain undefined. The advantage of such an underspecified ontology pattern is its flexibility and the possibility to integrate and reuse it in various applications by introducing subclasses. The downside, however, is a lack of explicit ontological commitments and, therefore, reduced semantic interoperability. To restrict the interpretation of the introduced classes and relations towards the intended model, the SSO pattern and the SSN ontology are aligned to the DOLCE Ultra Light (DUL) foundational ontology.

Each SSO class is defined as a subclass of an existing DUL class and related to other SSO and DUL classes. New types of relations are only introduced when the domain or range has to be changed; in all other cases, the relations from DUL are reused. The resulting extension to DUL preserves all ontological commitments discussed for the SSO pattern.
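As a rough overview, the subclass links given in the subsections that follow can be collected in a small lookup table. The sketch below is a simplification under stated assumptions: it records at most one named superclass per class, writes the SSO class names without a prefix, and uses the hypothetical names `SUBCLASS_OF` and `ancestors`. It is not a substitute for the OWL axioms.

```python
# Direct subclass links as stated in this document (one superclass each).
SUBCLASS_OF = {
    "Stimulus": "DUL:Event",
    "Sensor": "DUL:PhysicalObject",
    "Observation": "DUL:Situation",
    "ObservedProperty": "DUL:Quality",
    "Procedure": "DUL:Method",
    "DUL:Method": "DUL:Description",
    "Result": "DUL:Region",
}


def ancestors(cls: str) -> list:
    """Follow the recorded subclass links upwards, nearest superclass first."""
    chain = []
    while cls in SUBCLASS_OF:
        cls = SUBCLASS_OF[cls]
        chain.append(cls)
    return chain


procedure_ancestors = ancestors("Procedure")
sensor_ancestors = ancestors("Sensor")
```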

Stimuli

The SSO class Stimulus can be defined either as a subclass of DUL:Event or as a subclass of one of its immediate subclasses, Action and Process. In contrast to processes, actions require at least one agent as participant and, therefore, would be too restrictive for the design pattern. The classification of events in DUL is work in progress. For instance, nothing is said about how processes differ from other kinds of events. Therefore, the pattern defines a Stimulus as a subclass of DUL:Event. As a consequence, stimuli need at least one DUL:Object as participant.

Sensors

Sensors are defined as subclasses of physical objects (DUL:PhysicalObject). Therefore, they have to participate in at least one DUL:Event such as their deployment. This is comparable to the ontological distinction between a human and a human's life. Sensors are related to procedures and observations using the DUL:isDescribedBy and DUL:isObjectIncludedIn relations, respectively.

    <owl:Class rdf:about="http://purl.oclc.org/NET/ssnx/ssn#Sensor">
      <rdfs:label>Sensor</rdfs:label>
      ...
      <rdfs:subClassOf rdf:resource="http://www.loa-cnr.it/ontologies/DUL.owl#PhysicalObject"/>
      ...
    </owl:Class>

As an example, the code snippet shows how sensors are defined as physical objects in terms of DOLCE.
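To check such a definition programmatically, the class declaration can be read with Python's standard XML parser. The sketch below is an assumption-laden illustration: it wraps the class definition in an `rdf:RDF` root element so that it parses on its own, leaves out the parts that are elided with "..." in the snippet, and treats the RDF/XML purely as XML rather than using an RDF library. The function name `named_superclasses` is hypothetical.

```python
import xml.etree.ElementTree as ET

RDF = "http://www.w3.org/1999/02/22-rdf-syntax-ns#"
RDFS = "http://www.w3.org/2000/01/rdf-schema#"
OWL = "http://www.w3.org/2002/07/owl#"

# The Sensor class definition, wrapped in an rdf:RDF root with the
# namespace declarations it needs; elided parts are omitted.
SNIPPET = f"""
<rdf:RDF xmlns:rdf="{RDF}" xmlns:rdfs="{RDFS}" xmlns:owl="{OWL}">
  <owl:Class rdf:about="http://purl.oclc.org/NET/ssnx/ssn#Sensor">
    <rdfs:label>Sensor</rdfs:label>
    <rdfs:subClassOf rdf:resource="http://www.loa-cnr.it/ontologies/DUL.owl#PhysicalObject"/>
  </owl:Class>
</rdf:RDF>
"""


def named_superclasses(rdf_xml: str) -> dict:
    """Map each named owl:Class IRI to the IRIs of its named superclasses."""
    root = ET.fromstring(rdf_xml)
    result = {}
    for cls in root.iter(f"{{{OWL}}}Class"):
        iri = cls.get(f"{{{RDF}}}about")
        if iri is None:
            continue  # skip anonymous class expressions
        result[iri] = [
            sub.get(f"{{{RDF}}}resource")
            for sub in cls.findall(f"{{{RDFS}}}subClassOf")
            if sub.get(f"{{{RDF}}}resource") is not None
        ]
    return result


superclasses = named_superclasses(SNIPPET)
```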

Observations

The class Observation is specified as a subclass of DUL:Situation, which in turn is a subclass of DUL:SocialObject. The required relations to stimuli, sensors, and results can be modeled using the DUL:includesEvent, DUL:includesObject, and DUL:isSettingFor relations, respectively. Observation procedures can be integrated using DUL:satisfies. The decision to model observations as situations conforms to the observation pattern developed by Blomqvist.
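A single observation modeled this way can be sketched as a handful of subject-predicate-object triples, here written as plain Python tuples. The `ex:` individuals and the helper `objects` are hypothetical and exist only for this illustration. The relation to the result is written as DUL:isSettingFor, which is the property name found in DUL.owl.

```python
# Prefixed names are kept as plain strings; no RDF library is involved.
triples = {
    ("ex:observation1", "rdf:type", "Observation"),
    ("ex:observation1", "DUL:includesEvent", "ex:stimulus1"),
    ("ex:observation1", "DUL:includesObject", "ex:sensor1"),
    ("ex:observation1", "DUL:isSettingFor", "ex:result1"),
    ("ex:observation1", "DUL:satisfies", "ex:procedure1"),
}


def objects(subject: str, predicate: str) -> list:
    """Return all objects linked to a subject by a predicate, sorted."""
    return sorted(o for s, p, o in triples if s == subject and p == predicate)


stimuli = objects("ex:observation1", "DUL:includesEvent")
sensors = objects("ex:observation1", "DUL:includesObject")
results = objects("ex:observation1", "DUL:isSettingFor")
procedures = objects("ex:observation1", "DUL:satisfies")
```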

Observed Properties

ObservedProperty is defined as a subclass of DUL:Quality. Types of properties, such as temperature or pressure, should be added as subclasses of ObservedProperty rather than as individuals. A new relation called SSO:isPropertyOf is defined as a subrelation of DUL:isQualityOf to relate a property to a feature of interest.
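The choice of subclasses over individuals can be pictured with an analogy to Python classes. This is only an analogy under stated assumptions: the class `ObservedProperty` below is a stand-in for the SSO class, `Temperature` and `Pressure` are example property types, and the `ex:` identifiers are hypothetical.

```python
class ObservedProperty:
    """A quality that inheres in one feature of interest."""

    def __init__(self, is_property_of: str) -> None:
        # Mirrors SSO:isPropertyOf, pointing at the feature of interest.
        self.is_property_of = is_property_of


class Temperature(ObservedProperty):
    """A type of property, modeled as a subclass."""


class Pressure(ObservedProperty):
    """Another type of property, also modeled as a subclass."""


# Individuals are particular qualities of particular features.
kitchen_temperature = Temperature(is_property_of="ex:kitchen")
cellar_temperature = Temperature(is_property_of="ex:cellar")
```

One reading of this design choice is that each feature of interest gets its own temperature individual, whereas a single individual named temperature would have to be the quality of every feature at once.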

Features of Interest

Features of interest can be events or objects, but not qualities or abstracts. This avoids complex questions such as whether there are qualities of qualities. The need to introduce properties for qualities is an artifact of reification and confuses qualities with features or observations. For instance, accuracy is not a property of a temperature but a property of a sensor or an observation procedure.

Procedure

Procedure is defined as a subclass of DUL:Method, which in turn is a subclass of DUL:Description. Consequently, procedures are expressed by some DUL:InformationObject such as a manual or a scientific paper.

Result

The SSO Result class is modeled as a subclass of DUL:Region. A concrete data value is introduced using the data property DUL:hasRegionDataValue in conjunction with some xsd data type.
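How a result carries its data value can be sketched by writing the corresponding statement in Turtle-like notation. The helpers `typed_literal` and `result_statement`, the `ex:result1` individual, the value 21.5, and the choice of `xsd:double` are all assumptions made for this example; the prefixes are assumed to be declared elsewhere.

```python
def typed_literal(lexical: str, datatype: str) -> str:
    """Format a typed literal, escaping backslashes and double quotes."""
    escaped = lexical.replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"^^{datatype}'


def result_statement(result_id: str, lexical: str, datatype: str) -> str:
    """Attach a data value to a result via DUL:hasRegionDataValue."""
    literal = typed_literal(lexical, datatype)
    return f"{result_id} DUL:hasRegionDataValue {literal} ."


statement = result_statement("ex:result1", "21.5", "xsd:double")
```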