General considerations for the Web of Things

Whilst it is unlikely that there will be one architecture that suits all purposes, it is worth considering some of the ideas that have emerged so far. The following introduces some of the challenges, and readers are advised to look at related work for more details.

Services

Services provide general building blocks for the Web of Things.

- Services as a source of data, e.g. when they are bound to sensors

- Services as a sink of data, e.g. when they are bound to actuators

- Services may process data obtained from other services

In general, a service could play any combination of the above roles. An example is a service for a camera where you can command the camera to pan and zoom, to enable or disable the flash, and to take a photo. In this case, the service takes commands as input and generates photos as output. Another service could take photos as its input and generate a list of tags for the people present in each photo.

Services can provide zero or more data sources, and zero or more data sinks, but at least one source or sink is required. For services bound to actuators, the sink could be for commands or data, e.g. a service for a digital picture frame that allows you to push pictures to the frame.
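As a rough illustration of the rule above, a service can be modelled as a named set of sources and sinks, with a check that at least one of them is present. The `Service` class and its field names are assumptions made for this sketch, not part of any standard.

```python
from dataclasses import dataclass, field


@dataclass
class Service:
    """A service with named data sources (outputs) and data sinks (inputs)."""

    name: str
    sources: list[str] = field(default_factory=list)
    sinks: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # At least one source or sink is required.
        if not self.sources and not self.sinks:
            raise ValueError(f"service {self.name!r} needs at least one source or sink")


# The camera accepts commands (a sink) and produces photos (a source).
camera = Service("camera", sources=["photos"], sinks=["commands"])

# The digital picture frame only consumes data pushed to it.
picture_frame = Service("picture-frame", sinks=["pictures"])
```

Constructing `Service("empty")` with neither a source nor a sink raises `ValueError`.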

For scalability, the services are likely to run on Internet platforms, whilst the sensors and actuators are at the edge of the network. Web protocols such as HTTP can be used to connect services to the sensors and actuators, but complications arise as many devices will be behind security firewalls, and may require a bridge between the Web protocols and the communications technologies supported by devices.

Services can process data to alter the level of abstraction, e.g. taking an audio stream of spoken speech as input and generating a sequence of words as output, i.e. more abstract. In the reverse, the output (an audio stream) is more concrete than the input (a sequence of words). A further possibility is to transform data at the same level of abstraction, e.g. changing the units of measure.

In an open market of services, some companies could develop and market services for others to purchase and install. An example is a service for using web cams for home surveillance, where the user purchases a camera from one supplier and the service software from another. It is necessary to distinguish the definition of a service from its instantiation for a particular purpose.

Applications and Services

An application is something that end users can directly interact with. For Web applications, this is via a Web browser. Applications can make use of services, for example, you could have a web page that shows the predicted time for someone to commute to work based upon services that sense traffic speeds.

Service Definitions

Services are essentially a mix of metadata describing the service, code that defines the service's behaviour, and data that the service consumes or produces. The metadata includes such things as who owns the service, the type definitions of the APIs exposed by the service, access control policies, and so forth. The code could be written in a variety of languages according to what the platform supports, e.g. Java, JavaScript or Python.
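To make the split between metadata and code more concrete, here is a hypothetical service definition expressed as a plain Python dictionary, together with a small check that the expected metadata is present. The key names (`owner`, `api`, `access_policy`, `entry_point`) and the values are invented for illustration; a real platform would define its own schema.

```python
# Hypothetical service definition: metadata plus a pointer to the code.
service_definition = {
    "metadata": {
        "owner": "alice@example.org",
        "api": {
            "properties": {"temperature": "number"},
            "actions": {"reset": []},
        },
        "access_policy": ["owner", "family"],
    },
    "code": {"language": "python", "entry_point": "thermostat.py"},
}

REQUIRED_METADATA = ("owner", "api", "access_policy")


def missing_metadata(definition: dict) -> list[str]:
    """Return the required metadata keys that the definition lacks."""
    metadata = definition.get("metadata", {})
    return [key for key in REQUIRED_METADATA if key not in metadata]
```

A platform could run a check of this kind when a service definition is registered, and reject definitions for which `missing_metadata` returns a non-empty list.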

Protocols or Objects

A common approach is to host services on servers and to provide access to the service via a protocol like HTTP. This allows for a scalable distributed architecture. However, developers will in many cases prefer to avoid having to explicitly work with HTTP. A natural alternative is to expose the service as interfaces on an object that acts as a proxy for the service. You can then access a service with a simple method call, rather than having to set up an HTTP connection, prepare the request headers, open a TCP/IP connection to send the request and so forth.

The code that implements these proxy objects could be automatically generated from the service description. The proxy objects can be used in web applications, or in services composed from other services. For a Web application the proxy objects can be provided as part of a JavaScript library. For services that run in the cloud, the platform for the Web of Things could automatically expose the proxy objects to the scripts implementing the service's behaviour, based upon the type declarations given in the service description. In other words, when defining the service, you declare what other services you want to use, and the platform provides a means to bind to these services when the service starts running. Your code can then access the properties and methods on the proxy objects.

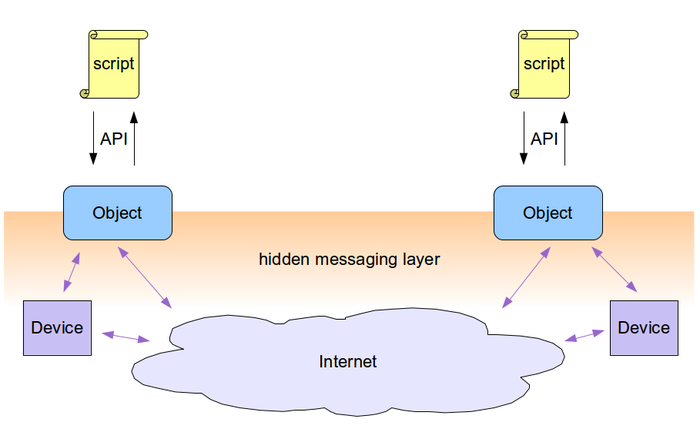

This illustrated by the following diagram:

The scripts here are either part of a web application or part of a cloud-based service. The hidden messaging layer decouples scripts from the details of how devices or other services are accessed. A convenient implementation technology for services using JavaScript is Node.js.
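The proxy idea can be sketched in a few lines. The following is a minimal sketch, assuming a service description that simply lists method names and a transport function that stands in for the hidden messaging layer; `ServiceProxy`, `fake_transport` and the description format are all hypothetical.

```python
from typing import Any, Callable

# Hypothetical transport signature: (service name, method name, arguments) -> result.
Transport = Callable[[str, str, tuple], Any]


class ServiceProxy:
    """Exposes the methods named in a service description as ordinary method calls."""

    def __init__(self, description: dict, transport: Transport) -> None:
        self._name = description["name"]
        self._methods = set(description["methods"])
        self._transport = transport

    def __getattr__(self, method: str) -> Callable[..., Any]:
        # Only reached for attributes not found normally, i.e. remote methods.
        if method.startswith("_") or method not in self._methods:
            raise AttributeError(method)
        return lambda *args: self._transport(self._name, method, args)


def fake_transport(service: str, method: str, args: tuple) -> Any:
    # Stand-in for the messaging layer; a real one might use HTTP or Web Sockets.
    return f"{service}.{method}{args}"


camera = ServiceProxy(
    {"name": "camera", "methods": ["pan", "zoom", "take_photo"]},
    fake_transport,
)
```

A script can then call `camera.pan(30)` without knowing how the request reaches the device, and swapping `fake_transport` for a different transport requires no change to the script.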

A multiplicity of evolving communication technologies

There are many different technologies for connecting devices, and the list keeps changing as new technologies are introduced. This makes it desirable to have an architecture that hides details that are best dealt with at a lower level.

Battery operated devices

For sensors and actuators that are battery operated, battery life is an important consideration. Protocols like ZigBee prolong battery life by only powering up the communications system at agreed intervals. This approach can be used for sensor networks, where devices cooperate to pass data between them. Gateway devices serve as a bridge to the Internet.

Versioning of APIs

The lifetime of devices will vary considerably according to the kind of device and the context in which they are used. This makes it inevitable that services will need to cope with different versions of APIs exposed by the devices. How can developers create applications and services that work with yesterday's devices, today's devices and tomorrow's devices? This isn't just about device APIs, as change in services is also inevitable in a large and dynamic ecosystem. In general, this is a hard problem to address.

On the Web, developers have found ways to test for the existence of a given API by testing for the presence of a named property, or by attempting to invoke a method and catching the exception that is raised if the API is unsupported. This approach can fail if the APIs evolve in ways that old code failed to anticipate.
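Both detection styles can be shown with a small example. This sketch uses Python's `hasattr` and `AttributeError` in place of the equivalent JavaScript idioms, and the two thermostat classes and their method names are invented for illustration.

```python
class OldThermostat:
    """An older device that only reports Celsius."""

    def read_celsius(self) -> float:
        return 21.5


class NewThermostat(OldThermostat):
    """A newer device that adds a Kelvin reading."""

    def read_kelvin(self) -> float:
        return self.read_celsius() + 273.15


def kelvin_by_property_test(device) -> float:
    # Test for the presence of a named property before using the newer API.
    if hasattr(device, "read_kelvin"):
        return device.read_kelvin()
    return device.read_celsius() + 273.15


def kelvin_by_exception(device) -> float:
    # Attempt the call and fall back if the API is unsupported.
    try:
        return device.read_kelvin()
    except AttributeError:
        return device.read_celsius() + 273.15
```

Neither function helps if a future device keeps the name `read_kelvin` but changes its meaning, which is the failure mode noted above.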

Another approach is to associate each version of an API with a unique identifier, and to provide a means to query what versions are available, and a means to bind to a specific version of the API. This is the approach taken in Microsoft's COM -- the object model upon which ActiveX and OLE (Object Linking and Embedding) are based. For an introduction, see:

Versioning is related to the challenge of adapting to variations in device capabilities. In principle, a device (or service) could be described with machine interpretable metadata. In practice there is a risk that the metadata doesn't provide an accurate account. Developers may apply caution by directly probing to see if a given capability is present rather than relying on the metadata.
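Returning to the identifier-based approach to versioning, the idea of querying for available versions and binding to one of them can be sketched as follows. This is loosely inspired by the query-then-bind pattern and is not COM's actual API; `VersionedDevice`, `bind_first` and the `urn:example:` identifiers are hypothetical.

```python
class VersionedDevice:
    """Maps unique version identifiers to interface objects."""

    def __init__(self) -> None:
        self._interfaces: dict[str, object] = {}

    def register(self, version_id: str, interface: object) -> None:
        self._interfaces[version_id] = interface

    def versions(self) -> list[str]:
        # Query which versions of the API are available.
        return sorted(self._interfaces)

    def bind(self, version_id: str) -> object:
        # Bind to one specific version, or fail clearly.
        try:
            return self._interfaces[version_id]
        except KeyError:
            raise LookupError(f"unsupported interface version: {version_id}") from None


def bind_first(device: VersionedDevice, preferred: list[str]) -> tuple[str, object]:
    """Bind to the first version in the caller's preference order that the device offers."""
    available = device.versions()
    for version_id in preferred:
        if version_id in available:
            return version_id, device.bind(version_id)
    raise LookupError("no mutually supported interface version")


class LampV1:
    def switch(self, on: bool) -> str:
        return "on" if on else "off"


lamp = VersionedDevice()
lamp.register("urn:example:lamp:v1", LampV1())

# A client that prefers v2 falls back to v1 on an older device.
chosen, interface = bind_first(lamp, ["urn:example:lamp:v2", "urn:example:lamp:v1"])
```

Because each version has its own identifier, a device can continue to offer older interfaces alongside newer ones, and a client never silently receives an interface it was not written for.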

Scaling issues

Imagine a device exposes a service via HTTP, allowing other devices to connect to it over a network connection. If the service becomes very popular the embedded server will have difficulty in handling the volume of connection requests. This problem can be avoided through the use of proxy servers which can be scaled to match the load.

More generally, services can be cloud-based. That is to say, the service definitions are registered with a cloud-based platform that provides for scalable run-time execution of services. You can have a federated architecture with services running on servers operated by different vendors. This relies on open standards as a basis for interoperability.

Security firewalls

Sensors and actuators will often be placed behind security firewalls to prevent unauthorized access. A common example is provided by home gateways that block incoming connections from outside of the local network, and which serve as network address translators (NAT). If you want to establish peer to peer connections for devices hidden behind their own firewalls, a third party may be essential. APIs for configuring firewalls present a high security risk.

Related standards include support for establishing tunnels through NATs:

- STUN (Session Traversal Utilities for NAT) Wikipedia article

- RFC 5389 which defines the STUN protocol

Policy frameworks

Policies are needed to control access to devices, and to safeguard personal privacy. Policies determine who can access a service, for what purposes, and in which contexts. Sticky policies remain associated with the data to which they apply, so that the policies can still be enforced when the data is moved from one location to another. Policies can be expressed as rules which can be applied dynamically when needed. Another approach is to provide capability tokens that a would-be user of a service has to present to gain access. There may be a need to provide audit trails as a means to support accountability. In some use cases, there is a need to be able to override policies when the appropriate situation arises, e.g. in an emergency and under the direction of police or fire officials.
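A rule-based policy check with an audit trail and an emergency override might look something like the following. It is a minimal sketch under the assumption that a request is described by user, service, purpose and context; the class names and example values are invented, and a real policy engine would be considerably richer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    user: str
    service: str
    purpose: str
    context: str


@dataclass(frozen=True)
class Rule:
    """Grants one user access to one service for given purposes and contexts."""

    user: str
    service: str
    purposes: frozenset
    contexts: frozenset

    def permits(self, request: Request) -> bool:
        return (
            request.user == self.user
            and request.service == self.service
            and request.purpose in self.purposes
            and request.context in self.contexts
        )


# Every decision is recorded to support accountability.
audit_log: list[tuple[Request, bool, bool]] = []


def is_allowed(request: Request, rules: list[Rule], emergency_override: bool = False) -> bool:
    allowed = emergency_override or any(rule.permits(request) for rule in rules)
    audit_log.append((request, allowed, emergency_override))
    return allowed


rules = [
    Rule("nurse", "heart-monitor", frozenset({"care"}), frozenset({"on-shift"})),
]
routine = Request("nurse", "heart-monitor", "care", "on-shift")
marketing = Request("nurse", "heart-monitor", "marketing", "on-shift")
```

Here `is_allowed(routine, rules)` succeeds and `is_allowed(marketing, rules)` is refused, while passing `emergency_override=True` grants access but leaves a record of the override in `audit_log`.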

XACML

The extensible access control markup language (XACML) is a declarative XML format for access control rules that has been standardized by OASIS. XACML enables a separation of the access decision from the point of use.

The EU PrimeLife project focused on privacy, and explored extensions to XACML to cover data handling requirements. Privacy policies specify the purposes that personal data can be used for, and for how long personal data can be retained.

OAuth

A widespread example of capability tokens on the Web is the OAuth framework for secure authorization. It allows a user of a website to grant that website temporary and limited access to personal resources on another server, and avoids the need to share user passwords across servers. The "session fixation" security flaw in OAuth 1.0 (RFC 5849) led to the development of OAuth 2.0 (RFC 6749) which relies on SSL/TLS for confidentiality and server authentication. OAuth 2.0 itself is considered to have weak security, in part due to implementation issues around URL redirection. More details are given in a post by Egor Homakov.

- Wikipedia article on OAuth

- OAuth.net

- RFC 5849 -- The OAuth 1.0 Protocol

- RFC 6749 -- The OAuth 2.0 Authorization Framework

- Egor Homakov: OAuth1, OAuth2, OAuth...?
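The general notion of a capability token with limited scope and lifetime can be illustrated without any OAuth machinery. The sketch below is not OAuth and not a recommended token format; it simply signs a scope and expiry time with a shared secret using Python's standard `hmac` module. The function names, the token layout and the secret are assumptions made for the example.

```python
import base64
import hashlib
import hmac
import json

# Demonstration secret only; a real service would keep this private and rotate it.
SECRET = b"demo-secret-not-for-production"


def issue_token(scope: str, expires_at: float) -> str:
    """Return a token granting `scope` until the time `expires_at`."""
    payload = json.dumps({"scope": scope, "exp": expires_at}, sort_keys=True).encode()
    signature = hmac.new(SECRET, payload, hashlib.sha256).digest()
    return (
        base64.urlsafe_b64encode(payload).decode()
        + "."
        + base64.urlsafe_b64encode(signature).decode()
    )


def check_token(token: str, required_scope: str, now: float) -> bool:
    """Accept the token only if it is authentic, in scope and not expired."""
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:
        return False  # malformed token
    expected = hmac.new(SECRET, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return False  # payload was altered or signed with another key
    claims = json.loads(payload)
    return claims["scope"] == required_scope and now < claims["exp"]
```

The holder of such a token never learns the user's password, and the access it confers ends when the expiry time passes, which are the two properties highlighted above.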

Conflicts of interest

Data should not be made available where there is a known conflict of interest, e.g. where a breach would give an unfair commercial advantage. To prevent this from happening, the data needs to be tagged in such a way that the policy engine can detect that a given usage request would result in a conflict of interest. The engine needs to be able to track provenance across service compositions, and to reason over organizational structure and roles. This can be based upon machine interpretable descriptions.
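One simple way to picture the tagging and provenance tracking is to label each data item with the organizations it originates from, and to let derived data inherit the labels of its inputs. The following sketch makes that assumption; the organization names, the `CONFLICTS` table and the function names are hypothetical, and a real engine would also need to reason over roles and organizational structure.

```python
# Hypothetical pairs of organizations with a known conflict of interest.
CONFLICTS = {frozenset({"AcmeCars", "BoltMotors"})}


def derive(*inputs: frozenset) -> frozenset:
    """The provenance of derived data is the union of its inputs' provenance."""
    return frozenset().union(*inputs)


def may_access(requester_org: str, provenance: frozenset) -> bool:
    """Refuse access if any origin of the data conflicts with the requester."""
    return not any(
        frozenset({requester_org, origin}) in CONFLICTS for origin in provenance
    )


sales_data = frozenset({"AcmeCars"})
weather_data = frozenset({"MetOffice"})

# A composed service that combines both inputs inherits both origins.
report = derive(sales_data, weather_data)
```

With these tags, `may_access("BoltMotors", report)` is refused even though the request is made against a derived report rather than the original sales data.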

Static code analysis techniques may be feasible in some cases for verifying adherence to conflict of interest policies. Note that this breaks down for applications and services that are not under the control of the policy engine. Daniel Hedin and Andrei Sabelfeld have carried out an analysis of Information-Flow Security for a Core of JavaScript (April 2012).

Faults and Deceits

With any really large system, the risk of components failing increases to the point where there is a need to function in the presence of faults. How are faults detected? What are the workarounds? How are the implications of faults communicated to end-users? Enemies could attack a system, introducing false data, and causing subsystems to fail. Are there techniques that can be used to monitor operation and trigger alarms when evidence of attacks is discovered? Is it possible to shut down corrupted subsystems and provide workarounds using components that are still deemed to be healthy? One potentially useful metaphor is the human body's immune system.

Personal Zones

Users are likely to purchase devices, applications and services from many different vendors. This makes it desirable to provide users with a means to manage their personal devices, applications and services in a way that works seamlessly across vendors. The Webinos project has explored this in terms of a Personal Zone in which each of your devices includes an embedded agent (the Personal Zone Proxy) that integrates the device into the zone. Trusted web applications have access to a suite of APIs exposed by the zone. This includes the means to discover and bind to services on devices within the zone. The Personal Zone Hub exposes the zone on the Internet, enabling inter-zone applications and services, and in principle, putting you back in control over your personal data. Webinos also supports Internet of Things devices, and these are required to embed a Personal Zone Proxy, or be accessed via a device that does. Webinos demos include using a phone to control a TV, home automation and home healthcare.

Proprietary marketplaces like Google Play approach some of these needs for personal devices running the Android operating system. When you sign into the Google Play website you can see which of your registered devices are compatible with apps available from the marketplace. However, users are already concerned with the amount of personal information held by Google, and this together with Google's proprietary focus on Android is likely to limit Google extending its scope to cover devices that aren't running Android.

Browser sync is another step towards the evolution of Personal Zones. Browser vendors like Mozilla allow users to sync personal data across all of their devices with the Firefox browser. This currently covers bookmarks, history, passwords and open tabs. Synchronization is performed via the cloud, but the data is held in an encrypted form, and the key never leaves the browser. The more general capabilities of Personal Zones include services that run on behalf of the user and independently of browsers. Effective security (and privacy) will depend on defense in depth. This points to the need for further work on distributed authorization frameworks where security breaches will be limited in the damage they can cause.

Perception, Actuation and Coordination

We can learn a lot by studying how animals (and humans) deal with perception and actuation. An example is how we understand spoken utterances using a pipelined process where one stage feeds into another: sound waves, phones, phonemes, words, sentences, resolution of references, and cognition. This involves making inferences at one level based upon uncertain data provided at a lower level and taking the context into account. Such processes are also associated with learning mechanisms that allow us to learn new words and their meaning. For the Web of Things, this corresponds to service compositions, where one service builds on top of others, creating progressively higher level interpretations of data. Learning processes correspond to data mining algorithms, and may operate over very large amounts of data (Big Data).

A similar analogy applies to actuation, where a high level intent is progressively transformed into lower level commands for actuators, often involving careful coordination across many actuators. The act of speaking is a perfect example, where your thoughts are translated into commands for driving a large set of muscles. Some react quickly, e.g. the tip of your tongue, while others like those positioning your jaw take much longer and need to be set into motion further in advance. Actuators could expose APIs for invoking commands, or for uploading scripts for local execution. The actuation pipeline corresponds to a composition of services which accept higher level commands and map them into lower level ones.

Note: one approach to coordination is to determine relative clock skews for actuators, and to delay actuation to ensure that all of the actuators involved are driven in synchrony. Accuracies of ten milliseconds have been achieved in practice using Web technologies such as Web Sockets.
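The note above does not say how the clock skews are determined. One common technique, shown here as an assumption rather than as the method actually used, is a round-trip timestamp exchange of the kind used by NTP: the coordinator records when it sends a probe and receives the reply, the actuator records when it receives the probe and sends the reply, and the offset is estimated on the assumption that network delay is symmetric.

```python
def estimate_offset(t0: float, t1: float, t2: float, t3: float) -> float:
    """Estimate (actuator clock - coordinator clock) from one round trip.

    t0: coordinator time when the probe is sent
    t1: actuator time when the probe is received
    t2: actuator time when the reply is sent
    t3: coordinator time when the reply is received
    Assumes the network delay is the same in both directions.
    """
    return ((t1 - t0) + (t2 - t3)) / 2.0


def local_fire_time(target_coordinator_time: float, offset: float) -> float:
    """Convert a shared target time into the reading on the actuator's own clock."""
    return target_coordinator_time + offset


# Worked example: the actuator's clock runs 0.25 s ahead and each
# network hop takes 0.01 s. The actuator replies immediately.
offset = estimate_offset(t0=100.0, t1=100.26, t2=100.26, t3=100.02)

# The coordinator asks every actuator to fire at its time 105.0.
fire_at = local_fire_time(105.0, offset)
```

Each actuator then waits until its own clock reaches its `fire_at` value, so that actuators with different skews act at the same moment. Asymmetric network delay shows up directly as error in the estimate, which is one reason to average over several round trips.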

Coordination involves a timeline. This is about agreements on how variables change with time. This includes transitions where a variable follows some function over time that the device can compute, e.g. a linear or spline function. The rendered frame rate will depend on the speed of the device. A related concept is time warping, where time is sped up, or slowed down.
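A linear transition and a simple time warp can be expressed as ordinary functions of time. In this sketch, which uses invented function names, a transition is agreed once and each device evaluates it locally at whatever frame rate it can manage.

```python
from typing import Callable


def linear_transition(
    start_value: float, end_value: float, start_time: float, duration: float
) -> Callable[[float], float]:
    """Return a function giving the variable's value at any time t."""

    def value_at(t: float) -> float:
        if duration <= 0:
            return end_value
        # Clamp progress so the value holds steady before and after the transition.
        progress = min(max((t - start_time) / duration, 0.0), 1.0)
        return start_value + (end_value - start_value) * progress

    return value_at


def warp(rate: float, origin: float = 0.0) -> Callable[[float], float]:
    """Return a mapping from real time to warped time; a rate above 1 speeds time up."""
    return lambda t: origin + (t - origin) * rate


# Raise a lamp from 0% to 100% brightness over 4 seconds, starting at t = 10.
brightness = linear_transition(0.0, 100.0, start_time=10.0, duration=4.0)

# Play the same timeline at double speed from t = 10 onwards.
double_speed = warp(2.0, origin=10.0)
```

A fast device might sample `brightness(t)` sixty times a second and a slow one only five times, yet both follow the same agreed curve. Composing the two functions, as in `brightness(double_speed(t))`, completes the transition in half the real time.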

Some related standards include: