Warning:

This wiki has been archived and is now read-only.

TestSuite

In general, the test suite should NOT define performance or conformance tests.

### Example of a test suite:

http://www.w3.org/2008/webapps/ElementTraversal/index.html

### Collected requirements:

- The test suite should cover every feature with at least one test. Tests that combine features would also be useful (e.g., combining a getParam call with filtering on its result). [1]

- The test suite should include both positive and negative tests (e.g., for getCreator: what happens if there are duplicate entries, and what happens if there are none?). [1]

- The test suite should define the expected behaviour of the API on specific test data, which is important for reproducing the tests [1] (e.g., what happens with broken metadata?)

- The test suite should take into account how metadata is extracted from different sources [1] (e.g., a YouTube clip has metadata on its own server, but may also reference metadata held on other servers.)

- The test suite should run automatically and cover everything in the specification that is tagged with MUST [1]

- The test suite should be executable on both the server side and the client side [1]

- A single test in the test suite should be atomic [3]

- Each test must be passed by two implementations in order for the specification to advance to CR [3]
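To make the requirements above more concrete (atomic tests, positive and negative cases on fixed test data, and the two-implementation rule), here is a minimal sketch of a harness. Everything in it is hypothetical: the test-data records, the test names, the stand-in for getCreator and the two sample implementations are illustrations only, and the expected results for duplicates and missing creators are assumptions, since the group has not yet decided them.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical, reproducible test data: each record is a fixed metadata
# description keyed by an identifier, so every implementation sees the same input.
TEST_DATA: Dict[str, dict] = {
    "single-creator": {"creator": ["Alice"]},
    "duplicate-creator": {"creator": ["Alice", "Alice"]},
    "no-creator": {},
}

# An implementation is modelled as a function returning the creators it finds
# in a record (a stand-in for getCreator, not the API's real signature).
Implementation = Callable[[dict], List[str]]

# Each atomic test pairs one piece of test data with one expected result.
TESTS: Dict[str, Tuple[str, List[str]]] = {
    "creator-positive": ("single-creator", ["Alice"]),
    # Assumption: duplicate entries are collapsed into one.
    "creator-duplicates": ("duplicate-creator", ["Alice"]),
    # Negative test; assumption: a missing property yields an empty list.
    "creator-none": ("no-creator", []),
}


def run_test(impl: Implementation, data_key: str, expected: List[str]) -> bool:
    """Run one atomic test; any exception counts as a failure."""
    try:
        return impl(TEST_DATA[data_key]) == expected
    except Exception:
        return False


def cr_status(impls: Dict[str, Implementation]) -> Dict[str, bool]:
    """Mark a test as satisfied when at least two implementations pass it."""
    status = {}
    for name, (data_key, expected) in TESTS.items():
        passes = sum(run_test(impl, data_key, expected) for impl in impls.values())
        status[name] = passes >= 2
    return status


# Two hypothetical implementations used to exercise the harness.
def impl_dedup(record: dict) -> List[str]:
    # Keeps first-seen order, drops duplicates, returns [] if the property is missing.
    return list(dict.fromkeys(record.get("creator", [])))


def impl_naive(record: dict) -> List[str]:
    # Returns the raw list and raises KeyError if the property is missing.
    return record["creator"]
```

With `impl_dedup` and `impl_naive` only `creator-positive` is passed by two implementations; the duplicate and missing-creator tests each have a single passing implementation, so they would block the move to CR until a second implementation agrees on the expected behaviour.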

### Ideas:

- Error codes / error handling [1] (exceptions for the methods have already been defined [2]). Florian thinks we should first define "error groups" (e.g., connection errors or metadata-based errors) and then come up with specific errors.

- Defining the test suite with RefTest (WebApps Example) [1]

- The test suite should only take 2 or 3 metadata formats into account [1]

- Setting up components to extract metadata from several sources [1]
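The error-group idea could be sketched as a two-level exception hierarchy: a small set of groups first (connection errors, metadata errors), with specific errors added underneath later. The class names, the `raises_group` helper and the JSON-based `parse_record` stand-in are all hypothetical and do not reproduce the exceptions already defined for the methods [2].

```python
import json


class MediaAnnotationError(Exception):
    """Hypothetical root of all errors raised by an implementation."""
    group = "generic"


# Group 1: problems reaching a metadata source.
class ConnectionGroupError(MediaAnnotationError):
    group = "connection"


class SourceUnreachableError(ConnectionGroupError):
    pass


class SourceTimeoutError(ConnectionGroupError):
    pass


# Group 2: problems with the metadata itself.
class MetadataGroupError(MediaAnnotationError):
    group = "metadata"


class BrokenMetadataError(MetadataGroupError):
    pass


class UnsupportedFormatError(MetadataGroupError):
    pass


def raises_group(func, group: str, *args) -> bool:
    """True if func(*args) raises an error of the given group.

    Returns False if func returns normally; exceptions outside the
    hierarchy are not caught and propagate to the caller.
    """
    try:
        func(*args)
    except MediaAnnotationError as exc:
        return exc.group == group
    return False


def parse_record(raw: str) -> dict:
    """Hypothetical parser: JSON stands in for a real metadata format."""
    try:
        return json.loads(raw)
    except json.JSONDecodeError as exc:
        raise BrokenMetadataError(str(exc)) from exc
```

A negative test such as "broken metadata" can then assert only the group (`raises_group(parse_record, "metadata", "not json")`), so it stays valid while the specific error codes are still being decided.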

### Links:

[1] http://www.w3.org/2009/11/06-mediaann-minutes.html#item11 (minutes, TPAC 2009, Day 2)

[2] http://www.w3.org/2009/11/24-mediaann-minutes.html (minutes, 24 November 2009)

[3] http://www.w3.org/2009/12/08-mediaann-minutes.html (minutes, 8 December 2009)

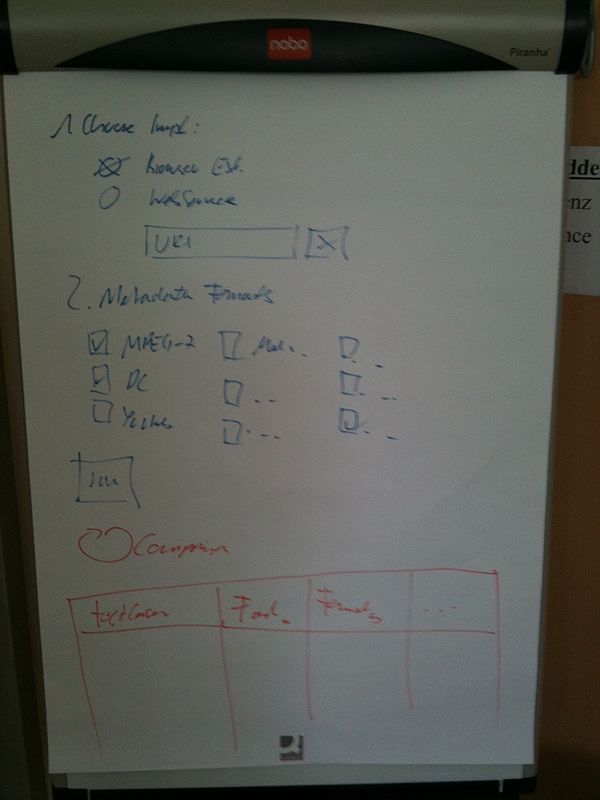

### Flip chart drawings from Vienna F2F

### Flip chart drawings from Graz F2F