Workshop Report

W3C organized a workshop on Video on the Web in December 2007, hosted by Cisco Systems, in order to share current experiences and examine the technologies. 42 position papers were submitted for the Workshop and 37 organizations attended the event from a wide range of applications: content producers, network companies, research institutes, hardware vendors, video platforms, browser vendors, users, etc. The meeting was hosted in San Jose, California and Brussels, Belgium, with both locations linked with high definition video.

1. Summary

Online video content and the demand for it are increasing rapidly, and the trend is expected to continue for at least a few years. Workshop participants gathered to share current experiences and to examine the technologies. Some of the key issues participants discussed related to:

- Metadata: searching and discovering video is difficult with the volume of online video. Having data about data, whether those are automatically generated or manually added, is important, especially when considering the value added by metadata provided by users.

- Video codec: several W3C Working Groups are struggling with the issue of recommending a baseline video codec for the World Wide Web. W3C should continue to investigate the existing video codecs.

- Addressing: in order to make video a first-class object on the World Wide Web, one should be able to identify spatial and temporal clips. Global identifiers for clips would bring substantial benefits for linking, bookmarking, caching and indexing. None of the existing solutions is fully satisfactory or provides a unique resource identifier for clips.

- Content protection: many content producers feel that the success of video online is tied to the ability to manage digital rights associated with the media. While W3C will not investigate the issue of enforcement, it should look into metadata for digital rights.

The Workshop was informative and energetic, and it is anticipated that the W3C Team will work with the Membership to propose a new W3C Activity to continue studying video on the Web, including, for example, Guidelines for publishing and deploying online video.

2. Introduction

Online video has become omnipresent on the Web in recent years. YouTube, launched in 2005, represented 4% of traffic on high-speed Internet lines on the Comcast network by the end of 2006. Online video appeals to the Web audience as it lets people go beyond the capabilities of traditional television: more people can distribute video ("the long tail") and social networking allows others in the community to comment, and even reply with other video. The number of user-generated video uploads per day is expected to go from 500,000 in 2007 to 4,800,000 in 2011 (Transcoding Internet and Mobile Video: Solutions for the Long Tail, IDC, September 2007). The demand for online video content also keeps increasing. Recent studies indicate that the number of U.S. internet users who have visited a video-sharing site increased by 45% in 2007, and the daily traffic to such sites has doubled (PEW Internet Project Data Memo, PEW/Internet, January 2008). Expectations are quickly evolving to the point where people want to publish and view videos at any time and from any device. These rapid changes are posing challenges to the underlying technologies and standards that support the platform-independent creation, authoring, encoding/decoding, and description of video. To ensure the success of video as a "first class citizen" of the Web, the community needs to build a solid architectural foundation that enables people to create, navigate, search, and distribute video, and to manage digital rights.

W3C has been involved in the area of Video on the Web since 1996, and organized two workshops around this in the past: Real Time Multimedia and the Web (October 1996) and Television and the Web (June 1998). SMIL (Synchronized Multimedia Integration Language), first published in June 1998, provides support for embedding audio and video content. W3C continues to work on the integration of video with other media, synchronized video content in graphics with SVG (Scalable Vector Graphics), and direct support for video and audio in Web browsers with the proposal for a video element in HTML 5.

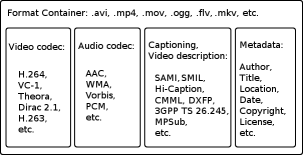

3. Metadata

Workshop participants expressed great interest in the subject of metadata for video and audio content. This was the topic that generated the most discussion at the Workshop, with use cases coming from several perspectives. The major use cases for adding metadata were content labeling, search, and discovery. Anticipating the increase in online video and audio in the upcoming years, participants observed that it will become proportionally more difficult for viewers to find content. It was noted that metadata comes from several sources: content creators, publishers, third parties, or users. Some of the metadata will be automatically produced (face, text or object recognition) and some will be manually added. Some of the metadata will be part of the content (in the format container) and some will be external to it. Adding user-generated metadata can tremendously increase the value of the content. Of course, the quality of such metadata will vary widely, especially in non-trusted environments. There was a wide range of interest in adding specific metadata: title, author, year, cast, city, digital rights, ratings, tagging, etc. It was pointed out that multiple video formats can be available for the same video, at different data rates. Also, the availability of the metadata will depend on the delivery chain and the player used. For example, transcoding often means that the metadata will get dropped.

Several more or less complex solutions exist in this domain: MPEG 7, SMIL, iTunes XML, Yahoo! MediaRSS, CableLabs VOD Metadata Content, etc. Not many online video distributors are making use of metadata or facilitating its use by others, either in the professional world or in the world of user-generated video. Despite the general interest in metadata, no clear direction came from the workshop. Given its Semantic Web activity, the W3C should continue to investigate this area, while taking into account the existing solutions.

4. Video codec for the Web

The issue of a video codec has been around for a few years (for example, the SMIL 3 specification attempts to recommend a baseline video codec based on SMIL 1 and 2 experience) but surfaced more prominently in relation to HTML 5. The HTML 5 Working Draft, which includes a proposal for embedding and controlling audio and video content, has attracted a lot of attention on the choice of video codec. The HTML Working Group seems inclined to recommend a baseline video codec, but there is not yet consensus on which codec to recommend. The Group has elaborated a number of requirements, such as compatibility with the open source development model or no additional submarine patent risk (see section 3.14.7.1, Video and audio codecs for video elements, HTML 5), some of which may be incompatible.

Several existing video codecs were discussed by the Workshop participants. H.264 has been adopted by much of the industry. The mobile industry has adopted H.264, at least in 3GPP/3GPP2 standards. Adobe recently announced support for H.264 in their Flash product. However, H.264 has licensing requirements that are incompatible with open source requirements and is not a royalty-free codec (which makes it incompatible with the goals of the W3C Patent Policy). Until recently, the HTML 5 specification suggested that browser implementations support the Theora codec. There are currently uncertainties about the IPR requirements surrounding the Theora codec, and organizations are concerned about taking additional submarine patent risks. Of course, one can claim that not all risks are known for the H.264 video codec either. It should also be noted that several patents around these video technologies expire every year. Other codec candidates to consider are Dirac, VC-1, or older codecs such as H.261 or H.263. Video codecs keep evolving and today's codec will be different from tomorrow's codec. The BBC submitted the intra-only portion of Dirac (“Dirac 1.0.0”) to SMPTE and it is expected to become a standard in the next few months, as “VC-2”. The BBC claims that it is suitable for professional applications. The BBC also released the full version of Dirac (“Dirac 2.1.0”) on 24 January 2008, which includes motion compensation and is suitable for broadcasting and streaming. The Joint Video Team (JVT) of ISO/ITU released a scalable profile of H.264 in the spring of last year and started the work on H.265.

For large video content producers, the end user experience is more important than the goal of a single baseline codec. The end user experience includes widely available players, codecs whose functionality provides a good viewing experience, and other issues related to ease of use. Most will take Flash Video for granted in fact, or will deploy their own video player, using a codec that fits the desired quality of the content (such as the VP7 On2 codec). Individual content producers — the home video crowd — still suffer from the lack of a universally supported set of codecs, which makes it challenging to publish video on the Web and to be sure that almost anyone can view it in commonly available browsers. One should be able to publish a video the same way one publishes an image nowadays. The sentiment shared among several participants was that W3C should gather the relevant parties and continue to explore the issue. The participants of the Workshop did not discuss issues associated with audio codecs, mostly due to the predominant concerns with video codecs. Analogous audio codec issues also merit investigation.

5. Addressing into Video content

In order to make video and audio real first-class objects on the Web, one should be able to link from and to media, the same way authors can make hyperlinks between Web pages. Such linking also enables other use cases, such as video highlights, search results, mashing, or caching, and it provides an identifier that can then be reused to attach metadata. The discussion covered spatial and temporal addressing, as well as a combination of the two.

5.1 Temporal Addressing

Temporal addressing provides the ability to reference a time point, or a segment of time, in video and audio content: a normal play time (or time offset), a frame-based time, or an absolute time. It allows the media player to jump to the specified time or frame, or to play only a segment of the file. RFC 2326 (RTSP) defines the notion of normal play time, the stream absolute position relative to the beginning of the video. SMPTE time codes (SMPTE 12M-1999 Television, Audio and Film - Time and Control Code) define the notion of frame-level accuracy. Absolute time uses UTC.
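
As a minimal sketch of the difference between the two relative time notions, the following Python converts a normal play time value and a frame-based SMPTE time code into seconds from the start of the media. The function names, the default frame rate of 25 frames per second, and the restriction to non-drop-frame time codes are assumptions made for illustration; they do not come from the Workshop or from either specification.

```python
def npt_to_seconds(npt: str) -> float:
    """Convert a normal play time value to seconds.

    Accepts plain seconds ("12.5") or the "hh:mm:ss.fraction" form.
    """
    parts = npt.split(":")
    if len(parts) == 1:
        return float(parts[0])
    if len(parts) == 3:
        hours, minutes, seconds = parts
        return int(hours) * 3600 + int(minutes) * 60 + float(seconds)
    raise ValueError(f"unsupported normal play time value: {npt!r}")


def smpte_to_seconds(timecode: str, fps: int = 25) -> float:
    """Convert a non-drop-frame "hh:mm:ss:ff" time code to seconds.

    The frame rate is an assumption: it must be supplied by the caller
    because the time code alone does not carry it.
    """
    hours, minutes, seconds, frames = (int(p) for p in timecode.split(":"))
    if not 0 <= frames < fps:
        raise ValueError(f"frame number {frames} out of range for {fps} fps")
    return hours * 3600 + minutes * 60 + seconds + frames / fps


if __name__ == "__main__":
    print(npt_to_seconds("0:01:30.5"))                # 90.5
    print(smpte_to_seconds("00:01:00:12", fps=24))    # 60.5
```

The frame-based form can only be resolved to a time offset once the frame rate is known, which is one reason a player needs information about the media before it can seek to a frame-accurate position.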

Temporal addressing approaches exist in SMIL, MPEG-7, MPEG-21 and Temporal URI.

Both SMIL and MPEG-7 require an indirection: one needs to get the XML description containing the temporal information before fetching the video content. It should be noted, though it was not mentioned at the Workshop, that even HTML 5 includes the notion of time offset and time segment.

On the other hand, the MPEG-21 and Temporal URI approaches rely on defining a URI syntax and thus don't rely on an additional XML description. However, these approaches also have limitations:

- They lack the ability to represent complex fragments, especially when combining temporal and spatial addressing.

- When using the fragment identifier component syntax of a URI, it is dependent on the media type of the retrieved representation (see section 3.5, Fragment, RFC 3986). For example, the MPEG-21 URI syntax is tied to the MPEG container. Thus, it would be difficult to apply one generic fragment identifier to the existing video or audio codecs.
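
To make the URI-based idea concrete, here is a minimal Python sketch that reads a temporal range and a spatial region from the fragment component of a URI. The `t=start,end` and `xywh=x,y,width,height` parameter names, the helper name `parse_clip_fragment`, and the example URI are all hypothetical and chosen only for illustration; they are not the MPEG-21 or Temporal URI syntax, and no media type discussed at the Workshop is known to define them.

```python
from urllib.parse import parse_qs, urlsplit


def parse_clip_fragment(uri: str) -> dict:
    """Extract a hypothetical temporal/spatial clip from a URI fragment.

    Assumed syntax (illustrative only): "#t=start,end&xywh=x,y,w,h",
    with times in seconds and the region in pixels.
    """
    fragment = urlsplit(uri).fragment
    params = parse_qs(fragment)
    clip = {}
    if "t" in params:
        start, _, end = params["t"][0].partition(",")
        clip["start"] = float(start) if start else 0.0
        clip["end"] = float(end) if end else None  # None: play to the end
    if "xywh" in params:
        x, y, w, h = (int(v) for v in params["xywh"][0].split(","))
        clip["region"] = (x, y, w, h)
    return clip


if __name__ == "__main__":
    uri = "http://example.org/video.ogv#t=10,20&xywh=160,120,320,240"
    print(parse_clip_fragment(uri))
```

Because the fragment is interpreted by the client according to the media type of the retrieved representation, a parser like this one would only be meaningful for media types that agree on such a syntax.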

5.2 Spatial Addressing

Spatial addressing provides the ability to reference a region in a video frame. Information can then be attached to the particular region or, when combined with time-based addressing, objects can then be tracked in the video.

Three solutions were mentioned at the workshop: SVG, SMIL and MPEG-7. All solutions require an indirection for accessing the region in the video.

6. Digital Rights Management

Several of the participants at the Workshop were interested in the topic of digital rights management, an issue particularly important in the music, motion picture and television industries. This topic touched on two subjects mentioned above: video codec and metadata. A baseline video codec and format container for online video content would only satisfy some of the content producers if it is associated with a digital rights management (DRM) solution. No one suggested that W3C should develop a DRM system, but future work at W3C needs to keep DRM issues in mind. It was noted that the choice of video codec doesn't need to be tied to the choice of DRM system, since the two technologies are generally independent. There was also some interest in investigating further the area of metadata for digital rights (copyright and licensing).

7. Other Topics of Interest

Other topics of interest listed in this section were mentioned or discussed at the workshop.

7.1 Accessibility

W3C has a mission to ensure that the Web is accessible to people with disabilities; naturally this includes video content. A vast majority of online video and non-professional content lacks captioning, sign language, or video description. The Web Content Accessibility Guidelines 1.0 were published in May 1999 and there are several solutions for captioning/video description currently available. Extensions have been made to support captioning using the W3C Timed Text (DFXP) format in combination with the Flash player, but DFXP itself is not yet a W3C Recommendation. Flash Player 9 adopted 3GPP TS 26.245 as its Timed Text format. SMIL 3.0 introduced SMILText, which can also be used for captioning/subtitling.

7.2 HTML video tag

The HTML video tag was mentioned several times during the Workshop. The primary focus of the discussion was around the baseline video codec but several participants were concerned with the introduction of yet-another-tag for embedding and controlling video content, especially since the SYMM Working Group has already published a specification that addresses timing and synchronization. The HTML Working Group introduced the notion of cue range in their specification and care should be taken to ensure the compatibility between the two models. No in-depth discussion happened at the Workshop on this issue and the best recommendation at this time is to ensure that the SYMM and HTML Working Groups coordinate their actions.

7.3 APIs for Controlling Video

The control of video content using APIs was a use case in several presentations and the . Both SMIL and HTML specifications are providing solutions to start, stop, or pause a video. Work is still happening in the HTML specification to extend the media API. A more extended API, the draft API for the Ogg Play Firefox plugin, was presented at the workshop.

7.4 Content Delivery and Network Traffic

The major issue in delivering video content over the Internet and the Web is network traffic scalability. While it's easy to deploy a Web server to deliver HTML pages and images, video content and its bandwidth requirements introduce an entirely new dimension. Progressive HTTP, Content Delivery Networks, streaming media servers, and P2P technologies are among the solutions, each with different deployment and bandwidth costs. P2P technologies have a significant impact on the networks of existing ISPs, forcing them to rethink the way they allocate bandwidth among their users.

8. Next steps

Five major areas of possible work emerged from the Workshop (the first three discussed in detail): video codecs, metadata, addressing, cross-group coordination and best practices for video content.

8.1 Codecs and containers

The W3C team will work with interested parties to evaluate the situation with regard to video codecs, and what, if anything, W3C can do to ensure that codecs, containers, etc. for the Web encourage the broadest possible adoption and interoperability.

8.2 Metadata

Talks at the Workshop highlighted a number of metadata standards in the space (and the number is part of the problem). Still, there was high interest in this area. One direction would be to create a Working Group tasked to come up with:

- a simple common ontology between the existing standards;

- a mapping between this ontology and the existing standards;

- a roadmap for extending the ontology, including information related to copyright and licensing rights.
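
A minimal Python sketch of what a mapping between a common vocabulary and existing formats might look like follows. The common property names (`title`, `creator`, `rights`), the `inhouse` target vocabulary, and the `translate` helper are hypothetical. The Media RSS element names are given from memory as an illustration and should be checked against that specification; nothing here reflects a vocabulary agreed at the Workshop.

```python
# Hypothetical mapping from a small common vocabulary to two target formats.
# "mediarss" element names are illustrative and should be verified against
# the Media RSS specification; "inhouse" is a fictional vocabulary.
COMMON_TO_TARGET = {
    "mediarss": {
        "title": "media:title",
        "creator": "media:credit",
        "rights": "media:copyright",
    },
    "inhouse": {
        "title": "Title",
        "creator": "Author",
        "rights": "License",
    },
}


def translate(record: dict, target: str) -> dict:
    """Rename the properties of a common-vocabulary record for a target format.

    Properties with no counterpart in the target format are dropped,
    mirroring how metadata can be lost along the delivery chain.
    """
    mapping = COMMON_TO_TARGET[target]
    return {mapping[key]: value for key, value in record.items() if key in mapping}


if __name__ == "__main__":
    record = {"title": "Demo clip", "creator": "Alice", "year": 2007}
    print(translate(record, "mediarss"))
    print(translate(record, "inhouse"))
```

Keeping the mapping as data rather than code means the roadmap item above (extending the ontology, for example with licensing information) would amount to adding entries to the table.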

8.3 Addressing

W3C should also consider creating a Group to investigate the important issue of addressing. The goal would be to:

- provide a URI syntax for temporal and spatial addressing;

- investigate how to attach metadata information to spatial and temporal regions when using RDF or other existing specifications, such as SMIL or SVG.

8.4 Best practices for video and audio content

A Group working on guidelines and best practices for effective video and audio content on the Web could be useful. It would look at the entire existing delivery chain from producers to end users, covering content delivery, metadata management, accessibility and device independence.

8.5 Cross-group coordination

Several existing W3C Working Groups are working on video-related issues and there is a need for coordination (controls, layouts, etc.):

- Video and CSS (layout and layering);

- Video and SMIL, SVG or HTML (effects, transforms, codecs, playback, API);

- Video and WAI (accessibility);

- Video and browsers and plugins (for device independence and managing plug-n-play specialized codecs that match various end-user experience needs within the browser).