A vision of the opportunities and the standards needed to enable them

Dave Raggett <dsr@w3.org>

W3C Fellow on assignment from Canon

Extending the Web to allow multiple modes of interaction

Augmenting human to computer and human to human interaction

Anywhere, Any device, Any time

Accessible to anyone

Convenient choice of modalities for every situation

Additional capabilities

Embedded or distributed architectures

Telematics — networking the car

Emergence of high resolution color displays integrated into the dashboard

Hands-free access for the driver

Must work in extremes of temperature/humidity

New frontier for mobile applications

Multimodal interfaces have benefits for

Use of mobile devices in office environment

Home PCs

Living room home entertainment systems

Games systems

Mobile devices

Other embedded devices

Initial work on requirements in Voice Browser WG

Joint MMI workshop with W3C/WAP Forum in 2000

W3C Multimodal Interaction WG launched in 2002

Member contributions on SALT, X+V

Work on use cases and requirements

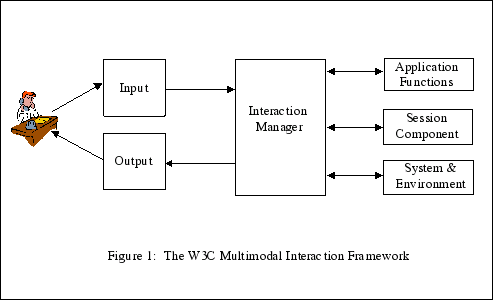

W3C Multimodal Interaction Framework

Draft specs for EMMA and InkML

MMI WG now in the process of rechartering

Access, Alcatel, Apple, Aspect, AT&T, Avaya, BeVocal, Canon, Cisco, Comverse, EDS, Ericsson, France Telecom, Fraunhofer Institute, HP, IBM, INRIA, Intel, IWA/HWG, KAIT, Kirusa, Loquendo, Microsoft, Mitsubishi Electric, NEC, Nokia, Nortel Networks, Nuance Communications, OnMobile Systems, Openstream, Opera Software, Oracle, Panasonic, ScanSoft, Siemens, SnowShore Networks, Sun Microsystems, Telera, Tellme Networks, T-Online International, Toyohashi University of Technology, V-Enable, Vocalocity, VoiceGenie Technologies, Voxeo

A level of abstraction above an architecture

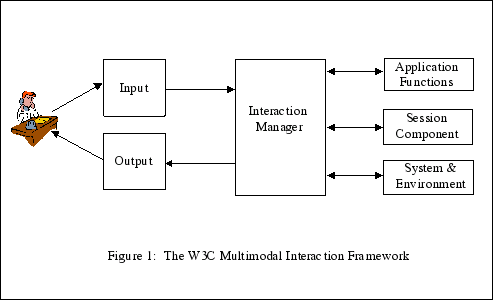

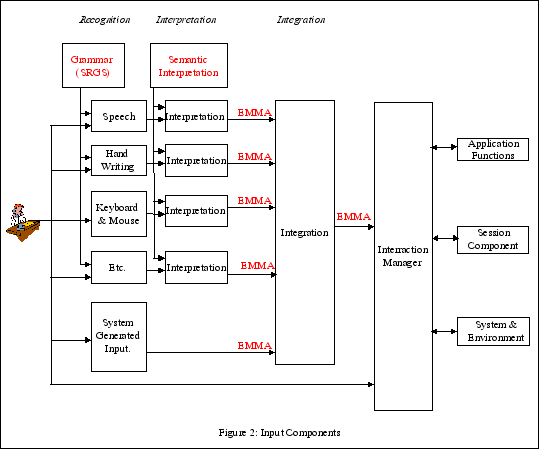

Extensible Multi-Modal Annotation

Supersedes earlier work on NLSML
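To make the role of EMMA concrete, here is a minimal Python sketch of how an interaction manager might pick the most confident interpretation out of an EMMA-style result. The specs were still drafts at the time of this talk, so the namespace URI and the `emma:confidence` and `emma:tokens` attribute names are assumptions taken from the later EMMA 1.0 vocabulary. The sample document, its `destination` payload, and the `best_interpretation` helper are hypothetical.

```python
import xml.etree.ElementTree as ET

# Assumption: namespace URI as used by the later EMMA 1.0 vocabulary.
EMMA_NS = "http://www.w3.org/2003/04/emma"

# Hypothetical recognizer output: two competing readings of one utterance.
EMMA_SAMPLE = """\
<emma:emma version="1.0" xmlns:emma="http://www.w3.org/2003/04/emma">
  <emma:one-of id="r1" emma:medium="acoustic" emma:mode="voice">
    <emma:interpretation id="int1" emma:confidence="0.75"
                         emma:tokens="flights to boston">
      <destination>Boston</destination>
    </emma:interpretation>
    <emma:interpretation id="int2" emma:confidence="0.68"
                         emma:tokens="flights to austin">
      <destination>Austin</destination>
    </emma:interpretation>
  </emma:one-of>
</emma:emma>
"""


def best_interpretation(xml_text):
    """Return (tokens, confidence, payload) for the most confident reading."""
    root = ET.fromstring(xml_text)

    def confidence(element):
        # Treat a missing confidence annotation as zero.
        return float(element.get(f"{{{EMMA_NS}}}confidence", "0"))

    candidates = root.iter(f"{{{EMMA_NS}}}interpretation")
    best = max(candidates, key=confidence, default=None)
    if best is None:
        return None
    # The application-specific payload sits inside the interpretation element.
    payload = {child.tag: child.text for child in best}
    return best.get(f"{{{EMMA_NS}}}tokens"), confidence(best), payload


if __name__ == "__main__":
    print(best_interpretation(EMMA_SAMPLE))
```

The point of the sketch is the separation of concerns: the annotations (confidence, tokens, mode) travel in the EMMA namespace, while the interpreted payload stays in the application's own vocabulary.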

Semantic Interpretation language

Designed for network and embedded use

Abstract software interface between I/O processor and host environment

Voice Browser WG for Voice/DTMF

MMI WG for ink and keystrokes

Use of grammars to constrain input

Interoperability challenge for pen gestures

Studying range of approaches to identify opportunities for standardization

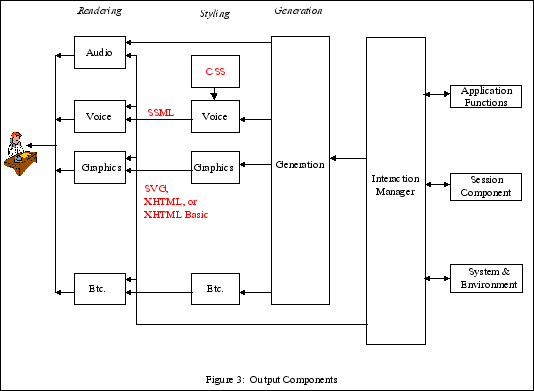

High level language or low level kernel?

Combination of speech and pen gestures

Studying a range of approaches

Adapting to device, user and environment

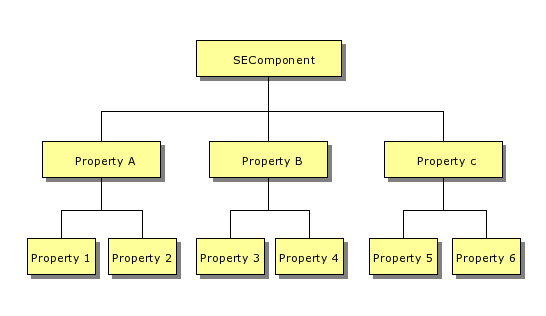

System and Environment framework

Namespaces isolate definitions from different organizations

Dynamic configurations and distributed applications present new challenges to Web developers

Sessions can help with:

Layering of concerns

XML transfer format for ink traces as part of multimodal applications

Allows server-side processing of

Developed with the help of Apple, Corel, Fraunhofer Gesellschaft, HP, IBM, Intel and Motorola
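As an illustration of server-side handling of ink, the following Python sketch reads pen traces from a small InkML-style document and computes their bounding box. It assumes the simplest trace encoding, comma-separated samples of whitespace-separated X and Y values, and the InkML namespace URI as later published. The sample data and the `parse_traces` and `bounding_box` helpers are hypothetical, and the sketch ignores richer features such as additional channels or difference-encoded values.

```python
import xml.etree.ElementTree as ET

# Assumption: namespace URI as used by the published InkML vocabulary.
INKML_NS = "http://www.w3.org/2003/InkML"

# Hypothetical ink data: two pen strokes with explicit X Y samples.
INK_SAMPLE = """\
<ink xmlns="http://www.w3.org/2003/InkML">
  <trace>10 0, 9 14, 8 28, 7 42</trace>
  <trace>130 155, 144 159, 158 160</trace>
</ink>
"""


def parse_traces(xml_text):
    """Return a list of traces, each a list of (x, y) float tuples."""
    root = ET.fromstring(xml_text)
    traces = []
    for trace in root.iter(f"{{{INKML_NS}}}trace"):
        points = []
        # Samples are separated by commas, channel values by whitespace.
        for sample in (trace.text or "").split(","):
            values = sample.split()
            if len(values) >= 2:
                points.append((float(values[0]), float(values[1])))
        traces.append(points)
    return traces


def bounding_box(traces):
    """Return (min_x, min_y, max_x, max_y) over all points in all traces."""
    xs = [x for trace in traces for x, _ in trace]
    ys = [y for trace in traces for _, y in trace]
    return min(xs), min(ys), max(xs), max(ys)


if __name__ == "__main__":
    strokes = parse_traces(INK_SAMPLE)
    print(len(strokes), "traces, bounding box", bounding_box(strokes))
```

A handwriting or gesture recognizer running on a server would start from the same kind of point lists, which is why a shared transfer format for traces matters.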