Publishing

The concept of publishing on the web has evolved as the web's ecosystem has enlarged and diversified, and as the capabilities of browsers and the web standards that they implement have developed. There is no single definition of what publishing on the web means. Instead there are a number of activities that could be viewed as publication or distribution in a legal sense, or something else. This section describes each of these activities and how they work.

Hosting

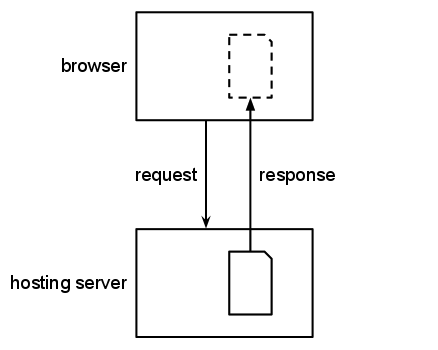

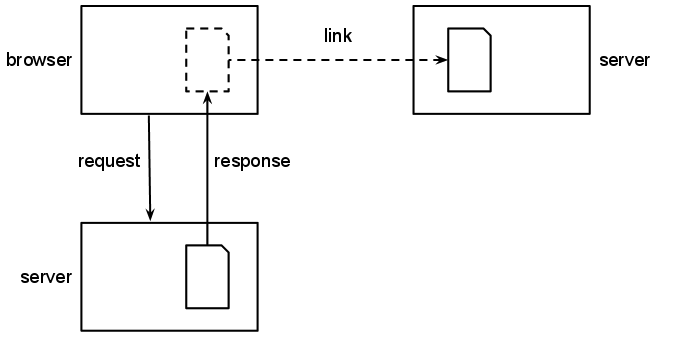

The basic form of publication on the web is hosting. A server hosts a file if it stores the file on disk or generates the file from data that it stores, and that file did not (to the server's knowledge) originally come from elsewhere on the web.

A browser makes a request to a hosting server for a file. The hosting server responds with the content of that file, which the browser stores in a local cache and displays to the user.

Hosting and Possession

Legislation that equated hosting illegal material with possessing illegal material would drastically curtail the ability of service providers to operate efficiently.

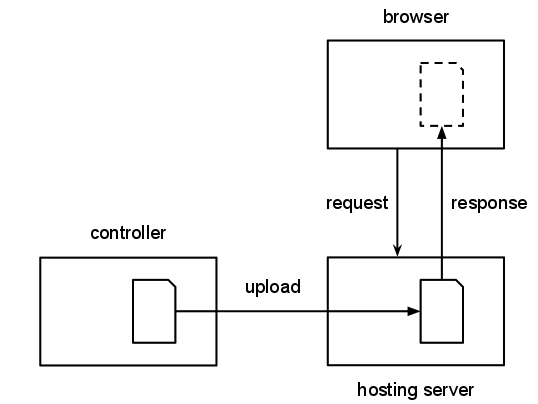

The presence of data on a server does not necessarily mean that the organisation that owns and maintains the server has an awareness of that data being present. Many websites are hosted on shared hardware that is owned by a service provider that stores and serves data for other controlling individuals and organisations which determine the data they provide on the site. Because of this, multiple servers may host the same file at different URIs. For example, an artist could upload the same image to multiple servers, which then store the image and serve it to others.

A controller uploads a file to a hosting server, which is then accessed by a browser.

There are many different types of service provider. Some may exercise practically no control over the software and data that they host but provide hardware on which code can run. Others may focus on particular types of content, such as images (eg Flickr), videos (eg YouTube) or messages (eg Twitter). There may be many service providers involved in the publication of a particular file on the web: some providing hardware, others providing different kinds of publishing support.

Licensing Transformation by Service Providers

Service providers should clearly indicate to controllers what transformations will be performed on their material.

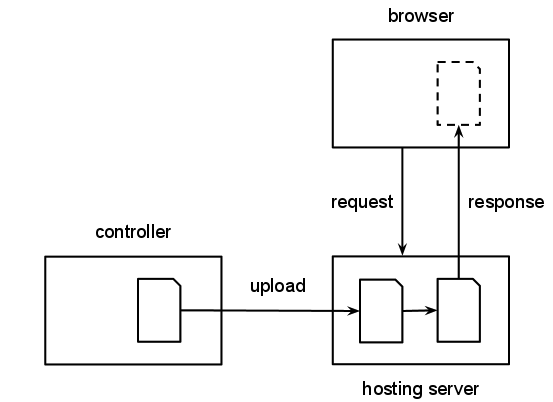

A controller uploads a file to a hosting server, which is then automatically transformed into a different format which is accessed by a browser.

Some service providers automatically perform transformations on the material that they host as part of their service, such as converting it to alternative formats, clipping or resizing it, or marking up text. When they sign up to a service, controllers explicitly or implicitly enter into an agreement that grants the service provider a license to perform transformations on the material that the controllers upload.

Transformation of Illegal Material

Legislation that forbade transformations on illegal material would similarly limit the services that service providers could provide.

Service providers that host particular types of material often employ automatic filters to prevent the publication of illegal material, but it is impossible for a service provider to detect and filter out everything that might be illegal. If a service provider automatically transforms that material as part of its service, and illegal material is not successfully filtered out, automatic processing (including the transformation) of files will still take place.

To add to the complexity of this area, it is possible for each of the following to be in different jurisdictions:

- the individual or organisation who controls the data

- the service provider(s)

- the physical servers that host the data

Caching and Distributing

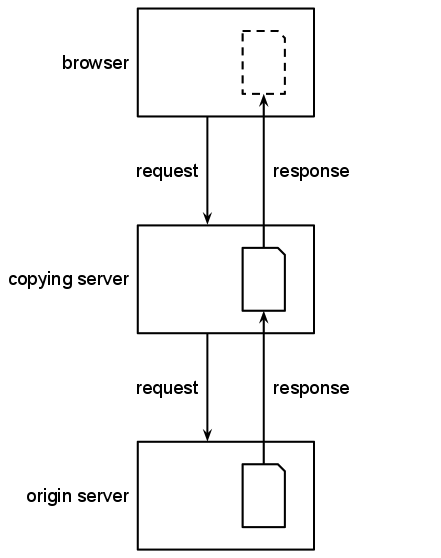

Some servers provide access to files that are hosted elsewhere on the web, on an origin server that holds the original version of the file. These files might be stored on the server and provided again at a later time, in which case for the purposes of this document it is termed a caching server, or might simply pass through the server in response to a request, in which case for the purposes of this document it is termed a distributing server.

A browser requests a file from a server; it responds with a document that it has fetched from an origin server.

It is usually impossible to tell whether a server is providing a stored response or has made a new request to an origin server and is serving the results of that request. Servers commonly store the results of some requests and not others, acting as a caching server some of the time and as a distributing server the rest.
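The difference between the two behaviours can be illustrated with a minimal sketch. The class name `RelayServer`, the `store` flag and the `fetch_from_origin` callback are hypothetical names introduced only for this illustration; real servers decide what to store per request, based on many more factors.

```python
from typing import Callable, Dict


class RelayServer:
    """Serves files that live on an origin server (illustrative sketch only)."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes], store: bool) -> None:
        self.fetch_from_origin = fetch_from_origin
        self.store = store  # True: acts as a caching server; False: distributing server
        self.cache: Dict[str, bytes] = {}

    def handle(self, uri: str) -> bytes:
        if self.store and uri in self.cache:
            # Stored response: the origin server is not contacted.
            return self.cache[uri]
        # New request to the origin server.
        body = self.fetch_from_origin(uri)
        if self.store:
            self.cache[uri] = body
        return body
```

In both modes the caller of `handle` receives the same bytes, which is why a browser usually cannot tell which behaviour it is observing.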

In both cases, the file the caching or distributing server provides may be different from the original one that it has accessed from the origin server. For example:

- links within an HTML page may be rewritten so that they point to pages that are also served by the caching server or distributing server

- JavaScript and CSS files may be combined and compressed to provide speedier access

- banners may be added within an HTML page to highlight that the page is a copy of an original from somewhere else

- files may be compressed or converted to different formats

- wholly new documents may be created that bring together information from multiple different sources

Caching and distributing servers are extremely useful on the web. There are four main types of caching and distributing servers discussed here: proxies, archives, search engines and reusers. The distinctions between them are summarised in the table below.

| | proxy | archive | search engine | reuser |
|---|---|---|---|---|
| purpose | increase network performance | maintain historical record | locate relevant information | better understand information |
| refreshing | based on HTTP headers | never | variable | based on HTTP headers |
| retrieval | on demand | proactive | proactive | usually on demand |
| URI use | usually uses same URI | uses new URI | uses new URI | uses new URI |

Proxies

A proxy is an application that sits between a server (such as a website) and a client (such as a browser). On the web, caching proxies are often used to speed up users' experience by storing pages and other resources on the proxy server, so that they can be served directly from the proxy rather than from the origin server. Some proxies, particularly those used by mobile operators, perform other actions on the content that passes through them, such as rewriting or merging JavaScript to make it faster to access.

Caching by Proxies

Legislation and licenses that restrict copying may consequently prevent caching by proxies, which would make the web slower.

Transformation by Proxies

Legislation and licenses that restrict the changing of data by proxies may also slow down the web, particularly in low-bandwidth situations.

Controlling Proxy Behaviour

Proxies should comply with instructions from origin servers that describe whether pages may be copied and transformed, but will only be able to comply with those that are machine-readable.
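One machine-readable form such instructions can take is the HTTP `Cache-Control` response header, whose `no-store` and `no-transform` directives address storing and transforming respectively. The sketch below shows how a proxy might consult them. The function names are hypothetical, and the parsing is deliberately simplified: it does not handle quoted directive values that contain commas.

```python
from typing import Dict, Optional


def parse_cache_control(header: str) -> Dict[str, Optional[str]]:
    """Split a Cache-Control header value into lower-cased directives."""
    directives: Dict[str, Optional[str]] = {}
    for part in header.split(","):
        part = part.strip()
        if not part:
            continue
        name, _, value = part.partition("=")
        directives[name.strip().lower()] = value.strip().strip('"') or None
    return directives


def may_store(header: str) -> bool:
    """A proxy should not keep a copy when the origin sends no-store."""
    return "no-store" not in parse_cache_control(header)


def may_transform(header: str) -> bool:
    """A proxy should pass the content through unchanged when the origin sends no-transform."""
    return "no-transform" not in parse_cache_control(header)
```

For example, a response carrying `Cache-Control: max-age=60, no-transform` may be stored by the proxy but should not be rewritten or recompressed by it.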

Proxies come in four general flavors:

- Forward proxies serve a given community, such as a company. The browsers of the members of that community are set up to use the proxy, and whenever they fetch a resource, the request is routed through the proxy. If the proxy already has a cached copy of the resource, it returns that copy rather than forwarding the request on to the origin server. If many people within the community are requesting the same pages, this can speed up their access and lower the bandwidth use of the community. The proxy can also be used to prevent access to unsuitable sites and to carry out virus checking on the content.

- Reverse proxies sit in front of a (private) server and cache responses from that server. This can reduce the load on the server and speed up responses, which is particularly important when the response to a request takes time to compute. An extreme version of this is a content-delivery network (CDN) which operates a set of proxies and directs requests to the nearest of these, saving transmission time as well as processing time.

- Gateways are proxies which usually do not cache the result of a request: they simply pass on the request to an origin server and pass the response back. Gateways may be used to prevent access to particular parts of the web, or to bypass those blocks. For example, a gateway may be set up that prevents access for users in a particular country to particular websites; another gateway might be set up, at a non-blocked location, that enables people within that country to access those websites by mapping requests on that gateway to requests on the blocked website.

- Transforming proxies transform the web documents delivered through them, either to address issues such as presentation or to speed up access to those documents (they are often used to compress content, for example) [reference to Mobile Web Best Practices working group work on best practices for transforming proxies].

The use of a forward proxy, gateway or transforming proxy may be configured either on an individual machine or transparently for a particular network. Users may have no idea that their requests are channelled through a given proxy, or they may have configured their set-up to use the proxy.

Reverse proxies appear to be normal servers to users: it is impossible for a user to tell that their request is actually passed on to a completely different origin server, or where that server is. This is intentional as the origin server in this case is a private one.

To improve performance, some proxies, particularly CDNs, may pre-fetch resources that a page includes, since these resources are likely to be requested by the browser soon after the page is viewed. In other words, although generally the contents of a proxy's cache will be determined by the requests that users of that proxy have made, the proxy might also in some cases contain content that no one has ever requested.

Archives

Archives aim to catalog and provide access to some portion of web content to provide an on-going historical record. They use crawlers to fetch pages and other resources from the portion of the web that they cover, and store them on their own servers, along with some metadata about the pages, particularly when they were retrieved. They then provide access to the stored copies of the resources at particular historical dates, enabling people to see how pages used to appear.

Archiving by Archives

Legislation and licenses that restrict copying may consequently prevent archiving and therefore prevent archives from storing an accurate historical record of the web.

Transformation by Archives

Legislation and licenses that restrict the rewriting of links by archives make them harder, or even impossible, to use.

Controlling Archive Behaviour

Archives should comply with instructions from origin servers that describe whether pages may be copied and transformed, but will only be able to comply with those that are machine-readable.

Archives are usually run by institutions that have a legal mandate and responsibility to keep this historical record, such as a legal deposit. Although their primary purpose is long term record-keeping, they often make this material available online as well. While they might restrict access to the data for a period of time after it is collected, for security or privacy reasons, it is not usually possible to remove information from an archive. Users might use archives for research, but also to access information that has otherwise been removed from the web.

Archived pages are usually distinguishable by end users from the original page using banners placed within the page or having the original page appear within a frame. The links (both to other pages and to embedded resources such as images) are usually rewritten so that when the user interacts with the page, they are taken to the version of the linked resource at the same point in time.
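The link rewriting described above can be sketched as follows. This is an illustration under stated assumptions, not how any particular archive works: the `archive_prefix` layout (an archive host followed by a snapshot date) is hypothetical, and a regular expression is used for brevity where a real archive would use a full HTML parser. The pattern also matches attributes such as `data-src`, and only handles double-quoted values.

```python
import re
from urllib.parse import urljoin

# Matches href="..." and src="..." attributes with double-quoted values.
LINK_ATTRIBUTE = re.compile(r'\b(href|src)="([^"]*)"')


def rewrite_links(html: str, page_uri: str, archive_prefix: str) -> str:
    """Point every link and embedded resource at the archived copy."""

    def replace(match: "re.Match[str]") -> str:
        # Resolve relative references against the original page first.
        absolute = urljoin(page_uri, match.group(2))
        return f'{match.group(1)}="{archive_prefix}{absolute}"'

    return LINK_ATTRIBUTE.sub(replace, html)
```

Because every rewritten URI carries the same prefix, following a link from an archived page leads to the archive's copy of the target from the same snapshot rather than to the live page.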

Search Engines

Search engines aim to catalog and provide access to as many web pages as they can, so that they can direct users to appropriate information in response to a search. They use crawlers to fetch pages and other resources from the web, analyse them and store them on their own servers to support further analysis.

Copying by Search Engines

Legislation and licenses that restrict copying by search engines prevent information from being easily found by users.

Transformation by Search Engines

Legislation and licenses that restrict the transformation of material by search engines prevent them from using the information within the page to provide relevant information to people searching.

Controlling Search Engine Behaviour

Search engines should comply with instructions from origin servers that describe whether pages may be copied and transformed, but will only be able to comply with those that are machine-readable.
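A widely used example of such a machine-readable instruction is a `robots.txt` file, which is not named in the text above and is offered here only as an illustration. The sketch uses Python's standard `urllib.robotparser` module; the crawler name `ExampleBot` and the file contents are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt published by an origin server.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def may_crawl(robots_txt: str, user_agent: str, uri: str) -> bool:
    """Check whether the given crawler is permitted to fetch the URI."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, uri)
```

With the file above, `may_crawl(ROBOTS_TXT, "ExampleBot", "http://example.org/private/report.html")` is false, while the same call for `http://example.org/index.html` is true. Instructions that exist only in human-readable terms and conditions cannot be checked this way.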

Search engines are most interested in indexing resources and providing links to them rather than in the content of the resource itself. They might not copy the page itself, but they always store metadata about the page, derived from the information in the page itself and other information on the web, such as what other pages link to it.

Search engines play an important role in the web in enabling people to find information, including that which would otherwise be lost or is temporarily unavailable. When a user views a stored page from a search engine, it is usually obvious that the search engine is involved (from the URI of the page and from banners or framing), that the content originally came from somewhere else, and where it came from. The links within the page are not usually rewritten.

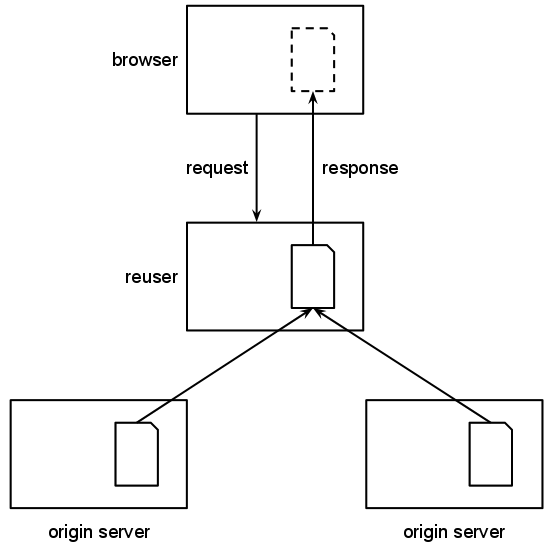

Reusers

Data reuse is becoming more prevalent as web servers act as services to others. A server that is a reuser fetches information from one or more origin servers and either provides an alternative URI for the same page or adds value to it by reformatting it or combining it with other data. Good examples are the BBC Wildlife Finder, which incorporates information from Wikipedia, Animal Diversity Web and other sources, and triplr.org, which converts RDF data from one format to another as a service.

A browser requests a document, which is constructed by the reusing server requesting information from two origin servers

Controlling Reuse

Reusers should comply with instructions from origin servers that describe whether pages may be copied and transformed.

Attributing Reused Material

Reusers should indicate the sources of the information on pages, for both humans and computers.

Reusers that do not change the information from the origin server may be used to simplify access to the origin server (by mapping simple URLs to a more complex query) or to provide a route around gateways or the same-origin policy (as servers are not limited in where they access resources from).

Since reused information is, by design, seamlessly integrated into a page that is served from the reuser, people viewing that page will not generally be aware that the information originates from elsewhere. The URIs used for the pages will be those of the reuser, for example. Licenses on the material may require attribution; even when they do not, it is good practice for reusers to indicate where the material originates.

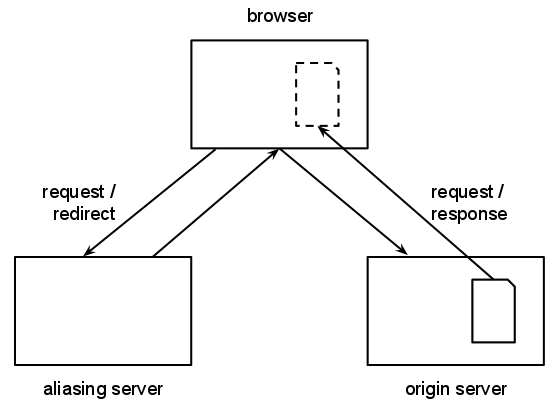

Aliasing

An alias is a URI that points the browser to another URI on an origin server. A server can automatically redirect a browser (using an HTTP 3xx status code and a Location header). Web pages from a server can do the same thing using a <meta> element with an http-equiv attribute set to Refresh; this technique is often used with a slight delay to indicate to the user that they are being redirected to another page.
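The page-level form of redirection can be detected by inspecting the markup. The following sketch uses Python's standard `html.parser` module to find the delay and target of a <meta> refresh; the class and function names are hypothetical, and only the common `delay; url=target` form of the `content` attribute is handled.

```python
from html.parser import HTMLParser
from typing import Optional, Tuple


class MetaRefreshFinder(HTMLParser):
    """Records the first <meta http-equiv="Refresh"> found in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.refresh: Optional[Tuple[float, str]] = None

    def handle_starttag(self, tag, attrs):
        if tag != "meta" or self.refresh is not None:
            return
        attributes = dict(attrs)
        if (attributes.get("http-equiv") or "").lower() != "refresh":
            return
        delay_text, _, rest = (attributes.get("content") or "").partition(";")
        try:
            delay = float(delay_text.strip())
        except ValueError:
            return  # malformed delay: ignore this element
        target = rest.strip()
        if target.lower().startswith("url="):
            target = target[4:].strip()
        self.refresh = (delay, target)


def find_meta_refresh(html: str) -> Optional[Tuple[float, str]]:
    """Return (delay in seconds, target URI), or None if the page has no refresh."""
    finder = MetaRefreshFinder()
    finder.feed(html)
    return finder.refresh
```

An empty target string in the result means the element only asks for the current page to be reloaded after the delay.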

A browser requests a file from an aliasing server; it responds with a redirection to a document hosted on an origin server and doesn't send any content itself

Redirecting to Illegal Material

Aliases mean that users could easily fall foul of legislation or licenses that banned access to particular information, because it is easy to put in place URIs that appear innocent but redirect to the banned information.

Aliases do not involve any of the information from the origin server passing through, or being stored by, the redirecting server, but the redirecting server will be able to record when a particular URI is requested.

Although it is preferable to only have one URI for a particular resource, redirections are a useful mechanism for managing change on the web. They are used within websites when the structure of the website changes, or between websites when a new website is created that supersedes the first, or to archived information when a host no longer wants to provide access to a file itself.

Redirections are also used to provide other services. Link shorteners provide a short URI for a resource that is then redirected to the original URI, and are useful in locations where space is limited such as in print or on Twitter. Depending on their implementation, link-tracking services can use a similar technique to enable servers to analyse which links are followed from their site: the link tracker records the request and redirects the user to the true target page.
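A link shortener that also tracks requests can be sketched in a few lines. Everything here is hypothetical: the class name, the scheme for generating short codes, and the choice of a 302 status code are assumptions made for the illustration, and a real service would sit behind an actual HTTP server.

```python
from typing import Dict, List, Tuple


class AliasingServer:
    """Maps short paths to target URIs and records each request (sketch only)."""

    def __init__(self) -> None:
        self.targets: Dict[str, str] = {}
        self.request_log: List[str] = []

    def shorten(self, target: str) -> str:
        # Hypothetical scheme: a hexadecimal counter used as the short code.
        code = format(len(self.targets) + 1, "x")
        self.targets[code] = target
        return "/" + code

    def handle(self, path: str) -> Tuple[int, Dict[str, str], bytes]:
        """Return (status code, headers, body) for a requested path."""
        self.request_log.append(path)  # the tracking side of the service
        target = self.targets.get(path.lstrip("/"))
        if target is None:
            return 404, {}, b""
        # The response body is empty: no content from the target passes through.
        return 302, {"Location": target}, b""
```

The sketch reflects both properties discussed in this section: the aliasing server never handles the target's content, yet it learns every time the short URI is requested.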

When aliasing is used, users may not be aware of the eventual target of a link, or of the involvement of an aliasing server, both of which are important. Shortened links, for example, hide the target location behind a URI that often has no visible relationship to the eventual destination of the page. Some implementations of link tracking do not change the original destination of the link (such that the status bar on a browser shows the eventual target of the page) but instead use the onclick event to direct the user to the aliasing server.

Following a redirection, browsers change the address bar to the new location, but this is often the only indication, so users may or may not be aware of this happening.

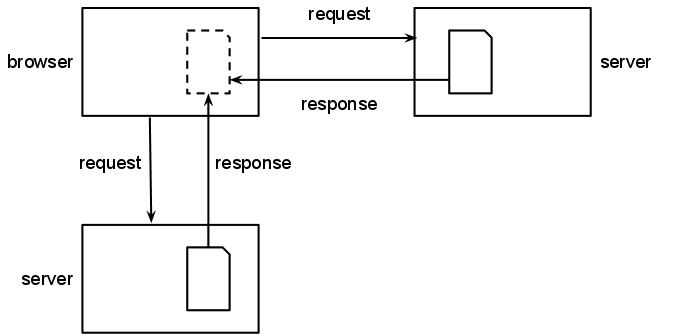

Including

Web pages typically rely on many resources other than the HTML in which the page is written, such as images, video, scripts, stylesheets, data and other HTML. The HTML in a web page refers to these external resources in markup, for example, an <img> element uses the src attribute to reference an image which should be shown within the page. Material that is included within a web page may appear to be a hosted copy to the user of a website, but in fact be hosted completely separately, outside the control of the owner of the web page.

A browser requests a file from a server; instructions in the page tell the browser to request another resource from a different server

Controlling Inclusion

Includers should comply with computer and human-readable instructions from origin servers that describe whether and how resources may be included within a page.

Attributing Inclusion

Those who include third-party resources within their web pages should indicate the sources of the information on such page for both humans and computers.

HTML supports several different ways of including other resources in a page, but they all work in basically the same way. When a user navigates to a web page, the browser typically fetches all the included resources automatically into its local cache and executes them or displays them within the page.
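To make the mechanism concrete, the sketch below lists the resources that a browser would fetch automatically for a given page. It is a simplified illustration using Python's standard `html.parser` module: the set of elements covered is an assumption and is not exhaustive, and the names `InclusionFinder` and `included_resources` are hypothetical.

```python
from html.parser import HTMLParser
from typing import List
from urllib.parse import urljoin

# Elements whose src attribute causes a resource to be fetched (not exhaustive).
SRC_ELEMENTS = {"img", "script", "iframe", "video", "audio", "source", "embed"}


class InclusionFinder(HTMLParser):
    """Collects the absolute URIs of resources included by a page."""

    def __init__(self, page_uri: str) -> None:
        super().__init__()
        self.page_uri = page_uri
        self.included: List[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag in SRC_ELEMENTS and attributes.get("src"):
            self.included.append(urljoin(self.page_uri, attributes["src"]))
        elif tag == "link" and attributes.get("href"):
            relations = (attributes.get("rel") or "").lower().split()
            if "stylesheet" in relations:
                self.included.append(urljoin(self.page_uri, attributes["href"]))


def included_resources(html: str, page_uri: str) -> List[str]:
    finder = InclusionFinder(page_uri)
    finder.feed(html)
    return finder.included
```

Ordinary <a> links are deliberately ignored, since the browser does not fetch their targets until the user follows them.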

Inclusion is different from hosting, copying or disseminating a file because the information is never stored on, nor passes through, the server that hosts the web page doing the including. As such, although the included resources are an essential component of the page to make it appear and function as a whole, the server of the web page does not have control over their content.

Users may not be aware that included resources are used within a page at all. When included resources are embedded within the page such that they are visible to a user, it won't be clear that an image or video is from a third-party website rather than the website that they are visiting unless this is explicitly indicated within the content of the page.

A resource that is included in a popular page causes a large number of requests to the server on which the file is published, which can be burdensome to the third party that hosts the file. Publishers who intend their files to be reused in this way therefore typically have terms and conditions that apply to the reuse of those files and may have to put in place technical barriers to prevent it.
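One technical barrier of this kind, offered here as an assumption since the text does not name a specific mechanism, is to inspect the HTTP `Referer` request header and refuse requests that originate from pages on other sites. The function name and the policy for a missing header are hypothetical choices.

```python
from typing import Optional, Set
from urllib.parse import urlsplit


def allow_inclusion(referer: Optional[str], permitted_hosts: Set[str]) -> bool:
    """Decide whether to serve a file, based on the page that asked for it."""
    if not referer:
        # Header absent: a direct visit, or a client that withholds it.
        # Allowing these requests is a policy choice, not a requirement.
        return True
    host = urlsplit(referer).hostname
    return host in permitted_hosts
```

The check is only advisory in practice, because the header is supplied by the client and may be missing or altered.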

As with normal links, included resources may or may not have the same origin as the page that includes them. Resources such as images and scripts that are included within the web page may be from any site. However, browsers implement a same-origin policy which generally means that third-party resources cannot be fetched and processed by scripts running on the page, for example through XMLHttpRequests [[XMLHTTPREQUEST]] (though typically these scripts can write markup into the page which includes such resources).
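Two URIs have the same origin when their scheme, host and port all match. A minimal sketch of that comparison follows; the function names are hypothetical, and only the default ports for `http` and `https` are filled in.

```python
from typing import Optional, Tuple
from urllib.parse import urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def origin(uri: str) -> Tuple[str, str, Optional[int]]:
    """Return the (scheme, host, port) triple that identifies a URI's origin."""
    parts = urlsplit(uri)
    scheme = parts.scheme.lower()
    port = parts.port if parts.port is not None else DEFAULT_PORTS.get(scheme)
    return scheme, (parts.hostname or "").lower(), port


def same_origin(first: str, second: str) -> bool:
    return origin(first) == origin(second)
```

Under this comparison `http://example.org/a` and `http://example.org:80/b` share an origin, while `http://example.org/` and `https://example.org/` do not.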

Inclusion Chains

When scripts or HTML are included into web pages, the included resource may itself include other resources (which may include still more and so on). The author of the original web page has control over which resources it includes, but will not have control over which resources those included resources go on to include.

Whatever level of remove from the origin page, the publishers of included resources may change the content of those resources at any time, possibly without warning. This has been used in cases where websites included third-party images without permission, to substitute the image with something distasteful or to redirect to a link that performed an action on the user's behalf; see Preventing MySpace Hotlinking.

Hidden Requests

Some of the resources that are included within a page may be invisible to the user. An example is a hidden image that is used for tracking purposes: each time a user navigates to the page, the hidden image is requested; the server uses the information from the request of the image to build a picture of the visitors to the site.

This facility can be used for malicious purposes. An <img> element can point to any URI (not just an image) and causes a GET request on that resource. If a website has been constructed such that GET requests cause an action to be carried out (such as logging out of a website), a page that includes this "image" will cause the action to take place.

Linking

Linking is a fundamental notion for the web. HTML pages use <a> elements to insert links to other pages on the web, with the href attribute holding the URI for the linked page. Some of the links will be to pages from the same origin; others will be cross-origin links to pages on third-party sites that hold related information.

A browser requests a file from a server; instructions in the page link to other resources, but the browser does not retrieve that resource until told to do so

Permitting Linking

Legislation and licenses that forbid linking to particular pages on the web undermine the central mechanism for the way the web works.

Controlling Linking

Websites cannot prevent others from linking to their pages (addressing a page within another page) but can prevent access to those pages when they are accessed through those links.

Linking to Illegal Material

Website owners could easily fall foul of legislation or licenses that banned links to pages that contain illegal information, because they are not in control of the information on the linked pages.

A user can usually tell where a link is going to take them prior to selecting it (clicking, tapping, etc.) through the browser UI (e.g. by "mousing over" it) or after the link is selected through the status bar in the browser, although some links are overridden by onclick event handling that takes them to a different location. Some websites, such as Wikipedia, use icons to indicate when a link is a cross-origin link and when it will take a user to a page on the same server. The use of interstitial pages or dialog boxes that warn users they are about to leave the site in question can obscure the eventual destination of the link, as discussed elsewhere in this document.

If the link is a cross-origin link (or even in some cases where it is an internal link), the publisher of the origin page will have no control over the content or access policies of the linked page. These are the responsibility of the publisher of that page; the TAG Finding on "Deep Linking" in the World Wide Web [DEEPLINKING] describes the ways in which publishers can control access to their pages and the fundamental principle that addressing (linking to) a page is distinct from accessing it.

Legal issues related to linking

As described above, in the context of the architecture of the World Wide Web, in linking from one page to another, a Web page author is referring to a part of the linked-to website or service. These links, as accessed through and processed by browsers, are designed to be public identifiers. The existence of the link does not imply the right to access, and websites are free to use any one of many access control techniques to restrict access. Hence, linking is a “speech act”. It is the opinion of the TAG that linking should therefore enjoy the same protections enjoyed by any other type of protected speech.

Freedom of expression (speech) is a right enshrined under Article 19 of the Universal Declaration of Human Rights. Countries that adhere to this idea of freedom of expression implement it in differing terms. However, since linking constitutes a type of expression, inasmuch as there is a right to freedom of expression, this right encompasses the right to link.

Linking Out of Control

Traditionally, a user must take a specific action in order to navigate to the linked page, such as by clicking on the link or selecting it with a keystroke or a voice command. In these cases, the linked page cannot be accessed without the user's knowledge and consent (though they may not know where they will eventually end up).

Page Access might not be Purposeful

The appearance of a page within a browser's cache does not necessarily mean the user purposefully navigated to a page.

There are three practices used by some sites that mean that users do not necessarily have control over whether a link is followed:

- Browsers can be made to navigate to another page using scripted navigation, which may simply run automatically (navigating the user to another page after a period of time, for example) and can hide the location of a link, such that users don't know where they will be navigating to. The HTML5 history API [[HTML5]] enables a script to change the address bar, which can mean that the address bar does not reflect the actual location of the page (although this use is limited to locations that have the same origin as the original page).

- Browsers might pre-fetch resources that seem likely to be visited next, so that the target page is loaded more quickly when the user accesses it. A page can indicate which links should be pre-fetched using the prefetch link relation in a link. For example, a page might indicate that the first result in a list of search results should be fetched before the user actually navigates the link.

- Browsers may support offline web applications [[HTML5]] which direct browsers to a manifest that lists files that the browser then downloads so that the web application can be used when an internet connection is not available.
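As an illustration of the pre-fetching case in the list above, the sketch below finds the URIs that a page marks with the prefetch link relation, which a browser may then retrieve before the user asks for them. It assumes the hint is given on a <link> element; the class and function names are hypothetical.

```python
from html.parser import HTMLParser
from typing import List
from urllib.parse import urljoin


class PrefetchFinder(HTMLParser):
    """Collects the targets of <link rel="prefetch"> elements in a page."""

    def __init__(self, page_uri: str) -> None:
        super().__init__()
        self.page_uri = page_uri
        self.hints: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        relations = (attributes.get("rel") or "").lower().split()
        if "prefetch" in relations and attributes.get("href"):
            self.hints.append(urljoin(self.page_uri, attributes["href"]))


def prefetch_hints(html: str, page_uri: str) -> List[str]:
    finder = PrefetchFinder(page_uri)
    finder.feed(html)
    return finder.hints
```

Any URI returned by `prefetch_hints` may end up in the browser's cache without the user ever having chosen to visit it.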