ABSTRACT

Graphics and multimedia available on the Web have important roles in explaining, clarifying, illustrating, drawing attention to, grouping, and structuring information. With some effort and awareness of the issues, it is possible to make this information available to all users, including users with disabilities. The World Wide Web Consortium's (W3C) Web Accessibility Initiative (WAI) supports this goal by providing guidelines for accessible Web content, authoring tools, and user agents. In addition, people designing markup languages need to be aware of accessibility issues in the context of user experience. This paper presents a few easily remembered heuristics for designers of markup languages, based on the accessibility themes and principles of the WAI guidelines and on W3C Notes on the accessibility features of graphics and multimedia languages.

Keywords

Accessibility, design heuristics, multimedia, graphics, design process, Web.

1. INTRODUCTION

Multimedia and graphics help users focus on important information, help them understand complicated concepts, provide concrete examples, visualize large models that are hard to understand otherwise, and provide engaging hands-on experience. Images, sound, animation, live video, different 3D models and interactive elements have become a normal part of the Web. They are often indispensable for users, particularly users with cognitive disabilities, who may not be able to easily understand complicated textual content. However, they can also create barriers for other users, such as blind users.

When multimedia and graphics are made accessible, the number of potential users increases considerably. The material becomes available not only to users with disabilities but also to users with nontraditional devices and users in noisy or mobile environments. Furthermore, accessibility often increases usability as well.

The Web Accessibility Initiative (WAI) has developed several guidelines for accessibility: Web Content Accessibility Guidelines 1.0 (WCAG) [20], User Agent Accessibility Guidelines 1.0 (UAAG) [5], and Authoring Tool Accessibility Guidelines 1.0 (ATAG) [19]. These guidelines were developed, and extensively reviewed, by working groups that included disabled users, disability organizations, software developers, researchers, and experts in accessibility, usability, Web design, and different Web technologies and application domains.

Each markup language has its own set of techniques for achieving accessibility. When new, specialized markup languages and the user agents that display them are developed for multimedia and graphics, it is important that they provide the necessary means to support accessibility. Language designers are not always deeply knowledgeable about accessibility and need help keeping accessibility requirements in mind. Similarly, HTML Web page designers benefit from the WAI Quick Tips, which give them a short and easily digestible reminder until they know the full guidelines thoroughly.

To help the designers of specialized multimedia and graphics languages, we developed a few simple heuristics that can help them remember and understand the core demands of accessibility. We selected the heuristics based on the themes and principles of accessible design and the experience gained from writing the Accessibility Notes for Scalable Vector Graphics (SVG) [10] and Synchronized Multimedia Integration Language (SMIL) [9]. In addition, we extrapolated our experience to 3D interfaces. As the heuristics look at the user experience as a whole, they may also help Web tool and page designers while they are getting familiar with the WAI guidelines.

We first examine the design themes and principles that can be found in the WAI guidelines. Then we present the heuristics that are generated based on the experience of presenting accessibility features specific to SMIL and SVG and XML languages in general.

Finally, we examine how accessibility heuristics and guidelines could be employed in a usability process. Our aim is for accessibility to become an integral part of usability methods and of the design process.

2. ACCESSIBLE DESIGN THEMES AND PRINCIPLES

The user's experience of a Web page comes both from the design of the page and from the user agent that is used to view it. Therefore we base these accessibility heuristics on the themes in Web Content Accessibility Guidelines (WCAG) [20] and the design principles in User Agent Accessibility Guidelines (UAAG) [5]. The themes and principles provide a good abstraction level for developing accessibility heuristics. They are explained briefly in the following paragraphs.

The two general WCAG themes are 1) "Ensure graceful transformation", and 2) "Make content understandable and navigable". The first theme includes the following concepts:

- Separate structure from presentation.

- Provide text (including text equivalents). Text can be rendered in ways that are available to almost all browsing devices and accessible to almost all users.

- Create documents that work even if the user cannot see and/or hear. Provide information that serves the same purpose or function as audio or video in ways suited to alternate sensory channels as well. This does not mean creating a prerecorded audio version of an entire site to make it accessible to users who are blind. Users who are blind can use screen reader technology to render all text information in a page.

- Create documents that do not rely on one type of hardware. Pages should be usable by people without mice, with small screens, low resolution screens, black and white screens, no screens, with only voice or text output, etc.

The theme is further developed into WCAG guidelines 1-11. Similarly, the other main WCAG theme, "Make content understandable and navigable", is developed into guidelines 12-14. It includes the following issues:

- Make the language clear and simple.

- Provide understandable mechanisms for navigating within and between pages.

- Provide navigation tools and orientation information in pages to maximize accessibility and usability. Orientation information is especially important for non-sighted users who cannot make use of visual cues, and for users who lose contextual information because they access the page only one word at a time (speech synthesis or Braille display) or one section at a time (small or magnified display).

The UAAG 1.0 design principles are written as guidelines:

- Support input and output device-independence.

- Ensure user access to all content.

- Allow configuration not to render some content that may reduce accessibility.

- Ensure user control of rendering.

- Ensure user control of user interface behavior.

- Implement standard application programming interfaces.

- Observe operating environment conventions.

- Implement specifications that benefit accessibility.

- Provide navigation mechanisms.

- Orient the user.

- Allow configuration and customization.

- Provide accessible product documentation and help.

In summary, these principles ensure access to all content and its structure, provide user control of the presentation, provide navigation mechanisms and orientation to the users, and ensure that content and user agent information is available to assistive technologies.

The themes and principles presented above enhance accessibility, but they are not written in the form of a short list of accessibility heuristics that is easy to remember and keep in mind when designing new graphics or multimedia languages.

3. ACCESSIBILITY HEURISTICS

We started by selecting general principles that would help to explain the accessibility features of specific Web languages in an easy-to-understand use context. The first language-specific Accessibility Note, for SMIL, prompted some changes to the next version of the language. The Accessibility Note for SVG was written while the working group was still refining the language, so some of its content has been incorporated into the SVG specification itself, while other parts serve as guidance on how to author SVG.

Based on the Accessibility Notes and the themes and principles described in the previous section, we came up with six accessibility heuristics and their explanations. Our goal is for these heuristics to be easy to remember and understand so that they can help designers of new markup languages keep accessibility in mind. The heuristics are presented below.

Heuristic 1: Provide alternative equivalents to make information suitable for auditory, visual, and tactile channels. In that way it is possible to follow the presentation even if a user has sensory or cognitive limitations or the device cannot handle some media very well.

As text is easy to create and easy to transfer to almost any sense with the help of assistive technologies, it can usually be used as an alternative to other media. For instance, assistive technology for a blind user can easily convert the alternative text of an image to voice or Braille. With timed material, such as animations and video, the text often needs synchronization, and therefore it might be easier to provide the equivalent directly in audio as well. Section 3.1 explains more about alternative equivalents and their use.

Heuristic 2: Provide means to select equivalent content. Users should be provided flexible means to access the equivalent content in any combination that is most suitable for them because of their disabilities or the limitations of the used devices.

Normally these means are provided by user agents, but some languages also provide switches for selecting content. If the author provides default selections, the author should make sure that nothing in the design prevents flexible user control. Sometimes the defaults can also be negotiated automatically.

Heuristic 3: Provide user control for presentation by separating it from the rest of the content. This benefits users with disabilities or devices with limited capability.

For instance, a blind user may want to define that emphasized text is read in a louder voice, or a user with low vision can change the fonts to a larger size and use colors that have more contrast. This principle can be implemented by using style sheet technology. It is discussed more in Section 3.3.

Heuristic 4: Provide device independent interaction so that users with different input and output devices can easily get to all the available functionality.

This is often achieved by using a user agent that provides access to the functions by emulating a mouse. However, it is good to provide shortcuts that take users to functions without any need for a mouse or spatial positioning. This is hardest when walking in a 3D world, but providing such shortcuts might also help others who are not familiar with 3D navigation with a mouse.

Heuristic 5: Provide semantics for structure. This helps provide alternative ways for user navigation and orientation. This can also help the use of alternative presentations. Use authoring tools that support this.

Semantics can be provided by using the elements of the language in a correct way and by describing the site and page navigation and the structured components with other available means. The languages usually include general grouping elements that can be used to add semantics. The other means include the use of class hierarchy and semantic languages, such as Resource Description Framework (RDF) [11]. These are discussed in more detail in Section 3.5.

Heuristic 6: Provide reusable components. This helps users whose medium makes it more laborious to compare components.

Multimedia often contains application-defined components that are repeated several times. Graphics components in particular are often repeated, but video sequences, and houses or other objects in a 3D model, may also contain the same elements. A user who examines a structured image visually can do so much faster than a blind user who navigates through the structure and the equivalent alternative explanations, or even the graphical components. Reuse of components saves time because a model component needs to be examined only once.

3.1 Provide Alternative Equivalents

All multimedia content needs to be inherently suitable for visual, auditory, and tactile channels or to have alternative equivalents that make it suitable. Markup languages need to offer means for including the equivalents in the language and associating them with the primary content.

Text equivalents are fundamental to accessibility since they may be rendered graphically, as speech, or by a Braille device. They are also easy to handle because they are discrete in nature: they contain no time references and have no intrinsic duration. They are typically specified by attributes, such as the alt or longdesc attribute of the img element in XHTML and SMIL [6], by elements, such as the title and desc elements in SVG [4], or as part of an XHTML object element. The use of elements is preferred because they allow class names, ids, styles, and other information to be attached to the equivalents.

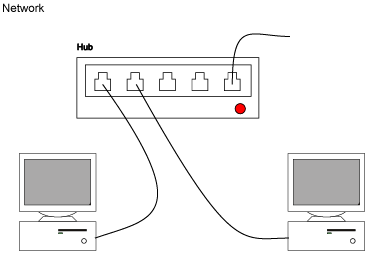

Figure 3.1 shows an SVG image of a Network with a) a graphical rendering and b) rendering of the text equivalents.

Figure 3.1a: An image of a network with embedded structure.

Network: An example of a computer network based on a hub

Hub: A typical 10BaseT/100BaseTX network hub

Computer A: A common desktop PC

Computer B: A common desktop PC

Cable A: 10BaseT twisted pair cable

Cable B: 10BaseT twisted pair cable

Cable N: 10BaseT twisted pair cable

Figure 3.1b: Alternative equivalents describing the components of the network in Figure 3.1a. With additional semantics, connections between the components can be added.
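The following is a minimal sketch of how a user agent or assistive tool might collect title and desc elements from an SVG document to produce a text rendering like the one in Figure 3.1b. The markup is hypothetical: only the titles and descriptions are taken from the figure, while the grouping, the id values, and the function name text_equivalents are our assumptions for illustration.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"

# Hypothetical markup: the titles and descriptions follow Figure 3.1b,
# but the structure and ids are illustrative and the shapes are omitted.
NETWORK_SVG = f"""
<svg xmlns="{SVG_NS}">
  <title>Network</title>
  <desc>An example of a computer network based on a hub</desc>
  <g id="hub">
    <title>Hub</title>
    <desc>A typical 10BaseT/100BaseTX network hub</desc>
  </g>
  <g id="computerA">
    <title>Computer A</title>
    <desc>A common desktop PC</desc>
  </g>
  <g id="cableA">
    <title>Cable A</title>
    <desc>10BaseT twisted pair cable</desc>
  </g>
</svg>
"""


def text_equivalents(svg_source):
    """Return (title, description) pairs for every element with a title child."""
    root = ET.fromstring(svg_source.strip())
    pairs = []
    for element in root.iter():  # document order, starting with the root
        title = element.find(f"{{{SVG_NS}}}title")
        if title is None:
            continue
        desc = element.find(f"{{{SVG_NS}}}desc")
        description = desc.text.strip() if desc is not None else ""
        pairs.append((title.text.strip(), description))
    return pairs


if __name__ == "__main__":
    for title, description in text_equivalents(NETWORK_SVG):
        print(f"{title}: {description}")
```

Because the equivalents are elements rather than attributes, the same traversal could also read class or style information attached to them.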

Multimedia presentations often include continuous equivalents, such as text captions, which describe the spoken dialog and sound effects as text, or auditory descriptions, which are sound tracks describing what is happening visually in the video. Continuous equivalents have intrinsic duration and may contain references to time. For instance, a continuous text equivalent consists of pieces of text, each associated with a time code. They need to be synchronized with other time-dependent media. Continuous equivalents may be constructed out of discrete equivalents, for instance by using the SMIL timing features.
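To illustrate how a continuous text equivalent can be built from discrete pieces of text, the sketch below attaches a begin and end time to each piece and looks up the piece that is active at a given moment. It is not tied to SMIL syntax; the Cue class, the caption_at function, and the caption texts and times are all hypothetical.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class Cue:
    begin: float  # seconds from the start of the presentation
    end: float    # seconds; the cue is active while begin <= t < end
    text: str


# Hypothetical caption track; times and wording are illustrative only.
CAPTIONS = [
    Cue(0.0, 4.0, "[music]"),
    Cue(4.0, 9.5, "Narrator: first caption"),
    Cue(12.0, 15.0, "Narrator: second caption"),
]


def caption_at(cues, t):
    """Return the caption text active at time t, or None if none is active.

    Assumes the cues are sorted by begin time and do not overlap.
    """
    starts = [cue.begin for cue in cues]
    index = bisect_right(starts, t) - 1  # last cue that begins at or before t
    if index < 0:
        return None
    cue = cues[index]
    return cue.text if cue.begin <= t < cue.end else None
```

A player would call such a lookup on every clock tick so that the text stays synchronized with the other time-dependent media.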

Figure 3.2: Image of a WGBH tutorial video with captions.

Figure 3.2 presents an example of an educational video that explains Einstein's theory of relativity by using video and sound. The video was created by the WGBH National Center for Accessible Media [16]. Text captions located under the video window describe the content of the original soundtrack as text. In addition, the video also has an audio description. The user can control the playing of the captions and the audio description from the player user interface.

Other forms of continuous equivalents not explicitly required in WCAG 1.0 may also promote accessibility of multimedia and graphics. Representations of sign language benefit people with auditory limitations and simplified sound tracks may help people with some cognitive difficulties.

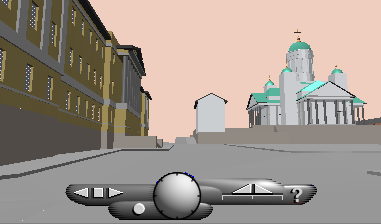

3D worlds need alternative equivalents too, but there are not many examples yet. In 3D the spatial location of the equivalent alternatives becomes important. A blind user turning his head in a 3D world, such as near the Helsinki Senate Square in Figure 3.3, cannot look around but may want to hear what is around him, e.g. Helsinki Senate Square, Helsinki Lutheran Cathedral, Unioninkatu street, and the Main Building of Helsinki University. We could provide discrete equivalents for the houses and for geographical features, such as hills or rivers. The equivalents could also be tied to a certain place or range of places, such as paths through the world.

In 3D we may also need to let the user define the level of detail and the information categories, e.g. what kinds of buildings and stores are included, and up to what distance the located equivalents are read when the user turns. For instance, sometimes the user may want to know generally what exists in the city in a certain direction and not just about the buildings that exist in the visual view.

Figure 3.3: Image of Senaatintori taken from a 3D model of Helsinki.
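The idea of located equivalents that are read according to the user's direction, distance limit, and chosen categories can be sketched as follows. This is only an illustration under our own assumptions: the Landmark class, the audible_equivalents function, the category labels, and especially the coordinates are hypothetical and are not taken from the 3D model of Helsinki.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Landmark:
    name: str       # the discrete text equivalent that would be read out
    category: str
    x: float        # metres east of an arbitrary origin (hypothetical)
    y: float        # metres north of an arbitrary origin (hypothetical)


# Names come from the text; positions and categories are invented.
LANDMARKS = [
    Landmark("Helsinki Senate Square", "square", 0.0, 50.0),
    Landmark("Helsinki Lutheran Cathedral", "church", 0.0, 150.0),
    Landmark("Main Building of Helsinki University", "university", -100.0, 50.0),
    Landmark("Unioninkatu street", "street", -120.0, 0.0),
]


def audible_equivalents(landmarks, position, heading_deg, half_angle_deg,
                        max_distance, categories=None):
    """Return names of landmarks in the sector the user faces, nearest first.

    heading_deg is measured clockwise from north; categories, if given,
    restricts the result to the information categories the user selected.
    """
    px, py = position
    hits = []
    for landmark in landmarks:
        if categories is not None and landmark.category not in categories:
            continue
        dx, dy = landmark.x - px, landmark.y - py
        distance = math.hypot(dx, dy)
        if distance > max_distance:
            continue
        bearing = math.degrees(math.atan2(dx, dy)) % 360
        # Smallest absolute angle between the bearing and the heading.
        offset = abs((bearing - heading_deg + 180) % 360 - 180)
        if offset <= half_angle_deg:
            hits.append((distance, landmark.name))
    return [name for _, name in sorted(hits)]
```

For example, with these invented positions a user standing at the origin and facing north with a 200 metre limit would hear the square and then the cathedral, while narrowing the categories to churches would leave only the cathedral.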

3.2 Provide Means to Select Equivalent Content

Every user is different and may therefore need to see the content through different media at different times. There might be users who can only use media that are suitable for audio, or users with devices capable of showing only small amounts of text at a time. Users should be able to select the equivalent content that suits their needs. This can be achieved by including appropriate features in markup languages as well as in user agents.

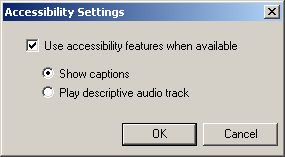

Figure 3.4: Selecting text captions or audio description for a video with RealPlayer.

Technologies such as SMIL offer a lot of control for the authors to decide which media to show at what time and where in a visual layout. In addition, the design should be able to accommodate the user preferences as otherwise it may be impossible for many users to get to the content. For instance, if the user wants to see captions the design should allow that, whether the author has designed an alternative layout for embedding the captions in the user interface or not. The captions benefit not only users with hearing disabilities, but also users who have problems in understanding the spoken language or who are in a noisy environment.

Both SMIL and SVG let authors design alternative user interfaces with the switch technology. This technology can check the values of attributes set by the user or provided by the user agent. Furthermore, the normal features of the SMIL language can be used for adding equivalent content. Unfortunately, in that case the user cannot choose among the different contents. If the different media tracks could be identified and provided to the user agents, the user could select combinations of tracks not predicted by the author.
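The selection performed by a switch can be sketched as choosing the first child whose test attributes all match the user's settings. The sketch below is a simplification under stated assumptions: it handles only the systemCaptions and systemLanguage test attributes (the SMIL 2.0 spellings), uses a reduced language-matching rule, and the file names, the prefs dictionary, and the function names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical SMIL-like fragment; the file names are placeholders.
PRESENTATION = """
<switch>
  <textstream src="captions-fi.rt" systemCaptions="on" systemLanguage="fi"/>
  <textstream src="captions-en.rt" systemCaptions="on"/>
</switch>
"""


def language_matches(value, preferred):
    """Simplified test: a listed tag equals a preferred language or extends it."""
    for tag in value.split(","):
        tag = tag.strip().lower()
        for language in preferred:
            language = language.lower()
            if tag == language or tag.startswith(language + "-"):
                return True
    return False


def is_acceptable(element, prefs):
    """A child is acceptable when every test attribute it carries is true."""
    captions = element.get("systemCaptions")
    if captions is not None:
        wanted = "on" if prefs["captions"] else "off"
        if captions != wanted:
            return False
    languages = element.get("systemLanguage")
    if languages is not None and not language_matches(languages, prefs["languages"]):
        return False
    return True


def select(switch_source, prefs):
    """Return the src of the first acceptable child, or None if there is none."""
    switch = ET.fromstring(switch_source.strip())
    for child in switch:  # children are evaluated in document order
        if is_acceptable(child, prefs):
            return child.get("src")
    return None
```

With these placeholder files, a user who has captions switched on and prefers Finnish would get captions-fi.rt, a user who prefers another language would fall through to captions-en.rt, and a user with captions switched off would get nothing from this switch.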

With SVG, authors can provide information on graphical components at different levels with title and desc elements. When the information is available, the user agent or a player can let the user select the level at which it is provided. The selection could be done with a style sheet or through an interface provided by the user agent.

In 3D interfaces, the selection of alternative equivalents becomes even more complicated. It is difficult for the author to provide alternatives on all possible abstraction levels. However, some default alternatives can be provided. For instance, an author could provide an alternative equivalent for ready-made tours through a model. When alternative information is constructed and labeled automatically from information attached to components in the 3D world, the user should be able to select the level of detail preferred and from which areas the information should be provided.

3.3 Provide User Control for Presentation

Users need to be able to control how the information is presented so that they can adapt the rendering to meet their specific needs. A user who cannot see small blinking text or read red text on a green background should be given the option of changing the colors or font size and stopping the blinking so that he can get to the information that otherwise would have been outside his reach.

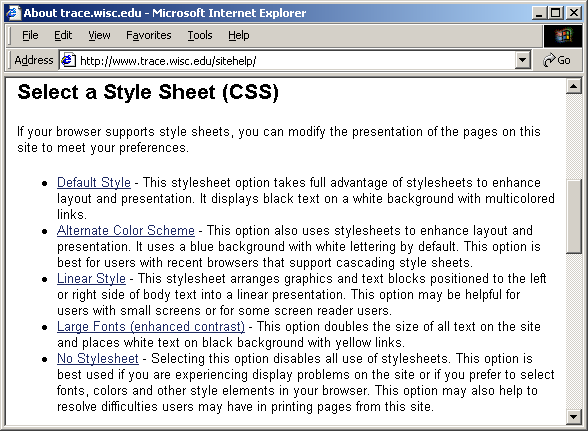

Part of the user control can be provided by separating the presentation from the structure and content in the language with style sheet technologies, such as CSS [13, 14] or XSL [1]. The other part should be provided by the user agent. Some of the control can be quite automatic from the user's point of view, as the user agent interprets the current user settings and applies them, while some settings are selected only when problems appear.
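As a rough illustration of how style sheets give the user the last word, the sketch below resolves competing declarations using the CSS2 ordering of origins, in which a user declaration marked important overrides the author's declarations. It is deliberately simplified: selectors and specificity are ignored, and the cascade function and the sample declarations are our own assumptions.

```python
# Ascending precedence of (origin, important) pairs, following CSS2.
PRECEDENCE = {
    ("user agent", False): 0,
    ("user", False): 1,
    ("author", False): 2,
    ("author", True): 3,
    ("user", True): 4,
}


def cascade(declarations):
    """Pick the winning value for each property.

    declarations is an iterable of (origin, property, value, important)
    tuples in source order; on equal precedence the later one wins.
    """
    winners = {}
    for origin, prop, value, important in declarations:
        rank = PRECEDENCE[(origin, important)]
        if prop not in winners or rank >= winners[prop][0]:
            winners[prop] = (rank, value)
    return {prop: value for prop, (_, value) in winners.items()}


# Hypothetical author design (small blinking red text on green) and the
# overrides of a user who cannot read it.
DECLARATIONS = [
    ("author", "color", "red", False),
    ("author", "background-color", "green", False),
    ("author", "font-size", "8pt", False),
    ("author", "text-decoration", "blink", False),
    ("user", "color", "black", True),
    ("user", "background-color", "white", True),
    ("user", "font-size", "18pt", True),
    ("user", "text-decoration", "none", True),
]

if __name__ == "__main__":
    print(cascade(DECLARATIONS))
```

Without the important marking, the author's normal declarations would take precedence over the user's normal ones, which is why the user agent also needs to expose such overrides in its settings.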