Inclusive User Modelling and Applications

- Pradipta Biswas & Pat Langdon, University of Cambridge, UK, pb400@cam.ac.uk

Introduction

Consider an elderly user who has just bought a new computer and started to use it. He has mild age-related visual impairment and a tremor in his fingers. It would be easier for him if the font size and inter-button spacing were increased. However, he would have to change the system settings himself. These settings are not easily accessible to him, and it is also difficult to find a manual explaining how to change them. Now imagine that the system understands its user and automatically adjusts these settings as soon as he logs in. It would be even more beneficial if the same settings were seamlessly applied to his other electronic devices, such as his TV and smartphone. Such changes often need only slight tweaks to the design, such as changing colour contrast, increasing font size or rearranging button layouts. Yet they can make products far more usable and increase their market coverage.

Literature Survey

User modelling often complements existing accessibility guidelines [11], helping designers to adapt user interfaces automatically. The SUPPLE project [10] personalizes interfaces for people with visual and motor impairment, and for ubiquitous devices, by changing layout and font size. However, its user models do not consider visual and motor impairment in detail. They therefore work only for loss of visual acuity and a few types of motor impairment. The CogTool system [6] combines GOMS models [5] and the ACT-R architecture [1] to provide quantitative predictions of interaction. GOMS modelling simulates expert performance, while ACT-R simulates the exploratory behaviour of novice users. CogTool also provides GUIs for quickly prototyping interfaces and for evaluating design alternatives on the basis of these quantitative predictions. However, it does not yet seem to have been used for users with disabilities or with assistive interaction techniques.

Quade's simulation [8] uses a probabilistic rule-based system to predict the effect of sensory or motor impairment on a design. However, it does not model perceptual and motor abilities in as much detail as our simulation system. Nor does its rule-based system appear to have been validated for users with age-related impairment and with different types of physical impairment (visual, hearing and motor), as ours has been. The Global Public Inclusive Infrastructure (http://gpii.net/) and the EU Cloud4All project do not include a user performance simulation. They work mainly from users' explicitly stated preferences. A sufficiently validated performance simulation can cover a wider range of users than existing systems. It can also shorten the development time of any new interface, because designers can run an initial validation without conducting long user trials.

Inclusive User Model

We have identified a set of human factors that can affect human-computer interaction and formulated models [2, 3] that relate those factors to interface parameters. On this basis we have developed an inclusive user model. It is more detailed than the GOMS model [5] and easier to use than models based on cognitive architectures [1, 7]. It also covers a wider range of users than existing user models for disabled users. The user model is implemented through a simulator and a set of web services.

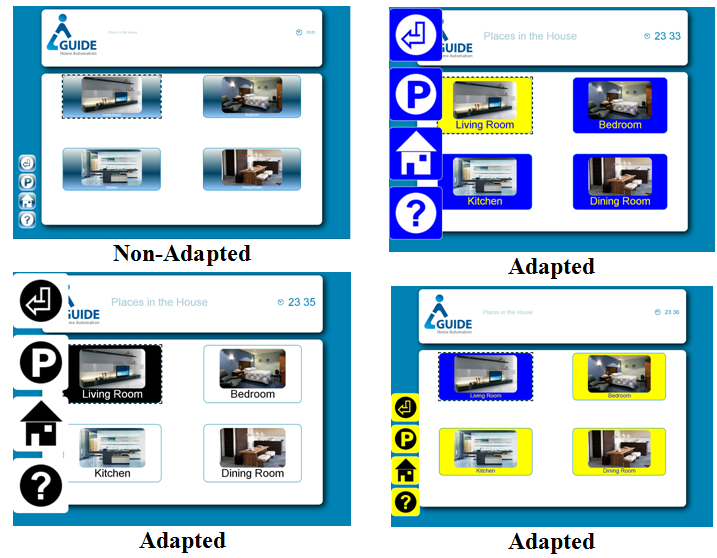

The simulator takes as input a task definition and the locations of different objects in an interface. From these it predicts possible eye movements and cursor paths on the screen, and uses those predictions to estimate task completion times for different user profiles. The simulator embodies both the internal state of an application and the perceptual, cognitive and motor processes of its user. For example, it can predict how a person with visual acuity v and contrast sensitivity s will perceive an interface. It can likewise predict how a person with grip strength g and wrist range of motion w will use a pointing device. Running the simulator in Monte Carlo simulations yielded a set of rules relating users' range of abilities to interface parameters. These rules have been implemented as a set of web services that dynamically adjust font size, cursor size, colour contrast, audio volume (for DTV systems) and spacing between interface elements (Figure 1).
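The Monte Carlo step can be illustrated with a minimal sketch. The published simulator uses its own perception and motor models [2, 3]. Here, purely as a stand-in, pointing time is predicted with the Shannon form of Fitts' law. The way grip strength inflates the Fitts' slope, the coefficients, the 1.5 s time budget and the 90% criterion are all hypothetical. The sketch samples users within a grip-strength band and returns the smallest button width that keeps 90% of them within the budget. This is one way a rule relating an ability range to an interface parameter could be derived.

```python
import math
import random

# Stand-in motor model (NOT the simulator's model): Shannon form of Fitts' law,
# MT = a + b * log2(D / W + 1). Coefficients are illustrative assumptions.
A_MS = 100.0          # intercept in milliseconds (assumed)
B_MS_PER_BIT = 150.0  # slope for an unimpaired user (assumed)


def pointing_time_ms(distance_px, width_px, grip_strength_kg):
    """Predicted pointing time; weaker grip inflates the slope (hypothetical scaling)."""
    impairment = max(1.0, 30.0 / grip_strength_kg)
    index_of_difficulty = math.log2(distance_px / width_px + 1)
    return A_MS + B_MS_PER_BIT * impairment * index_of_difficulty


def min_width_for_band(grip_low, grip_high, distance_px=400, budget_ms=1500,
                       widths=range(10, 201, 10), n_users=1000, seed=0):
    """Monte Carlo: sample users whose grip strength lies in [grip_low, grip_high]
    and return the smallest candidate button width for which the 90th-percentile
    predicted pointing time is within budget_ms (None if no width qualifies)."""
    rng = random.Random(seed)
    users = [rng.uniform(grip_low, grip_high) for _ in range(n_users)]
    rank = (9 * n_users) // 10  # 1-based rank of the 90th percentile
    for width in widths:
        times = sorted(pointing_time_ms(distance_px, width, g) for g in users)
        if times[rank - 1] <= budget_ms:
            return width
    return None


if __name__ == "__main__":
    # Derive one hypothetical "rule" per grip-strength band.
    for low, high in [(5, 10), (10, 20), (20, 40)]:
        print(f"grip {low}-{high} kg -> min button width "
              f"{min_width_for_band(low, high)} px")
```

Each band yields one entry of a rule table (ability range to minimum target size). A web service could then look up that table at run time instead of re-running the simulation.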

Interface designers have used the simulator to improve their designs, and Figures 2a and 2b show one example. In Figure 2a the font size was small, and the buttons were close enough together to be mis-clicked by a person with hand tremor. Through simulation, the designer chose an appropriate font type (Tiresias in this case), font size and inter-button spacing. As Figure 2b shows, the new interface remains legible even to users with moderate visual impairment. Its inter-button spacing is also large enough to avoid mis-clicks by users with moderate motor impairment. In Figure 2b the purple lines show simulated cursor trajectories of users with hand tremor.

Figure 2: (a) Initial interface; (b) changed interface with simulation of a medium visual and motor impaired profile.
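The relationship between tremor and inter-button spacing can be approximated analytically. This is not the simulator's cursor-trajectory model. As a simplifying assumption, the click endpoint aimed at a button's centre is treated as a one-dimensional Gaussian. Its standard deviation stands in for tremor amplitude, and each button is assumed to have same-width neighbours on both sides in a horizontal row. The sketch returns the smallest gap that keeps the chance of hitting a neighbour at or below a chosen threshold. The 1% threshold is an arbitrary choice.

```python
import math


def normal_cdf(x):
    """Standard normal cumulative distribution function via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def wrong_button_probability(width_px, gap_px, tremor_sd_px):
    """Probability that a click aimed at a button's centre lands on either
    neighbour, assuming 1-D Gaussian endpoint scatter (tremor_sd_px > 0)
    and same-width neighbours on both sides."""
    near = (width_px / 2 + gap_px) / tremor_sd_px             # neighbour's near edge
    far = (width_px / 2 + gap_px + width_px) / tremor_sd_px   # neighbour's far edge
    one_side = normal_cdf(far) - normal_cdf(near)
    return 2 * one_side  # symmetric: left and right neighbours


def min_gap(width_px, tremor_sd_px, max_error=0.01, step_px=1, limit_px=500):
    """Smallest gap (in steps of step_px) whose wrong-button probability is at
    most max_error; None if no gap up to limit_px qualifies."""
    gap = 0
    while gap <= limit_px:
        if wrong_button_probability(width_px, gap, tremor_sd_px) <= max_error:
            return gap
        gap += step_px
    return None
```

For example, under these assumptions, 40 px buttons with a 10 px endpoint spread give `min_gap(40, 10) == 6`. Doubling the spread to 20 px pushes the required gap to 32 px. This reflects why a designer would widen spacing for a tremor profile, as in Figure 2b.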

A demonstration version of the simulator can be downloaded from http://www-edc.eng.cam.ac.uk/~pb400/CambridgeSimulator.zip. Demonstration versions of the user modelling web services can be found at the following links:

- User Sign Up: This application creates a user profile. http://www-edc.eng.cam.ac.uk/~pb400/CambUM/UMSignUp.htm

- User Log In: This application predicts interface parameters and modality preference based on the user profile. http://www-edc.eng.cam.ac.uk/~pb400/CambUM/UMLogIn.htm

- Adaptation Example: This application renders a single webpage differently based on user profiles. http://www-edc.eng.cam.ac.uk/~pb400/CambUM/UMStyleSelect.htm

- Video demonstration: http://youtu.be/MiYp-d6rSXM
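The User Log In service maps a stored profile to interface parameters. The services' actual rules come from the simulation and are not reproduced in this paper. The following is therefore only a hypothetical illustration of the shape of such a mapping: the profile fields, their units and every threshold are assumptions. Font size is scaled with the minimum angle of resolution (MAR) implied by a logMAR acuity score, because the letter size a reader can resolve grows roughly in proportion to MAR.

```python
from dataclasses import dataclass


@dataclass
class UserProfile:
    # Hypothetical profile fields; units are assumptions for illustration.
    visual_acuity_logmar: float   # 0.0 = typical adult acuity; higher is worse
    contrast_sensitivity: float   # log contrast sensitivity; about 2.0 is typical
    grip_strength_kg: float
    wrist_rom_deg: float          # wrist range of motion in degrees
    hearing_loss_db: float        # average hearing threshold shift


@dataclass
class InterfaceParameters:
    font_size_pt: int
    cursor_scale: float
    high_contrast: bool
    button_spacing_px: int
    volume_percent: int


BASE_FONT_PT = 12  # hypothetical default font size


def adapt(profile: UserProfile) -> InterfaceParameters:
    """Map a user profile to interface parameters using hypothetical rules."""
    # MAR = 10 ** logMAR; scale text with it, capped at 48 pt.
    mar = 10 ** max(0.0, profile.visual_acuity_logmar)
    font = min(round(BASE_FONT_PT * mar), 48)
    # Larger cursor for poorer acuity (threshold assumed).
    cursor = 2.0 if profile.visual_acuity_logmar >= 0.5 else 1.0
    # High-contrast theme when contrast sensitivity is reduced (threshold assumed).
    high_contrast = profile.contrast_sensitivity < 1.5
    # Wider spacing for weak grip or limited wrist movement (thresholds assumed).
    motor_limited = profile.grip_strength_kg < 20 or profile.wrist_rom_deg < 60
    spacing = 20 if motor_limited else 5
    # Raise volume with hearing loss, capped at 100%.
    volume = min(100, 50 + max(0, round(profile.hearing_loss_db)))
    return InterfaceParameters(font, cursor, high_contrast, spacing, volume)
```

Because the mapping runs on the profile rather than on any one device, the same profile could in principle drive the PC, TV and smartphone settings described in the Introduction.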

The simulator and user modelling web services were validated [2, 3] through a series of user trials. These trials measured the eye-gaze tracks, pointer movements and audiograms of people with various degrees of visual, hearing and motor impairment. The system has also contributed to the standardisation of user models through the EU VUMS cluster [4].

Conclusions

This paper presents a new type of user model for people with a wide range of abilities, implemented through a simulator and a set of web services. The simulator complements existing user-centred design processes. It helps designers to understand, visualize and measure the effect of impairments on interaction. The web services adapt interfaces across different devices and types of users. The system has been validated through a series of user trials [2, 3], which confirmed its usefulness for users with a wide range of abilities.

References

[1] Anderson J. R. and Lebiere C., The Atomic Components of Thought, Hillsdale, NJ, USA: Lawrence Erlbaum Associates, 1998.

[2] Biswas P. and Langdon P., Developing multimodal adaptation algorithm for mobility impaired users by evaluating their hand strength, International Journal of Human-Computer Interaction 28(9), 2012.

[3] Biswas P., Langdon P. and Robinson P., Designing inclusive interfaces through user modelling and simulation, International Journal of Human-Computer Interaction 28(1), 2012, Print ISSN: 1044-7318.

[4] EU VUMS Cluster, Available at: http://www.veritas-project.eu/vums/?page_id=56, Accessed on 12/12/2012.

[5] John B. E. and Kieras D., The GOMS Family of User Interface Analysis Techniques: Comparison and Contrast, ACM ToCHI 3, 1996.

[6] John B. E., Reducing the Variability between Novice Modelers: Results of a Tool for Human Performance Modelling Produced through Human-Centered Design, Behavior Representation in Modelling and Simulation (BRIMS), 2010.

[7] Newell A., Unified Theories of Cognition, Cambridge, MA, USA: Harvard University Press, 1990.

[8] Quade M. et al., Evaluating User Interface Adaptations at Runtime by Simulating User Interaction, British HCI 2011.

[9] The GUIDE Project, URL: http://www.guide-project.eu/, Accessed on 23rd March 2013.

[10] The SUPPLE Project, URL: http://www.cs.washington.edu/ai/supple/, Accessed on 23rd March 2013.

[11] WAI Guidelines and Techniques, Available at: http://www.w3.org/WAI/guid-tech.html, Accessed on 4th December 2012.