Minutes

Attendees

- Nagesh Kharidi, Openstream

- Jim Barnett, Genesys

- Debbie Dahl, Conversational Technologies

- Michael Liguori, What Are Minds For, Inc

- Holger Banski, Bosch

- Craig Campbell, iSpeech

- Yoshiaki Kozaki, NTT-AT

- Antonio Teixeira, DETI/IEETA

- Noreen Whysel, IA Institute

- Bev Corwin, IA Institute

- Sheau Ng, NBCUniversal

- Wei-Yun Yau, Institute for Infocomm Research

- Jens Bachmann, Panasonic

- Ram Bojanki, Panasonic

- Ryosuke Aoki, NTT

- Masaki Umejima, JSCA and Keio University

- Masao Isshiki, W3C/Keio

- Kaz Ashimura, W3C/Keio

- Michael Johnston, AT&T

- Raj Tumuluri, Openstream

- Hari Saravanan, Openstream

- Amy Neustein, Linguistic Technology Systems

- Myra Einstein, NBCUniversal

- Suresh Ganesan, Cognizant

- Phil Sheehy, Openstream

- Peter Rosenberg, NBCUniversal

Chairs

Debbie Dahl, Raj Tumuluri, Jim Barnett, Kaz Ashimura

Scribe

Debbie, Kaz

Contents

- Day1 (22 July 2013)

  - AM1: Introduction, local logistics and demos

  - AM2: Overview of existing standards (panel)

  - PM1: Building multimodal applications

  - PM2: Panel on use cases (following lightning talks and/or demos)

    - Masaki Umejima - JSCA and Keio University & Masao Isshiki - ECHONET Consortium

    - Craig Campbell - iSpeech, Inc

    - Holger Banski - Bosch

    - Noreen Whysel - IA Institute

    - Ryosuke Aoki - NTT

    - Debbie Dahl for Brian Dooner - iVVi Media

    - Myra Einstein - NBCUniversal

    - Wei-Yun Yau - Institute for Infocomm Research

    - Yoshiaki Kozaki - NTT Advanced Technology Corporation

- Day2 (23 July 2013)

  - AM1: Discussion on new directions

  - AM2: Further discussion on selected new directions

  - PM1: Further discussion on selected new directions (contd.)

  - PM2: Hands on session - No minutes from this session

Summary of Action Items

[NEW] ACTION: kazuyuki to put together a page of resources for mmi [recorded in http://www.w3.org/2013/07/23-multimodal-minutes.html#action01]

[NEW] ACTION: raj to organize webinar on service registration and discovery for mmi applications [recorded in http://www.w3.org/2013/07/mmi/minutes.php#action02]

[NEW] ACTION: kaz to send information about subscribing to mailing list [recorded in http://www.w3.org/2013/07/mmi/minutes.html#action03]

Day 1

- Morning Chair:

- Raj Tumuluri

Introduction, local logistics and demos

- Scribe:

- Kaz Ashimura

Raj Tumuluri on Introduction and Logistics - Openstream

raj: explains logistics

Suresh Ganesan - Cognizant

[Slides TBD]

suresh: health care demo

suresh: information comes while driving

... also various devices and modality channels

at: speech/gesture control

raj: how to handle multi devices?

at: some ideas using the MMI Architecture

raj: is the speech modality server-side?

at: no, client-side

... remote server is also available

raj: does the browser handle the speech data?

at: no, the OS handles it

... e.g., Win8 has that capability

jim: is a special model used for recognition?

at: one of the project members creates the model

liguori: how much data?

at: 100 hours

jim: just adding more recorded data is not useful; at some point the accuracy will actually go down

at: gather data using online system

... very complicated and time-consuming effort

Deborah Dahl - Conversational Technologies

Antonio Teixeira - DETI/IEETA

(Break)

Overview of existing standards (panel)

MMI Architecture (Debbie Dahl)

[Slides]

ddahl: no transport mechanism specified

... Modality Components only communicate with the Interaction Manager

... their functionality is exposed only through Lifecycle events

... standard info and app-specific info

holger: where should the knowledge base reside?

jim: two places

... in your MC itself or IM

... up to your application

... IM can contain the context

... and MC specific info could be stored at each MC
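The points above (MCs talk only to the IM, there is no specified transport, and all functionality is exposed through lifecycle events carrying both standard and application-specific information) can be illustrated with a minimal Python sketch. The event names StartRequest, StartResponse and DoneNotification and the Context/Source/Target/RequestID/Status/Data fields come from the MMI Architecture; the classes, the `route` method, the handler and the component URIs are hypothetical, and events are passed as in-memory objects rather than over any real transport.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

@dataclass
class LifecycleEvent:
    # Standard fields shared by MMI lifecycle events
    name: str            # e.g. "StartRequest", "StartResponse", "DoneNotification"
    context: str
    source: str
    target: str
    request_id: str
    status: str = ""     # used by responses/notifications, e.g. "success"
    # Application-specific payload (e.g. an EMMA result); opaque to the IM
    data: Dict[str, Any] = field(default_factory=dict)

# Hypothetical handler type: an MC receives one event and returns its replies.
Handler = Callable[[LifecycleEvent], List[LifecycleEvent]]

class InteractionManager:
    """Hypothetical IM: the only party that modality components talk to."""

    def __init__(self, uri: str) -> None:
        self.uri = uri
        self.components: Dict[str, Handler] = {}
        self.log: List[LifecycleEvent] = []

    def register(self, uri: str, handler: Handler) -> None:
        self.components[uri] = handler

    def route(self, event: LifecycleEvent) -> None:
        # Deliver an IM-originated event to one MC and record the MC's replies.
        self.log.append(event)
        for reply in self.components[event.target](event):
            assert reply.target == self.uri  # MCs only ever reply to the IM
            self.log.append(reply)

def voice_mc(event: LifecycleEvent) -> List[LifecycleEvent]:
    # Hypothetical voice MC: acknowledges StartRequest, then reports a result.
    if event.name != "StartRequest":
        return []
    common = dict(context=event.context, source=event.target,
                  target=event.source, request_id=event.request_id)
    return [
        LifecycleEvent("StartResponse", status="success", **common),
        LifecycleEvent("DoneNotification", status="success",
                       data={"utterance": "flights from Boston to Denver"}, **common),
    ]

im = InteractionManager("im://main")
im.register("mc://voice", voice_mc)
im.route(LifecycleEvent("StartRequest", context="ctx-1", source="im://main",
                        target="mc://voice", request_id="req-1"))
for e in im.log:
    print(e.name, e.status, e.data)
```

Keeping the application data in a separate `data` field mirrors the split between standard and app-specific information; where context lives (IM or MC) stays an application choice, as noted in the discussion.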

SCXML (Jim Barnett)

[Slides]

jim: what is a "Multimodal Application" like?

... unpredictable state for application

... sub-stateful and cross-stateful

... not a simple event loop

... JavaScript+HTML will get ugly

... State Chart XML

... based on Harel statecharts and UML state machine diagrams

... much industry background

... basic concept

... states, events and transitions

... (sample SCXML code)

... shopping and checkout

... datamodel is pluggable

... (another SCXML sample code)

... conditions on transitions

... (third SCXML sample code)

... two different checkout states

... executable content

... no shipping charges for goldCheckout

... (fourth SCXML sample code)

... checkoutDone and send report

... compound (nested) states

... parallel states

... (fifth SCXML sample code)

... IM is SCXML

... keep MCs independent and integrate them using IM

... e.g., voice and haptic sides should be separate

sheau: question on parallel states processing

jim: algorithm for processing parallel states is strictly defined

at: does fusion need a second IM?

jim: you can use any combination

... e.g., a specific IM could be used just for fusion
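The slide samples themselves are not reproduced in these minutes. As a rough illustration of the concepts Jim walked through (states, events, transitions with conditions, and executable content that sets no shipping charge for goldCheckout), here is a minimal Python sketch. It is not SCXML and not the code from the slides; the event names, the flat shipping charge and the data fields are hypothetical, and compound and parallel states are deliberately left out.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

@dataclass
class Transition:
    event: str
    target: str
    # Guard, analogous to an SCXML cond attribute; None means "always enabled"
    cond: Optional[Callable[[dict], bool]] = None

class StateMachine:
    """Tiny flat state machine: states, events and guarded transitions."""

    def __init__(self, initial: str, transitions: Dict[str, List[Transition]],
                 on_entry: Dict[str, Callable[[dict], None]], datamodel: dict) -> None:
        self.state = initial
        self.transitions = transitions
        self.on_entry = on_entry   # executable content run on entering a state
        self.data = datamodel      # stands in for the pluggable datamodel

    def send(self, event: str) -> None:
        # Take the first transition, in document order, whose event matches
        # and whose condition holds.
        for t in self.transitions.get(self.state, []):
            if t.event == event and (t.cond is None or t.cond(self.data)):
                self.state = t.target
                if t.target in self.on_entry:
                    self.on_entry[t.target](self.data)
                return
        # No enabled transition: the event is simply discarded.

def enter_checkout(d: dict) -> None:
    d["shipping"] = 5.00  # hypothetical flat charge

def enter_gold_checkout(d: dict) -> None:
    d["shipping"] = 0.00  # no shipping charges for goldCheckout

def enter_checkout_done(d: dict) -> None:
    # Stands in for sending a report when checkout is done.
    d["report"] = f"total={d['subtotal'] + d['shipping']:.2f}"

machine = StateMachine(
    initial="shopping",
    transitions={
        "shopping": [
            Transition("checkout", "goldCheckout", cond=lambda d: d["gold_member"]),
            Transition("checkout", "checkout"),
        ],
        "checkout": [Transition("pay", "checkoutDone")],
        "goldCheckout": [Transition("pay", "checkoutDone")],
    },
    on_entry={"checkout": enter_checkout, "goldCheckout": enter_gold_checkout,
              "checkoutDone": enter_checkout_done},
    datamodel={"gold_member": True, "subtotal": 40.0},
)
machine.send("checkout")
machine.send("pay")
print(machine.state, machine.data["report"])
```

The guarded transition is listed before the unguarded one so that gold members are routed to goldCheckout first, which is the same document-order selection SCXML uses among enabled transitions.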

EMMA (Michael Johnston)

[Slides]

michaelJ: editor of the EMMA spec

... multimodal interactive systems

... EMMA

... Multimodal Architecture

... EMMA Example: flights from Boston to Denver

... Annotations

... Multimodal EMMA Result

... Multimodal Interpretations derived from multiple modalities

... tomorrow we'll have future use cases

liguori: multiple results?

michaelJ: for example, you ask for N-best

... and get multiple results from the recognition server

... and can choose one of them

... the detail depends on each implementation

... some servers can provide a lattice structure

raj: what's the best practice for timestamps?

... what if the devices are in different time zones?

michaelJ: you don't know the real time when integrating input from multiple time zones

... you can ask for client's time

jim: that's the argument for the fusion use case

michaelJ: multimodal fusion is done by the IM

... good topic for EMMA issues

jim: you have multiple devices and the clocks are not synchronized

... that is the worst case

sheau: you could have time shift capability

<dadahl> issue: revisit time-keeping in EMMA, for example, how to deal with time zones

<trackbot> Created ISSUE-226 - Revisit time-keeping in EMMA, for example, how to deal with time zones; please complete additional details at <https://www.w3.org/2002/mmi/Group/track/issues/226/edit>.

sheau: but that is a mess

jim: if we use satellite connection, there might be time delay

michaelJ: we can provide a genesis of timestamp

jim: the first thing is establishing a connection, and the second should be time synchronization

... between multiple devices

kaz: will also talk about that issue tomorrow
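The group did not settle on a mechanism here. One common way to do the "connect first, then synchronize time" step Jim describes is the NTP-style four-timestamp exchange, sketched below in Python. This is not something specified by EMMA or the MMI Architecture; it assumes all timestamps are integer milliseconds since the Unix epoch in UTC (which takes time zones out of the picture), a device synchronizing against a single reference clock such as the IM's, and hypothetical function names and sample values.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class SyncSample:
    t0: int  # request sent      (device clock, ms since epoch, UTC)
    t1: int  # request received  (reference clock)
    t2: int  # reply sent        (reference clock)
    t3: int  # reply received    (device clock)

def estimate_offset(s: SyncSample) -> Tuple[float, int]:
    """NTP-style estimate returning (offset_ms, round_trip_delay_ms).

    offset_ms is what must be added to the device clock to obtain reference
    time; the estimate assumes the network delay is the same in both directions.
    """
    offset = ((s.t1 - s.t0) + (s.t2 - s.t3)) / 2
    delay = (s.t3 - s.t0) - (s.t2 - s.t1)
    return offset, delay

def best_offset(samples: List[SyncSample]) -> float:
    # Trust the exchange with the shortest round trip: it carries the least
    # queuing noise, which matters on slow links such as satellite connections.
    return min((estimate_offset(s) for s in samples), key=lambda od: od[1])[0]

def to_reference(local_ms: int, offset_ms: float) -> float:
    # Map a device-local timestamp onto the shared reference timeline.
    return local_ms + offset_ms

# Device clock runs 1500 ms behind the reference; the second exchange hit a slow path.
samples = [SyncSample(10_000, 11_600, 11_610, 10_210),
           SyncSample(20_000, 21_900, 21_910, 20_510)]
offset = best_offset(samples)
print(offset, to_reference(30_000, offset))
```

Once every device's timestamps are mapped onto the same reference timeline, an IM doing fusion can order inputs from different devices; asymmetric delays (as in the second sample) still bias the estimate, which is why the shortest round trip is preferred.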

Related standards and technologies (e.g. HTML5, Ajax) (Kazuyuki Ashimura)

[Slides]

- Scribe:

- Debbie Dahl

kaz: various methods for web and device integration

... http query string + CGI

... for server-side solutions

... html client

... plugins are a proprietary approach

... Ajax is another approach

... more recently, HTML5 + JavaScript APIs, using Web APIs


... about two years ago, Web Intents came into the picture; both device and server need web server capability

... all the methods have some of the same questions: difficulties with defining APIs and integrating distributed services

... MMI Architecture and EMMA make inter-modality cooperation easier

... in that case we don't have to care about the details of how to implement the communication; components can be implemented by different companies

... for example, the interoperability test from the MMI Architecture Recommendation

jimB: the big question is what to do next? what would other people like to work on?

... clearly we have to think about representing time in more detail

debbie: what use cases have people been thinking of?

sheau: how can we maintain the experience in time-shifted situations?

... for example, pausing and storing up tweets

... how to deal with on-demand situations

... how to recreate real-time experience?

masao: interested in home networking, other modalities like eye-contact

raj: distributed multimodal systems, for example a system is independent (like your phone) but then it becomes paired with an automobile

... and becomes part of a larger system

jim: the existing architecture is very general, but needs more detail

... is one use case to replay events in real time?

sheau: would like to recreate the experience of watching in real time

jim: there was probably some work in the 1980s on the logic of branching time

amy: would be useful for legal and forensic purposes

... to have some more details on time

wy: would the problems of interoperability be affected by individual implementations?

... different people might have different ways of partitioning the system

jim: in SCXML, there might be differences in the implementation of the error handling, or application-specific part of the system

wy: how do we make the experience consistent?

jim: an industry could agree on principles of error handling

wy: without this happening, you might not have a consistent user experience

... the IM could be customized for different industries

debbie: two comments, one is that it's hard to have hard standards around UI things, but there could be best practices, and secondly, these best practices could be worked out in a business group, which we will talk about more tomorrow

kaz: right. we can do the discussion within the MMI WG, but could also create a specific BG. BTW, as part of the answer to Wei-Yun, the details of TV/video capability should be handled using DLNA commands, and the IM should concentrate on the application lifecycle

jim: IVRs were very competitive at one time, but there was an advantage to standardizing VoiceXML, and there was still room for competition

sheau: if we have hidden anchor points, is that of use to the story-telling part of media?

... you might be able to skip certain parts of the story

debbie: want an application that remembers when I fell asleep

... would like best practices for structuring the application-specific parts of an event (data tag)

sheau: an example of metadata from a movie might be sentiment, you could add sentiment labels for specific parts of a movie, for example

amy: sentiment analysis cries out for standardization, looked at user-generated content for restaurant reviews, for example, star ratings were different from actual content of review

raj: if the star rating is different from the text, which one is more accurate?

amy: first decide if the overall review is positive or negative

... for example, looking at disclaimers

... uses text to decide what the true sentiment is

(Lunch)

- Afternoon Chair:

- Jim Barnett

Building multimodal applications

- Scribe:

- Kaz Ashimura

Demonstration of tools and techniques (Nagesh Kharidi, Openstream)

[slides TBD]

nagesh: Cue-Me toolkit for multimodal applications

... SCXML program

... Camera Component

... Invoke the voice component to play a prompt

... speech capability could be implemented locally or on the server side

... (starts the demo)

... Cue-Me Studio

raj: don't need to know the detail of each device

liguori: local or remote?

nagesh: battery is an example of local info

sheau: is video also available?

raj: yes

myra: speech rec by AT&T?

raj: could be any engine

dadahl: how hard to integrate another speech engine?

raj: no big difference

... abstraction is defined by MMI Architecture

amy: how many different environments?

... has it been done already?

... different hospitals/countries?

raj: already done

amy: next step for data mining using standard architecture?

raj: not only text-based search but also image-based search is possible

wy: privacy issue?

raj: it conforms to the relevant laws

... you can take a picture and do a search using that

liguori: search could be done within secure area

raj: Cue-Me is not open-source but open implementation

(Break)

Panel on use cases (following lightning talks and/or demos)

Masaki Umejima - JSCA and Keio University & Masao Isshiki - ECHONET Consortium

[Umejima's Paper, Isshiki's Paper, Slides]

liguori: development center?

masao: actually, certification center

ddahl: ECHONET?

umejima: all the CE devices speak ECHONET

<masao> http://sh-center.org/en/

<masao> http://www.echonet.gr.jp/english/index.htm

<masao> sdk on the site

Craig Campbell - iSpeech, Inc

[Slides TBD]

craig: TTS and Speech Rec

craig: new use cases: entertainment, mobile, elearning, etc.

... technical challenges

... standards & HTML5

... impact of mature standards on speech technology, e.g., VoiceXML, SSML, SRGS and MRCP

... Talkz solution

... text and speech

... available for iOS, Android, Windows and BlackBerry

... multimodality for universal accessibility


Holger Banski - Bosch

holger: 4 business sectors: automotive, industrial, energy/building, consumer goods

... MMI Components for cars: head unit, head-up display, instrument cluster, eye tracking, gesture control, haptic input/feedback, speech recognition, TTS, AR glasses, tablet, smartphone

... UI framework

... layered structure including interaction manager and service manager

... HTML5-based UI framework

... based on standard Web technologies

masaki: your concept is similar to the ECHONET approach :)

... will all cars speak IP in the future?

holger: think so

... the in-car network is also likely to become IP-based

... managing everything using a standard connection would be a good idea

amy: is there a moderate way to protect your privacy on the highway, etc.?

... is there any way to shut it off?

holger: personally not sure

jim: you can shut it off for some uses

... people have started to use video cameras to record everything

amy: what about driver-less car?

raj: how is the IM implemented?

holger: not one IM

... would like to use this framework for automation as well

kaz: regarding Amy's question, would like to hear opinions on how to handle privacy within EMMA 1.1

Noreen Whysel - IA Institute

noreen: provide a framework for development

... test case: Web site redesign

liguori: how can we get involved?

noreen: Web page is available

craig: is this proprietary?

noreen: open

Ryosuke Aoki - NTT

ryosuke: Information in disaster situations

... how to get necessary information whenever you want?

... UI proposal

<Masaki> Big problem in Japan's mobile phone is that device's interface is locked by each operator

ryosuke: screen structure

... recognize each component within the whole HTML displayed on the screen

... iframe vs. HTML5

... trimmed info

... mashup

jim: what about audio and movie?

ryosuke: currently just handling text and images

... in the future, would like to handle movies, etc., but there is a copyright issue

Debbie Dahl for Brian Dooner - iVVi Media

ddahl: interactive eBooks

... can talk with Brian about the detail tomorrow

raj: user initiated?

ddahl: the system can highlight the specific text whose audio is being played

... the audio speech could be generated by TTS

Myra Einstein - NBCUniversal

myra: Synchronized Television Ecosystem

... Production/Distribution Ecosystem

... sTVe Ecosystem

... twitter, etc. could be integrated

... using HTML5, CSS3, etc.

... IP layer and semantic standard

... used for non-HTML platform as well

... smartTV ACR use case

... Mobile/Tablet use case

... benefits

... open ecosystem

ddahl: similar to our use case

... we don't do automatic content recognition, though

jim: do we want standard for this kind of timeline?

myra: useful to handle video stream

sheau: compared to Debbie's use case, this use case needs synchronization

jim: in case of information coming from different MCs

... identifying the order of multiple inputs would be useful in some cases

kaz: time sensitive vote using digital TV could be a use case

jim: some kind of game app as well

sheau: time can be abstracted out

... user would be able to handle it

... all the devices could be mashed up based on some specific timeline

... a primitive use case is playing a DVD

liguori: temporal?

myra: relative to show

raj: offsetting the media

sheau: additional advantage for content providers

myra: we could do that

amy: interesting to hear that
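The idea running through this exchange (store side-channel events such as tweets relative to the show rather than to wall-clock time, then release them as playback reaches the same point) can be sketched in a few lines of Python. This is only an illustration of "relative to show" and "offsetting the media" under stated assumptions: the class and method names are hypothetical, the broadcast start time is assumed to be known, and all times are milliseconds.

```python
import heapq
import itertools
from typing import List, Tuple

class ShowTimeline:
    """Hypothetical helper: replays side-channel events against a show-relative clock."""

    def __init__(self, broadcast_start_ms: int) -> None:
        self.broadcast_start_ms = broadcast_start_ms
        self._pending: List[Tuple[int, int, str]] = []  # min-heap of (offset_ms, seq, payload)
        self._seq = itertools.count()                  # tie-breaker keeps arrival order stable

    def add(self, wall_clock_ms: int, payload: str) -> None:
        # Re-express the event relative to the start of the show, not wall-clock time.
        offset = wall_clock_ms - self.broadcast_start_ms
        heapq.heappush(self._pending, (offset, next(self._seq), payload))

    def advance_to(self, playback_pos_ms: int) -> List[str]:
        # Release every pending event whose show offset playback has reached, so a
        # paused or time-shifted viewer sees it at the same point in the story.
        released = []
        while self._pending and self._pending[0][0] <= playback_pos_ms:
            released.append(heapq.heappop(self._pending)[2])
        return released

# Tweets arrive in real time while the viewer is paused...
timeline = ShowTimeline(broadcast_start_ms=1_000_000)
timeline.add(1_060_000, "goal!")
timeline.add(1_030_000, "kickoff")
# ...and are played back later, in show order, as the recording catches up.
print(timeline.advance_to(45_000))
print(timeline.advance_to(90_000))
```

A heap is used so that events arriving out of order are still released in show order; the same offsets could also drive a second-screen app following a recorded or on-demand copy of the show.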

Wei-Yun Yau - Institute for Infocomm Research

wy: under the Ministry of Trade and Industry, Singapore

... we have efficient text search but not content search

... a lot of programming in Malay in Singapore, but not everyone speaks it

... how do you put this together to allow people to watch what they want

... how to enjoy related content seamlessly

... how to avoid duplicates

... challenges: automated content discovery, user preference modeling, personalized recommendation, attractive presentation

... how can the MMI framework and EMMA be used?

... news video analytics

... low-level analytics, high-level analytics, applications

... need to know social interactions, location, demographics and device of viewers as well as information about different types of content, including structured and unstructured content

... one application is personalized recommendations

... would like to understand how this framework can be applied

masaki: what about copyright issues?

wy: not manipulating original content

... we digitize content for research

... can also do visualizations

... e.g. put information on a timeline

Yoshiaki Kozaki - NTT Advanced Technology Corporation

yoshiaki: large-scale system of persistently connected devices using WebSockets

... use cases include real-time messaging, server push notification, and M2M cloud

... each WebSocket server can handle up to 300,000 clients

... would like to standardize MMI protocols over WebSocket for interoperability

jim: if you were to have a controller in the middle layer, it looks like MMI using WebSockets for transport

craig: what about scaling?

yoshiaki: the biggest problem is memory

... but we can have scalable systems

(Day1 ends)

Day 2

- Morning Chair:

- Debbie Dahl

Discussion on new directions

- Scribe:

- Kaz Ashimura

Discovery and registration of components (Raj Tumuluri for Helena)

[Slides]

sheau: question on WG's activity

dahl, jim, raj, kaz: explain

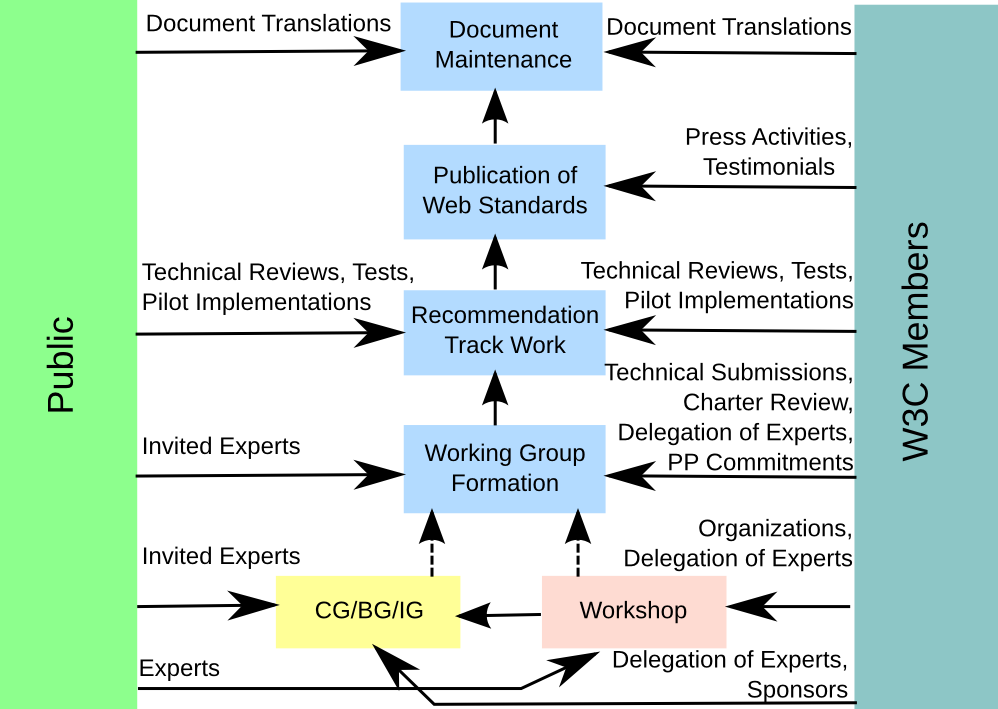

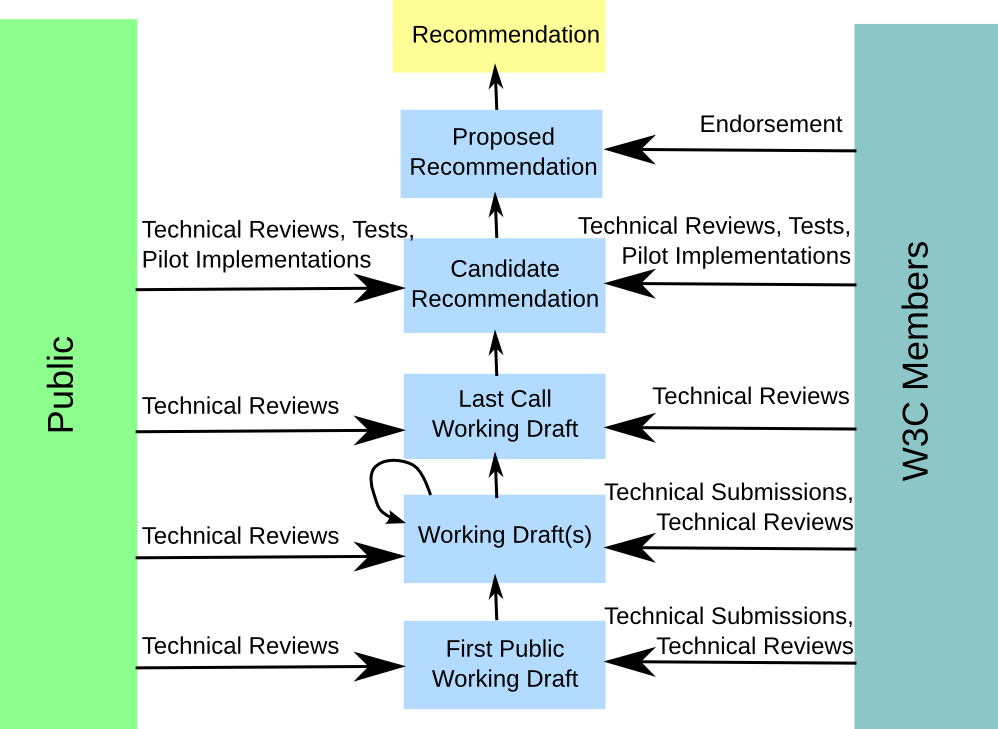

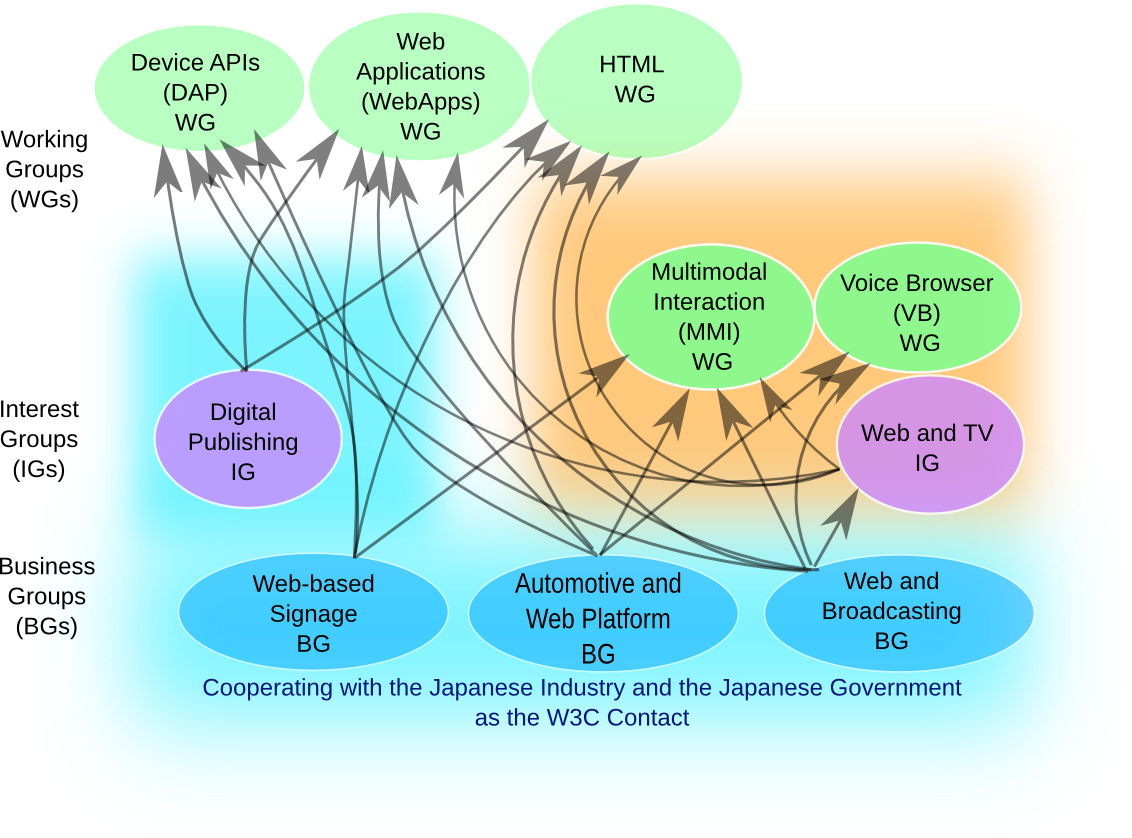

W3C Groups

wy: question on the difference between WG and IG/BG/CG

kaz: explains that only WGs can produce W3C Recommendation-track documents

W3C Recommendation Track Documents

Relationship between W3C Groups

Future EMMA use cases (Michael Johnston)

[Slides]

wy: user biometrics?

michaelJ: the EMMA payload could be biometrics information

... the question is whether there are things we can do to make it more useful

... speech rec results and biometrics info can be packaged together

wy: relationship with OASIS's work?

ddahl: would like to see the other specifications on biometrics and think about compatibility with them

michaelJ: ideally IM should concentrate on dialog management but should not handle concrete data

wy: think there might be some clarification by OASIS

MMI.next (Kazuyuki Ashimura)

kaz: mentions the Menlo Park model and secure vault

... and then explains some idea on MMI.next

... MMI over WebSocket library

... and realtime connection between IM and MCs

sheau: three IMs?

ddahl: WebSocket connection?

kaz: 30 times faster than polling

TV Anywhere (Peter Rosenberg)

[Slides]

peter: presents slides

... MVPD or DVD

... Netflix, Amazon, etc.

... multiple devices and multiple services

... authentication and authorization by third party

... many scenarios

... a consumer may use more than one service

kaz: question on quality of content

... can I watch an updated, clearer (e.g., HD remastered) version of a movie if I have a contract for the low-resolution version?

peter: don't think there is any clear consistency

sheau: a consumer may have contracts with several providers, e.g., Netflix and Hulu

... service is on-demand basis

<bevcorwin> And integration of free, open content like YouTube? Self publishers of content?

(Break)

Further discussion on selected new directions

Preliminary discussion

ddahl: topics of interest?

- Service/Device Discovery

- Specific Industry Snapshots (Use Cases)

- Related Standards such as Biometrics (FIDO, ECHONET Lite)

- Output (EMMA)

- Timing - Cross-device, Time zones

- HTML5 Integration

- Streaming

Prioritization

- Service/Device Discovery: 9

- Specific Industry Snapshots (Use Cases): 5

- Related Standards (FIDO, ECHONET Lite): 3

- Output (EMMA): 7

- Timing - Cross-device, Time zones: 4

- HTML5 Integration: 9

- Streaming: 5

ddahl: Let's start with "Service Discovery"

Service/Devices Discovery

ddahl: the MMI WG published a WG Note on discovery

... public information like flight info at airports...

jim: walking around within home and devices follow us

Use Cases:

- Devices become part of group in work place

- Second screen

- Sensors

- Medical devices

Requirements for Service Discovery:

- Very transient services

- Publishing capabilities

  - Publisher/Subscriber model

  - Semantic matching

  - Capability, Availability, Privilege

- Web/Cloud service discovery

- Is there a service discovery module?

- Service discovery on top of device discovery

- Clarify device vs service discovery

- Role of Web Service Description Language (WSDL)

- Clarify API vs Capability description

- Brokering/Privilege

- User-initiated vs App-initiated

- Semantic Web services

- We can't define APIs or capabilities but we can define where to go (like DNS)

- Need to coordinate with other groups working on Device APIs

- Relation to Web Intents?

- Role of registry service?

- Look at DLNA and ECHONET

- IPTV document

- Web&TV IG Home Network TF, Media APIs TF - should coordinate
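Several of the requirements above (very transient services, publishing capabilities, a publisher/subscriber model, and matching on capability, availability and privilege) can be made concrete with a small in-memory registry. The Python sketch below is hypothetical throughout: the class names, capability strings and privilege levels are invented for illustration, and plain set inclusion stands in for real semantic matching, which the group listed as an open requirement.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, Iterable, List, Tuple

# Hypothetical privilege levels; a real deployment would define its own policy.
PRIVILEGE_RANK = {"public": 0, "household": 1, "owner": 2}

@dataclass(frozen=True)
class ServiceDescription:
    uri: str
    capabilities: FrozenSet[str]        # e.g. {"speech-recognition", "lang:en-US"}
    required_privilege: str = "public"  # minimum privilege needed to see/use it

Callback = Callable[[str, ServiceDescription], None]

class Registry:
    """Hypothetical registry: services publish/withdraw, clients subscribe by capability."""

    def __init__(self) -> None:
        self._available: Dict[str, ServiceDescription] = {}
        self._subscribers: List[Tuple[FrozenSet[str], str, Callback]] = []

    @staticmethod
    def _matches(svc: ServiceDescription, wanted: FrozenSet[str], privilege: str) -> bool:
        # Plain set inclusion stands in for real semantic matching.
        return (wanted <= svc.capabilities
                and PRIVILEGE_RANK[privilege] >= PRIVILEGE_RANK[svc.required_privilege])

    def _notify(self, kind: str, svc: ServiceDescription) -> None:
        for wanted, privilege, callback in self._subscribers:
            if self._matches(svc, wanted, privilege):
                callback(kind, svc)

    def publish(self, svc: ServiceDescription) -> None:
        self._available[svc.uri] = svc
        self._notify("available", svc)

    def withdraw(self, uri: str) -> None:
        # Transient services may vanish at any time; tell interested subscribers.
        svc = self._available.pop(uri, None)
        if svc is not None:
            self._notify("unavailable", svc)

    def find(self, wanted: Iterable[str], privilege: str) -> List[ServiceDescription]:
        want = frozenset(wanted)
        return [s for s in self._available.values() if self._matches(s, want, privilege)]

    def subscribe(self, wanted: Iterable[str], privilege: str,
                  callback: Callback) -> List[ServiceDescription]:
        # Register for future changes and return what is already available.
        want = frozenset(wanted)
        self._subscribers.append((want, privilege, callback))
        return self.find(want, privilege)

events = []
reg = Registry()
reg.subscribe({"speech-recognition"}, "household", lambda kind, s: events.append((kind, s.uri)))
reg.publish(ServiceDescription("mc://car/asr", frozenset({"speech-recognition", "lang:en-US"})))
reg.publish(ServiceDescription("mc://tv/camera", frozenset({"camera"})))
reg.publish(ServiceDescription("mc://phone/asr", frozenset({"speech-recognition"}), "owner"))
reg.withdraw("mc://car/asr")
print(events)
```

This covers only service discovery on top of an already-known registry; how devices find the registry in the first place (the "where to go, like DNS" point) and how it relates to DLNA, ECHONET or Web Intents are left open, as in the discussion.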

ddahl: who is interested?

- Raj, Ram, Kaz

- Sheau (need to check about possible IP issue)

How to move forward?

- Possible joint task force between the MMI WG and the Web&TV IG

ddahl: good discussion on discovery

... will talk about the other topics after lunch

(group photo)

(Lunch)

- Afternoon Chair:

- Kazuyuki Ashimura

PM1: Further discussion on selected new directions (contd.)

- Scribe:

- Debbie Dahl

HTML5 integration

kaz: use cases

... second screen

debbie: voice-enabled personal assistant

myra: connected TV

ram: browser that takes multiple inputs

... input from other modalities, like cameras

jimB: this is a specific case of the general problem of getting html5 to be a modality component

debbie: what if the browser contains the interaction manager?

sheau: synchronizing across html browsers across different devices

debbie: also synchronizing with non-html displays

masao: compatibility between HTML5 and ECHONET

jimB: in contact centers we have the problem of shared browsers, sort of works, but is proprietary

sheau: our use cases require millisecond coordination

kaz: this also gets into timing

issues

... also processing order issues

debbie: sometimes you would need really fast communication between the client and the server, so WebSockets is a good protocol for that

... fast communication is necessary for games

kaz: sports games, like soccer

debbie: what if it takes 5 seconds to kick?

myra: found Ajax better than WebSockets because of scalability and firewalls; have WebSockets improved in those areas?

ram: we have used WebSockets in some of our implementations

... the challenge is getting adequate performance from the HTML GUI without taking up too much CPU

... HTML5 is still not there

... JavaScript is still too slow even with WebSockets

... no state machine tools for html5, need to use native tools

kaz: like Java or C

... how to make it easy for web developers to use MMI lifecycle events?

... and SCXML

... some developers prefer to use Javascript

ram: some Javascript frameworks have UI binding

sheau: if someone has UML, can that be turned into SCXML?

jimB: if you have a state machine, yes, but not directly from a class diagram. we tried to stick to the UML semantics in designing SCXML

amy: could you go to classes from SCXML?

jimB: you would lose some information that way because SCXML is more detailed

... you could go from UML to SCXML, you could also take SCXML and turn it into UML, but couldn't guarantee that it would be the same SCXML

... the UML would just be a visualization tool

kaz: next steps or action items?

... Javascript libraries

... there is some work going on in the Japanese chapter

jimB: what could we do with the HTML5 WG and what could we do on our own?

debbie: we could provide pointers to open source libraries

jimB: SCXML is open source

debbie: could provide the mmi library and the emma library from my demo yesterday

raj: Cueme libraries

debbie: put together a web page on the MMI website listing resources

<scribe> ACTION: kazuyuki to put together a page of resources for mmi [recorded in http://www.w3.org/2013/07/23-multimodal-minutes.html#action01]

<trackbot> Created ACTION-347 - Put together a page of resources for mmi [on Kazuyuki Ashimura - due 2013-07-30].

craig: iSpeech would probably be interested but need to find someone more on the technical side

debbie: if we could get the web page together by August 18 I could talk about it during my SpeechTEK session.

Wrap up and plans for future work

kaz: the original point of this workshop was to highlight W3C standards, especially MMI standards

... the highest priority was service discovery, also HTML5 integration, related standards, output, and timing; maybe the MMI Working Group can publish some WG Notes

... also bring some requirements to EMMA and MMI Architecture

... there is a possibility to create a new business group

debbie: what would be the topic?

kaz: service discovery, industry use cases, related standards, timing issues

sheau: the BG would focus on generating requirements

debbie: industrial requirements for multimodal interaction could be a name

sheau: this would be independent of the WG?

jimB: yes

amy: industry-driven requirements for MMI interaction

debbie: what is the process for a BG?

kaz: (reviews W3C Patent Policy)

... business group procedure is a bit different: the IP commitment is made by each person

... because the BG doesn't create any Recommendation-track documents

... a WG starts with a charter

... a BG doesn't necessarily start with a charter, it might just describe some topics of interest

... need at least 5 companies to start a BG

... non-member companies can join a BG, but have to pay a BG group fee

... small companies pay $2000, large companies pay $10,000 and individuals pay $300 (only for non-member companies)

(some discussion of the Patent Policy; the upshot is to consult lawyers before joining a BG if concerned)

kaz: more webinars?

debbie: more webinars on technical topics, service discovery would be a good topic

raj: should invite industry experts

sheau: have many perspectives, could also invite Web and TV IG people

kaz: joint meeting with MMI and Web and TV at upcoming TPAC meeting

ram: would be interested in participating in service discovery webinar

debbie: everyone should join the MMI public list

<scribe> ACTION: raj to organize webinar on service registration and discovery for mmi applications [recorded in http://www.w3.org/2013/07/mmi/minutes.php#action02]

<trackbot> Created ACTION-348 - Organize webinar on service registration and discovery for mmi applications [on Raj Tumuluri - due 2013-07-30].

<scribe> ACTION: kaz to send information about subscribing to mailing list [recorded in http://www.w3.org/2013/07/mmi/minutes.php#action03]

<trackbot> Created ACTION-349 - Send information about subscribing to mailing list [on Kazuyuki Ashimura - due 2013-07-30].

amy: is there a way to reduce the fee for pre-revenue companies?

kaz: there is an option of a "Startup Level"

(see also the "Membership Fees" page)

debbie: there is also the idea of a community group, which is free

kaz: that is possible

... thanks everyone

(Break)

Hands on session

No minutes from this session.

[Workshop ends]